30 Minutes. Same Prompt. Every Sunday. My Content Hasn't Been the Same Since.

The weekly reverse prompt habit that finds your failure modes before your readers do.

Two days ago, I taught you to work backwards. Feed AI what you have. Let it diagnose what’s broken. Stop guessing forward into the void.

Yesterday, I taught you to actually do something with the results. Triage. Verify. Turn findings into prompts. Act within 72 hours or watch the insight die.

Today we close the loop.

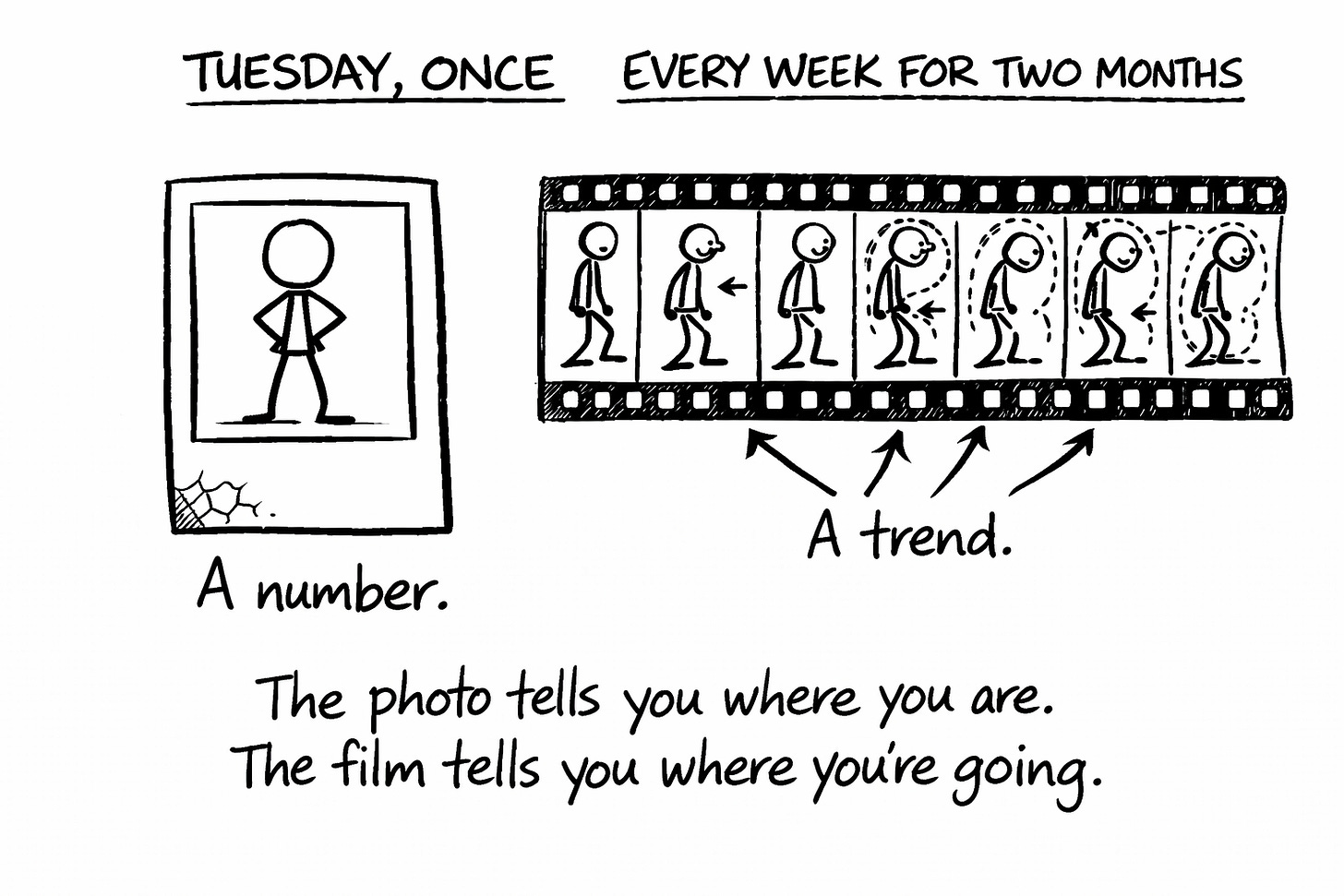

Reverse prompting once is a diagnosis. Reverse prompting weekly is a habit. Reverse prompting weekly and tracking what you find is how you stop making the same mistakes you’ve been making since 2019 without realizing it.

One reverse prompt is a diagnosis. A repeating reverse prompt is an operating system.

(Most people treat reverse prompting like a colonoscopy. Do it once, feel virtuous, avoid it for another decade.)

The Weekly Reverse Prompt Habit

Pick one area. Just one. The thing that matters most to your growth right now.

Content performance. Email engagement. Landing page conversion. Audience growth. Whatever keeps you up at 2AM wondering if you’re doing it wrong. (You might be. You probably are. I am. That’s the point. We’re going to find out.)

Now block 30 minutes. Same day every week. I do Sunday evenings because my calendar is empty and my judgment is questionable. (The second part isn’t relevant but felt important to disclose.)

Every week, same prompt, same area, fresh data. You’re not reinventing the diagnostic. You’re running the same test on updated material. Like a doctor checking your vitals at every visit instead of once when you turned 33 and never again.

Here’s the prompt I use for my weekly content review:

WEEKLY REVERSE PROMPT: CONTENT PERFORMANCE REVIEW

Here's my content from the past week:

POST 1: [Title]

- Engagement: [opens, clicks, comments, shares]

- What I was trying to do: [intent]

- How I feel about it: [honest assessment]

POST 2: [Title]

- Engagement: [opens, clicks, comments, shares]

- What I was trying to do: [intent]

- How I feel about it: [honest assessment]

CONTEXT:

- My newsletter is about: [topic and audience]

- My current growth goal: [what I'm optimizing for]

- What worked well last week: [observation]

- What felt off: [gut sense]

NOW DIAGNOSE:

1. What patterns do you see across this week's content that I might be missing?

2. Which piece performed best relative to my goals, and what specifically drove that?

3. Which piece underperformed, and what's your hypothesis for why?

4. What am I doing consistently that's helping?

5. What am I doing consistently that's hurting?

6. Based on this week's data, what should I do differently next week?

7. What question should I be asking that I'm not?

Be specific. Quote my content. No vague encouragement. I want a diagnostic, not a pep talk.

That’s mine. Yours looks different because your 2AM problem is different.

Maybe you’re diagnosing email sequences that nobody clicks through. Landing pages that get traffic but no conversions. Social content that gets likes from the same twelve people. Course launches that sell on day one and flatline by day two.

Same engine. Different fuel. Here’s the skeleton:

WEEKLY REVERSE PROMPT: [YOUR AREA] REVIEW

Here's what I'm working with from the past week:

[Paste your recent material: emails, landing pages, social posts, launch sequences, sales pages, whatever you're diagnosing]

FOR EACH PIECE, INCLUDE:

- The relevant metrics (opens, clicks, conversions, replies, sales — whatever you track)

- What you were trying to accomplish

- Your honest gut read on how it went

CONTEXT:

- What I do: [your niche, audience, and what you're building]

- What I'm optimizing for right now: [your current growth goal]

- What seemed to work this week: [your observation]

- What felt off: [your gut sense]

NOW DIAGNOSE:

1. What patterns do you see across this week's material that I might be missing?

2. Which piece performed best relative to my goals, and what specifically drove that?

3. Which piece underperformed, and what's your hypothesis for why?

4. What am I doing consistently that's helping?

5. What am I doing consistently that's hurting?

6. Based on this week's data, what should I do differently next week?

7. What question should I be asking that I'm not?

Be specific. Reference my actual material. No vague encouragement. I want a diagnostic, not a pep talk.

Same seven questions. That’s the engine. The inputs change. The diagnostic doesn’t.
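If you'd rather script the skeleton than retype it every week, here is a minimal sketch in Python of the "fixed questions, fresh inputs" idea. The names (`Piece`, `build_weekly_prompt`) are hypothetical, invented for this illustration. It only assembles text for you to paste into whatever AI tool you use. It does not call any API.

```python
from dataclasses import dataclass

# The seven diagnostic questions from the skeleton. These stay fixed week to week.
QUESTIONS = [
    "What patterns do you see across this week's material that I might be missing?",
    "Which piece performed best relative to my goals, and what specifically drove that?",
    "Which piece underperformed, and what's your hypothesis for why?",
    "What am I doing consistently that's helping?",
    "What am I doing consistently that's hurting?",
    "Based on this week's data, what should I do differently next week?",
    "What question should I be asking that I'm not?",
]


@dataclass
class Piece:
    """One item under review (hypothetical structure, not from the original post)."""
    title: str
    metrics: str
    intent: str
    gut_read: str


def build_weekly_prompt(area, pieces, what_i_do, goal, worked, felt_off):
    """Return the weekly reverse prompt as one string. Only the inputs vary."""
    lines = [
        f"WEEKLY REVERSE PROMPT: {area.upper()} REVIEW",
        "",
        "Here's what I'm working with from the past week:",
        "",
    ]
    for i, piece in enumerate(pieces, start=1):
        lines += [
            f"PIECE {i}: {piece.title}",
            f"- Metrics: {piece.metrics}",
            f"- What I was trying to accomplish: {piece.intent}",
            f"- My honest gut read: {piece.gut_read}",
            "",
        ]
    lines += [
        "CONTEXT:",
        f"- What I do: {what_i_do}",
        f"- What I'm optimizing for right now: {goal}",
        f"- What seemed to work this week: {worked}",
        f"- What felt off: {felt_off}",
        "",
        "NOW DIAGNOSE:",
    ]
    lines += [f"{n}. {q}" for n, q in enumerate(QUESTIONS, start=1)]
    lines += [
        "",
        "Be specific. Reference my actual material. No vague encouragement. "
        "I want a diagnostic, not a pep talk.",
    ]
    return "\n".join(lines)
```

Call `build_weekly_prompt(...)` with this week's pieces and print the result. Swapping the area or the pieces changes the fuel. The `QUESTIONS` list is the part you leave alone.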

The first week, you get a diagnosis. The fourth week, you start seeing patterns in your patterns. By week eight, you know your failure modes better than your therapist does. (Cheaper too. Though less comfortable chairs. And less weeping, in my case.)

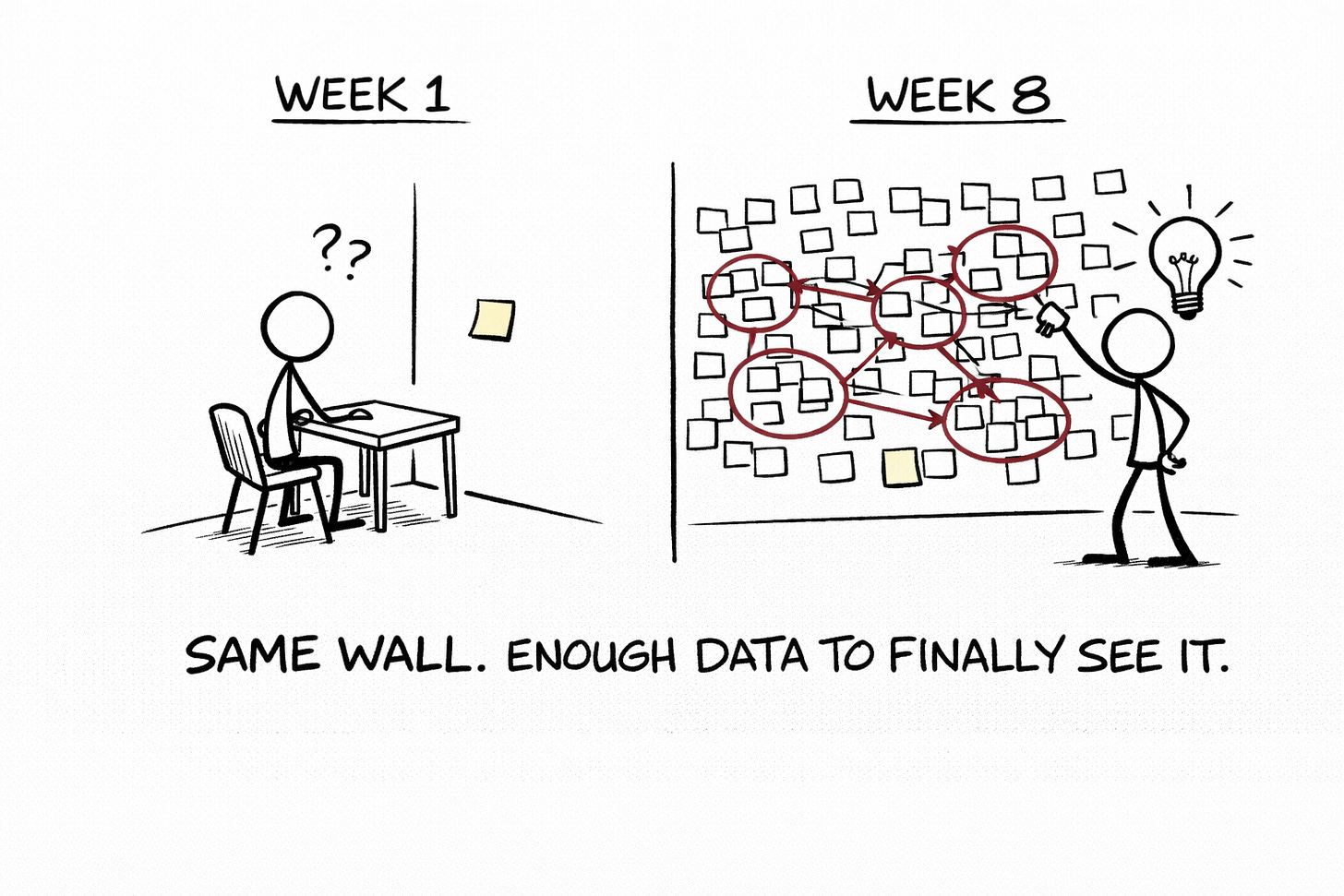

The Log That Catches You Lying to Yourself

Running weekly prompts without tracking results is like going to the gym without ever looking in the mirror. You’re doing the work. You have no idea if it’s working.

The Before/After Log fixes this. It’s stupid simple. That’s the point.

Every time you run your weekly reverse prompt:

1. Write down what AI found (the diagnosis)

2. Write down what you changed (the action)

3. Write down what happened (the result)

You could do this hungover. The original spreadsheet was FOR being hungover. I just swapped “tequila” for “weak CTAs” and kept the format.

Here’s the template:

REVERSE PROMPTING LOG

WEEK OF: [Date]

AREA: [What you're diagnosing]

WHAT AI FOUND:

- [Finding 1]

- [Finding 2]

- [Finding 3]

WHAT I CHANGED:

- [Action 1]

- [Action 2]

- [Action 3]

WHAT HAPPENED:

- [Result 1]

- [Result 2]

- [Result 3]

NOTES:

[Anything else worth remembering. Gut feelings. Surprises. Moments of existential doubt about your entire content strategy. All valid.]

---

WEEK OF: [Date]

[Repeat]

Keep this in a single doc. Don’t get fancy with Notion databases or Airtable automations. (Not yet. That’s a trap. The trap is called “I’ll set up the perfect system first” and it’s where productivity dreams keel over and die.)

After 4-6 weeks, something magical happens. You stop seeing individual findings and start seeing meta-patterns. The recurring themes that show up across multiple diagnostics. The blind spots that keep appearing no matter how many times AI points them out.

That’s the real gold. Not any single diagnosis. The pattern across diagnostics.
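To make "the pattern across diagnostics" concrete, here is a rough sketch that counts how many weeks each finding shows up in. It assumes you write findings as short, consistent labels (say, "weak CTA" every time, not a new phrasing each week). That labeling habit is my assumption, and `recurring_findings` is a hypothetical helper name.

```python
from collections import Counter


def recurring_findings(weekly_findings, min_weeks=2):
    """Return (label, weeks_seen) pairs for findings seen in at least min_weeks weeks.

    weekly_findings is a list with one list of finding labels per week.
    """
    counts = Counter()
    for findings in weekly_findings:
        # Normalize case and whitespace, and use a set so a label
        # repeated within the same week is only counted once.
        counts.update({f.strip().lower() for f in findings})
    recurring = [(label, n) for label, n in counts.items() if n >= min_weeks]
    # Most frequent first, alphabetical order to break ties.
    return sorted(recurring, key=lambda pair: (-pair[1], pair[0]))
```

This only catches exact label matches. Spotting that "weak CTA" and "no clear next step" are the same problem still takes a human read, or the meta-analysis prompt.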

Closing the Loop (Reverse Prompting Your Reverse Prompts)

Here’s where it gets recursive.

After 6-8 weeks of logging, you have data. Real data. Not vibes. Not hunches. A documented record of what AI found, what you did about it, and what happened.

Now feed that back into AI.

You’re reverse prompting your reverse prompts. Diagnosing your diagnostic history. Looking for the pattern in the patterns.

META-ANALYSIS: REVERSE PROMPTING LOG REVIEW

Here's my reverse prompting log from the last [6-8] weeks:

[Paste your entire log here]

CONTEXT:

- My newsletter/content is about: [topic]

- My primary goal right now: [what you're optimizing for]

- What I think my biggest recurring issue is: [your hypothesis]

NOW GO META:

1. What patterns show up across multiple weeks that I might have missed looking at individual entries?

2. What findings keep recurring that I'm clearly not fixing permanently?

3. What changes worked well, and what do those successes have in common?

4. What changes didn't work, and what do those failures have in common?

5. Where am I making the same mistake repeatedly without realizing it?

6. What's the ONE thing I should focus on for the next 4 weeks based on this data?

7. What blind spot does this log reveal about how I think about my content?

Be ruthless. I've been staring at this too long to see it clearly. That's why I'm asking you. Find the thing I'm avoiding.

The first time I ran this, AI pointed out that I’d diagnosed “weak CTAs” three separate times over two months and only actually fixed them once. The other two times I’d triaged them as “important but not urgent” and then forgotten.

Turns out my triage system had a leak. The important-but-not-urgent bucket was where insights went to quietly suffocate while I congratulated myself on having a system.

(I fixed the CTAs. Finally. My conversion rate went up 23%. Two months late, but still.)
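That kind of leak is easy to check for mechanically. Here is a minimal sketch, assuming you note for each logged finding whether you actually acted on it. The `(label, acted_on)` pair format and the name `triage_leaks` are hypothetical, made up for this example.

```python
from collections import defaultdict


def triage_leaks(entries):
    """Find findings that were flagged repeatedly but not acted on every time.

    entries is an iterable of (finding_label, acted_on) pairs, one pair
    per time a finding appeared in the log. Returns a dict mapping each
    leaking label to (times_flagged, times_acted_on).
    """
    flagged = defaultdict(int)
    acted = defaultdict(int)
    for label, acted_on in entries:
        key = label.strip().lower()
        flagged[key] += 1
        if acted_on:
            acted[key] += 1
    return {
        key: (flagged[key], acted.get(key, 0))
        for key in flagged
        if flagged[key] >= 2 and acted.get(key, 0) < flagged[key]
    }
```

Fed three "weak CTAs" entries with only one marked as acted on, it reports that label as flagged three times and acted on once.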

The Compound Effect

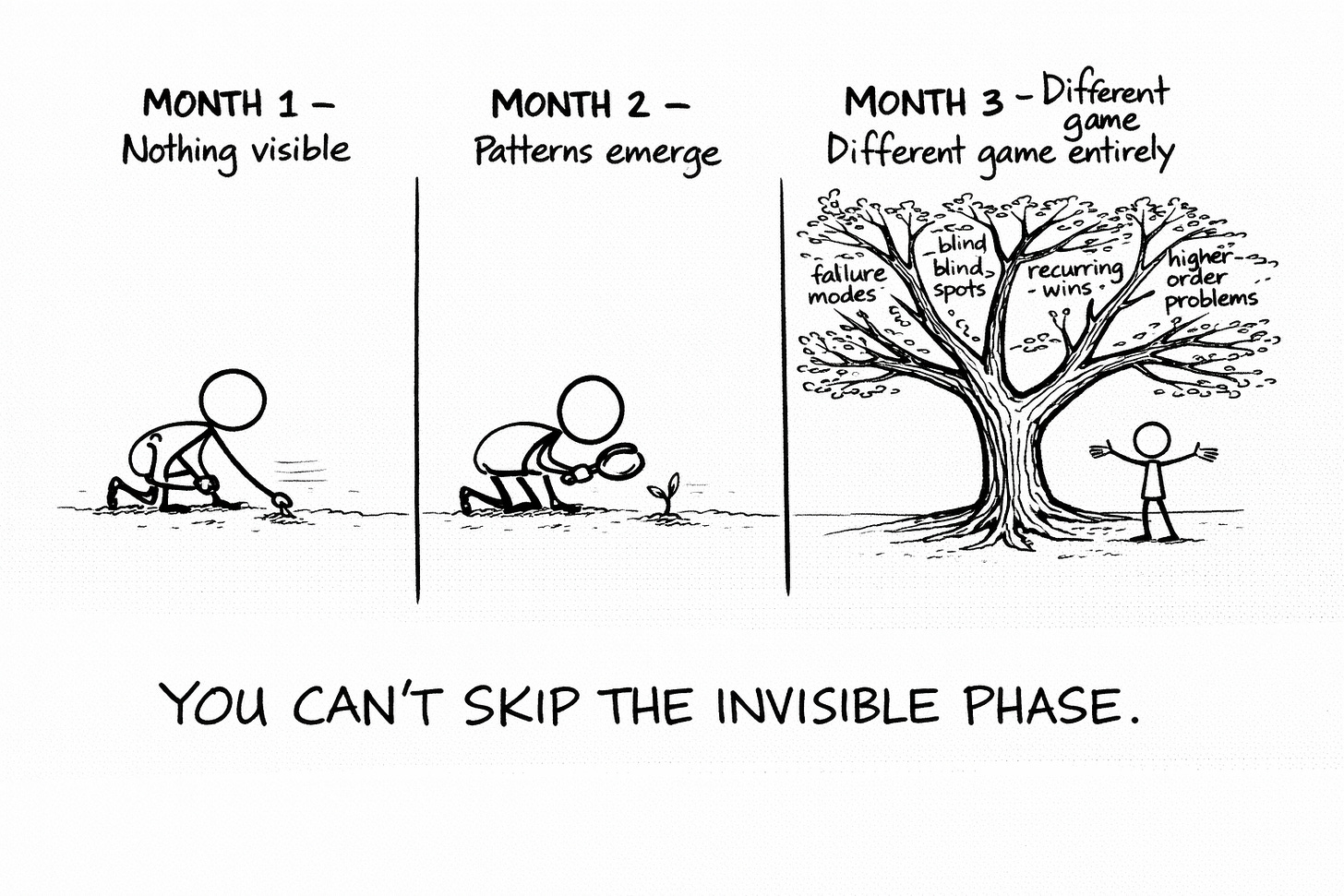

After 30 days (4 weekly sessions): You have baseline data. You know what AI consistently flags. You’ve made a few quick fixes. The log exists but doesn’t reveal much yet. This is the “showing up to the gym” phase. Progress is invisible. Trust the process.

After 60 days (8 weekly sessions): Meta-patterns emerge. You’ve run the recursive analysis at least once. You know your top 2-3 recurring failure modes. You’ve probably fixed one permanently and are working on the second. Your results are markedly different from where you started, even if you can’t articulate exactly why.

After 90 days (12 weekly sessions): You’re playing a different game. The problems AI flags now are higher-order problems. The obvious stuff is gone. You’ve built an intuition for what’s going to get flagged before you even run the prompt. Your log is a strategic asset. You can look back at January and barely recognize yourself.

The Wall You’re Going to Hit Around Week 4

Three posts in. You’ve got the framework. The triage system. The weekly habit. The log. The meta-analysis loop.

And somewhere around week 3 or 4, you’re going to hit a wall.

AI will diagnose a problem. You’ll know the fix. And when you ask AI to help you implement it, the result will be technically correct and completely soulless. It’ll solve the problem the way anyone would solve it. Not the way you would solve it.

That’s the calibration gap.

Reverse prompting finds what’s broken. But when you bring AI in to help fix it — rewrite the email, rework the landing page, restructure the launch sequence, redraft the post — it doesn’t know how you do things. Your specific patterns. Your instincts. The way you build an argument, phrase a CTA, sequence an offer. It just knows how those things are generally done. So every fix drifts toward the statistical mean. Every implementation sands off exactly the edges that made your work yours.

That’s what Ink Sync solves.

Feed → Reflect → Correct. You give AI your patterns, review the output, correct specifically where it drifts, and repeat until the implementations actually feel like yours. Not like AI trying to help a generic creator. Like AI trying to help you specifically.

The workshop is free while the link is live: Ink Sync: Stop Fixing AI Output. Start Calibrating It.

Reverse prompting is the diagnostic engine. Ink Sync is what makes sure you don’t lose yourself while fixing what the diagnostic found.

Use both. They’re the same system pointing at different problems.

🧉 What’s the one area of your content you’d run a weekly diagnostic on if you could only pick one? Drop it below. I’m curious what’s keeping people up at night. (Besides the obvious existential dread. We all have that. It’s not special.)

Crafted with love (and AI),

Nick "Three Columns and a Drinking Problem" Quick

PS… The Ink Sync Workshop won’t be free forever. If you’re building a reverse prompting habit and want the fixes to actually sound like you, grab it now: Ink Sync: Stop Fixing AI Output. Start Calibrating It.

PPS… This was the finale of a three-part series. If you got value from any of it, hit the like button, leave a comment, subscribe if you haven’t, and share with someone still typing “be authentic and engaging” into ChatGPT and wondering why everything sounds like a LinkedIn post from 2019.

📚 The Reverse Prompting Trilogy

Part 1: Stop Writing Prompts. Reverse-Engineer Them. — The backwards technique that teaches AI your patterns in minutes instead of months

Part 2: You Ran the Reverse Prompt. Now What? — How to turn AI’s diagnosis into action before the insight dies of neglect

Part 3: One Reverse Prompt Is a Trick. A Weekly One Is an Operating System. — The weekly habit that compounds ← You are here
