Writing Well Is Now a Crime

A man with a stutter got publicly humiliated for using the word "juxtaposition." He's not the only one.

Jared Hewitt has a stutter.

Speech has never come easy. So he did what a lot of people do when one form of expression is hard: he got really, really good at another one. He built a written vocabulary over his entire life. Word by word. Sentence by sentence. The kind of slow, deliberate construction that happens when you can’t rely on your mouth to do the job.

Hewitt works at a daycare. Last winter, he wrote an incident report. Used the words “juxtaposition” and “circumstantial.”

His coworker accused him of using AI. Publicly. In front of children.

Two words. That was the evidence. A man with a speech impediment who’d spent decades compensating through precise writing got humiliated for it. Because apparently, a daycare worker isn’t supposed to have a vocabulary.

Here’s the part that should make you uncomfortable. Your first instinct, reading those two words in a daycare incident report, might have been the same as the coworker’s. That sounds like ChatGPT. Clean grammar. Formal register. Careful word selection.

You know. The things English teachers spent decades telling us to aspire to.

(Congratulations. We’ve successfully made good writing a liability.)

The Accusation Epidemic

Hewitt isn’t alone. Not even close.

New York Magazine just published a feature documenting a growing phenomenon: real humans getting falsely flagged as AI writers. The pattern is consistent, and it’s ugly.

The historical novelist Kerry Chaput posted on social media about her neurogenic cough (a real medical condition she actually has). A reader flagged it as ChatGPT. Because writing clearly about your own health condition is suspicious now.

A writer for the New York Times Modern Love column (arguably the most competitive personal essay slot in American publishing) wrote about her marriage falling apart. Within days, writers on social media were publicly calling the piece AI slop. The evidence? Short declarative sentences. Parallelisms. A rhythmic quality that pattern-matched to what people have decided LLMs sound like.

One critic posted that it read “EXACTLY like AI slop” and called it “just sad.”

You know what’s actually sad? A woman wrote honestly about the moment she stopped fighting for her marriage. About the specific, visceral, human experience of giving up on something that was destroying her. And the internet’s first instinct wasn’t to engage with any of it.

It was to run the text through their internal slop detector and declare her a fraud.

(The Times didn’t pull the essay. Because, you know, a human wrote it.)

Who’s Actually Getting Caught in the Net

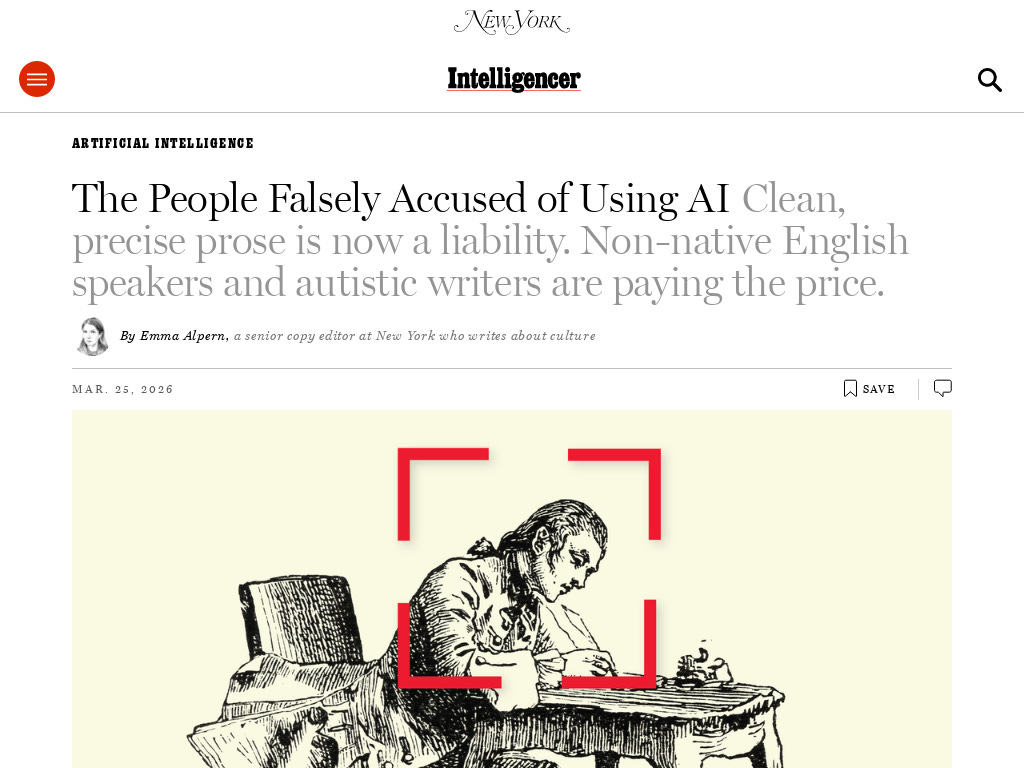

Here’s the pattern that New York Magazine documented. The people being falsely accused are disproportionately:

Non-native English speakers who learned textbook English because textbooks were all they had. Their writing is precise because nobody taught them the casual register. They write the way they were taught. And now that precision reads as artificial.

Autistic writers whose natural communication style is structured, logical, and literal. The “AI accent” (even tonality, perfect grammar, formal vocabulary) is actually how many neurodivergent people have always communicated. Long before ChatGPT existed.

Anyone who spent years getting better at sentences. Hewitt’s entire life was a project of compensating for verbal limitation through written precision. His reward for that effort? Public accusation.

The reporter describes a culture where people are “going off vibes.” No evidence. No methodology. Just the feeling that something is too clean. Too polished. Too... competent.

We trained machines on formal, polished human writing. Then we turned those machines back on the humans and started accusing them of being machines. The people paying the price are the ones who communicate the way AI was trained to mimic.

(The circularity of that should keep you up at night.)

You’ve Built a Conformity Engine in Your Head

This is the part that matters for you specifically. Not as a bystander. As a creator.

It’s not just the automated AI detection tools that are broken (they are, spectacularly, with false positive rates that would get a medical test yanked from the market). It’s that you and I and everyone reading this have internalized the same broken logic. We’re all running our own informal detection algorithms now. Every email. Every post. Every essay someone shares.

We’ve crowdsourced paranoia. Everyone’s a fraud detective. Nobody has a badge.

And the “evidence” we’re using? Precision. Clean sentences. Careful word choice. Logical structure. The exact qualities that used to signal “this person can write” now signal “this person is probably a robot.”

I wrote about this pattern a few months ago. Your readers are already making snap judgments about your writing. But I was focused on the tells that signal actual AI involvement (the smoothed-out voice, the missing fingerprints, the absence of what I call you-shaped holes). What I didn’t account for was the reverse problem.

Readers punishing writing that’s too good because good now equals suspicious.

What This Means for Your Work

Here’s why this isn’t just a human interest story about defending falsely accused writers.

Your readers are making this same judgment call on YOUR work. Every time you publish.

If you co-write with AI (which, if you’re reading this newsletter, you probably do or you’re thinking about it), you’re already in the crosshairs. Not because your work is slop. Not because you’re cutting corners. But because the paranoia doesn’t distinguish between slop factories churning out garbage and careful creators using AI as a collaboration tool.

The tempting reaction is exactly wrong: perform your humanity. Add deliberate typos. Write messier on purpose. Throw in some profanity to prove you’re breathing. (Guilty. Though in my case the profanity is just... me.)

That’s not authenticity. That’s a costume. You’re still performing for the detector. You’ve just switched from performing “professional” to performing “human.”

Here’s a test. Read this sentence:

The assessment of quarterly performance metrics reveals a consistent upward trajectory across all verticals.

Human or AI?

You just made a judgment. And you made it in under a second. Based on nothing but pattern recognition. The same pattern recognition that destroyed Jared Hewitt’s afternoon. The same pattern recognition that turned a woman’s essay about her broken marriage into a public accusation of fraud.

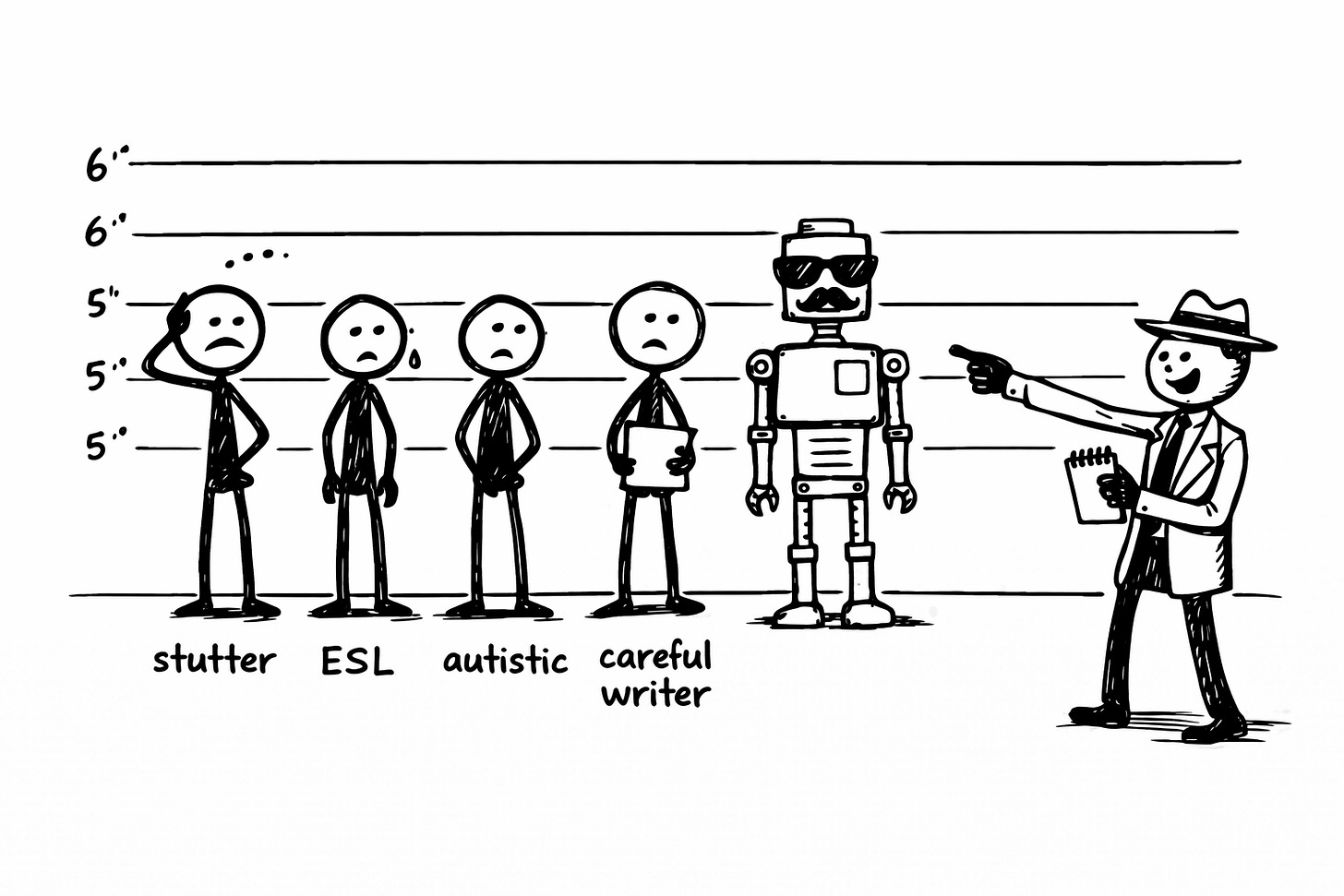

The actual fix isn’t messiness. It’s specificity. Not “how to sound human enough to avoid detection.” But how to sound so specifically like yourself that the question becomes irrelevant.

The reference that makes your best friend text “why are you like this.” The take so specific that three people on earth agree with you and two of them are wrong. The sentence you type, stare at, and publish anyway because deleting it would be cowardice. That’s not reproducible by a machine. That’s scar tissue shaped into prose.

That’s what a Voiceprint documents. Not “how to dodge the detectors.” But “how to be so unmistakably you that nobody bothers asking.”

Jared Hewitt didn’t need to dumb down his vocabulary. He needed a world that evaluates writing by what it says, not by whether it pattern-matches to a paranoid checklist.

We’re not in that world yet.

But we could be.

Tomorrow: The Other Side of the Coin

Today I showed you the people being punished for writing too well without AI.

Tomorrow I’m going to show you the people who’ve been protected for having someone else write for them entirely. For over a century. At half a million dollars a pop.

Same act. Different price tag. Wildly different consequences.

If you think the false accusation problem is bad, wait until you see the permission structure that operates alongside it.

🧉 Pour one out and tell me: Have you ever been accused of using AI when you didn’t? Caught yourself suspecting someone else? What was the “evidence” that triggered it? I want to hear these stories.

The question was never “did a human write every word?” It was “did someone give a shit about what it says?”

Crafted with love (and AI),

Nick “Falsely Convicted at 98.7% Confidence” Quick

PS... Jared Hewitt didn’t need to dumb down his vocabulary. And you don’t need to dumb down your AI output. You need to calibrate it. The Ink Sync Workshop teaches the three-step loop that makes AI match your patterns instead of guessing at them. It’s free.

PPS... Like this? Share it with a writer who’s been sweating every time they use a semicolon. They need to hear this. And if you haven’t subscribed yet, tomorrow’s Part 2 involves a million-dollar scandal and a 118-year-old con. You’ll want to be here.

The reporting that sparked this comes from Emma Alpern's feature in New York Magazine. She documents Jared Hewitt, Kerry Chaput, and several others who've been falsely flagged as AI writers. Read the full piece here:

https://nymag.com/intelligencer/article/the-people-getting-falsely-accused-of-using-ai-to-write.html

The NYT Modern Love accusation against Kayla Gilgan was covered by Futurism:

https://futurism.com/artificial-intelligence/new-york-times-accused-ai-article

