The $1 Million Cheater

Ghostwriting has been elite AI for a century. We only got mad when it became free.

In 1908, a newspaper in Lincoln, Nebraska published a story about a woman who paid someone $5,000 to write a book under her name.

The paper called this person a “ghost.”

That was 118 years ago. Adjusted for inflation, $5,000 in 1908 is roughly $170,000 today. For one book. Written entirely by someone else. Published under the name of a person who didn’t write a word of it.

Nobody went to prison. Nobody launched an investigation. Nobody even seemed particularly bothered.

They just coined a fun little word for it and moved on.

(The word stuck. The discomfort didn’t. Funny how that works.)

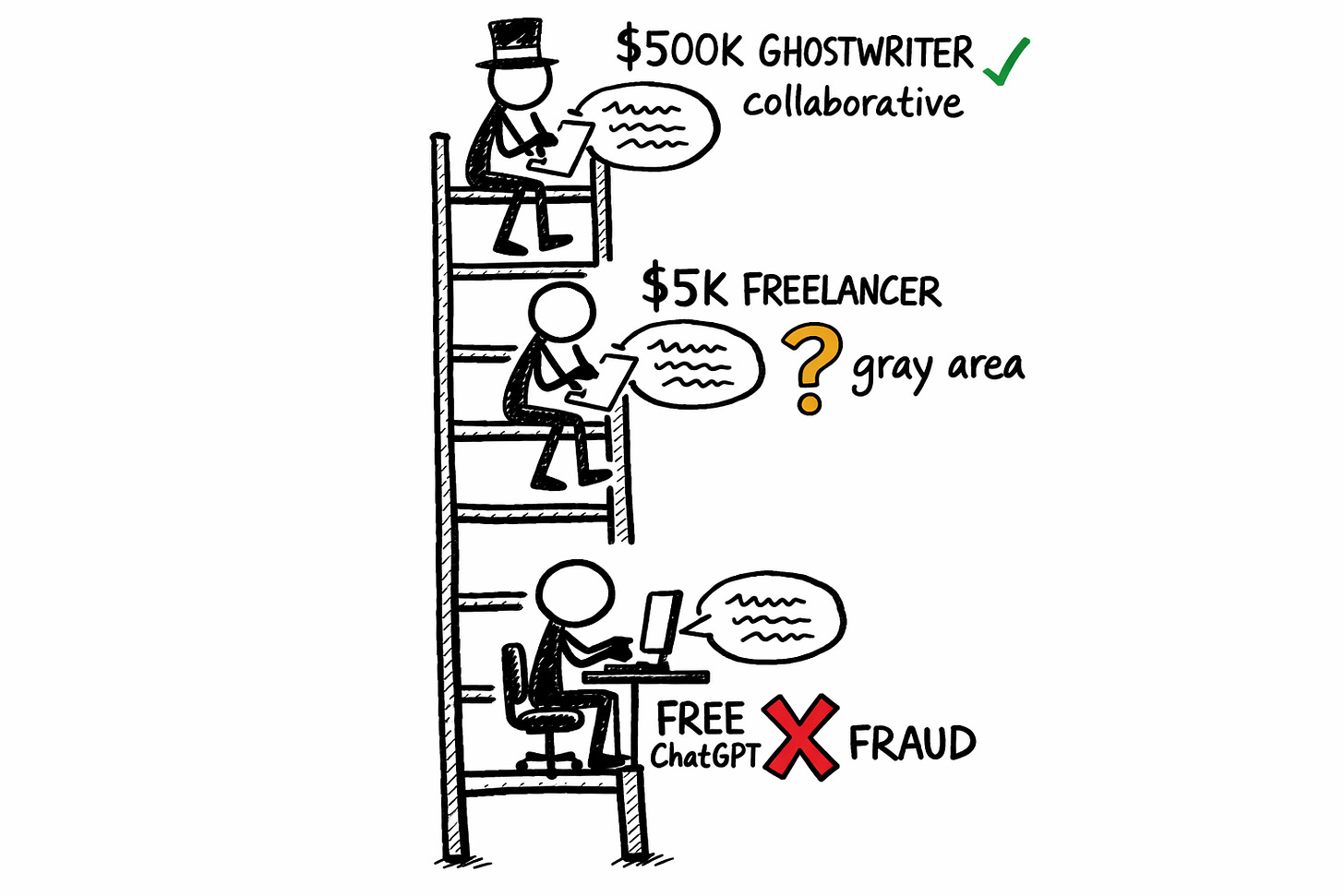

The Legitimacy Ladder

Let’s play a quick game. I’ll describe an act. You tell me if it’s cheating.

A person has an idea for a piece of writing. They don’t have the skill, time, or bandwidth to execute it themselves. So they bring in outside help. The helper does most of the actual writing. The person whose name goes on the finished product reviews it, maybe tweaks a few things. Then publishes it as their own.

Cheating?

Your answer depends entirely on how much the helper cost.

If the helper is a $500,000 ghostwriter, it’s called “collaboration.” There are agencies for this. Contracts. An entire industry with trade conferences and LinkedIn thought leaders and probably a podcast called something like Ghost in the Machine hosted by two guys both named Brad. (I haven’t checked. I’m afraid to check. It definitely exists.) The ghostwriter gets a polite mention in the acknowledgments (maybe) and a wire transfer. Everyone’s comfortable.

If the helper is ChatGPT, it’s called “cheating.” Universities launch investigations. Students get expelled. Writers get publicly shamed on social media by other writers who almost certainly use Grammarly but draw the line at large language models because apparently the authenticity threshold is somewhere between autocomplete and autocomplete-but-bigger. (Nobody can tell you exactly where. But they’re very sure you crossed it.)

Same act. Same outcome. Same relationship between the person with the idea and the entity that executed it.

Different price tag. Different reaction.

(If you’re feeling defensive right now, good. Sit with it. I’ll wait.)

The Vanderbilt Incident (Or: How to Get Investigated for Being Efficient)

In February 2023, Vanderbilt University needed to send an email to its student body. A campus shooting had occurred at Michigan State. The moment called for empathy, community, human connection.

An administrator drafted the email. Or rather, had it drafted. In tiny type at the bottom: “paraphrased from OpenAI’s ChatGPT.”

You can probably guess what happened next.

A student called it “sick and twisted irony.” (Fair.) The university launched a professionalism and ethics investigation. (Also fair.) An associate dean described the situation as “learning pains tied to the adoption of new technology.” (The most university-administrator sentence ever spoken. Someone should check if that was generated by AI.)

What if they’d hired a ghostwriter to draft it instead?

Not a chatbot. A human ghostwriter. Someone who writes emails and speeches for institutions professionally. (These people exist. They cost between $500 and $5,000 per piece. They are, effectively, the ChatGPT that went to Dartmouth.)

If Vanderbilt had paid someone $3,000 to write that exact same email, word for word, and put the dean’s name on it, nobody would have blinked. The investigation wouldn’t have happened. The student wouldn’t have called it “sick and twisted.” It would’ve been called “professional communications.”

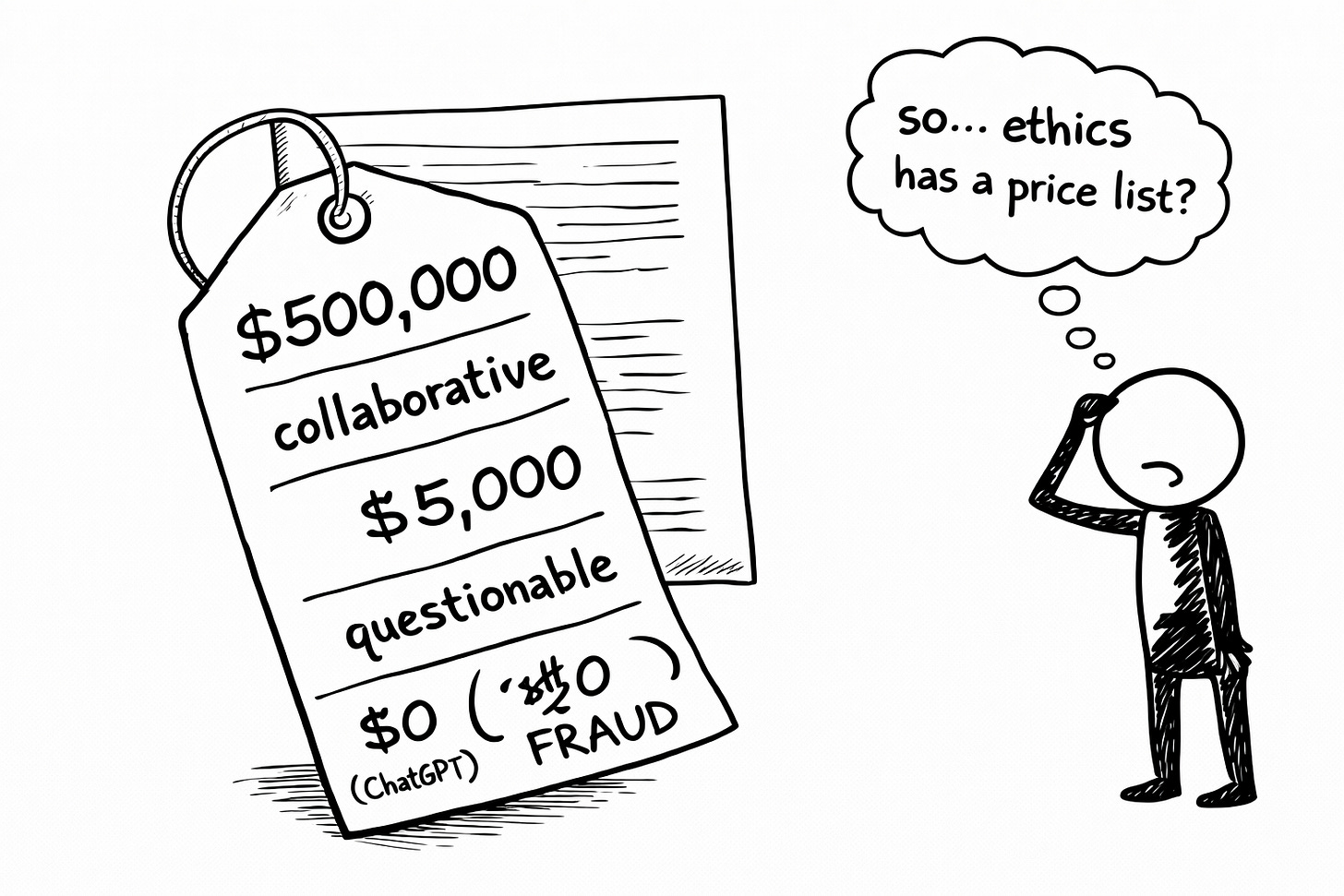

(Same words. Same lack of personal authorship. But one version costs money and the other is free. And apparently morality is a premium feature.)

The Guilt Is the Tell

Here’s a detail from the research that hit me sideways.

An anonymous Reddit poster wrote: “I feel so guilty and ashamed whenever I use a ghostwriter now.”

Not a ghostwriter and AI. Just a ghostwriter. A human one. Paid for. Consensual. Contractual. Two adults agreeing to exchange money for words in a transaction as old as publishing itself.

And this person still feels like a fraud.

Meanwhile, Millie Bobby Brown published a novel in 2023 with a credited co-writer. (Industry speak for “ghostwriter who negotiated a slightly better deal.” The credit being: you get your name on the cover in a font size roughly equivalent to the copyright notice.) The internet’s response was predictable and vicious: “You should be ashamed.” “The ghostwriter’s name should be on the cover.”

One veteran ghostwriter (110+ books, six-figure career) has been refreshingly blunt about where the industry is headed: ghostwriting will survive by rebranding as a “boutique service for elites.” Not his critics saying this. Him. The guy whose livelihood depends on it. (Essentially: we’ll be fine because rich people will still pay us to do the thing everyone else gets crucified for doing with free tools.)

Not because the human ghostwriter’s output will be better. But because paying a human signals something that paying zero for AI doesn’t.

Status. Exclusivity. The luxury of not needing the free version.

(It’s like first class and economy on the same plane. Same destination. Same turbulence. One of them gets a hot towel and moral superiority.)

This is what I mean when I say the authenticity debate is broken. We haven’t actually figured out the ethics of collaborative writing. We’ve just built permission structures based on prestige and price, then decorated them with enough industry language to make them feel principled.

The Class War You’re Already Fighting

Yesterday’s post showed you the people getting punished for writing too well without AI. Jared Hewitt. Kerry Chaput. The NYT Modern Love essayist. Their crime: precision. Their sentence: public humiliation.

Today’s post shows you the opposite. People who’ve been protected for having someone else write for them entirely. Their shield: money, prestige, and 118 years of industry infrastructure designed to make it acceptable.

I wrote about this class divide a few months ago. The split between “artisanal human” writers and “AI-assisted” writers was already forming. But I underestimated something. The divide isn’t just between AI users and AI refusers. It runs deeper than that.

It’s between people who can afford the acceptable version of writing help and people who can’t.

The ghostwriter charges six figures. The freelance editor charges four. Grammarly charges $30 a month. ChatGPT is free.

And the cheaper the tool, the more suspicious the output.

(If that doesn’t smell like a class system to you, I don’t know what to tell you. Maybe check if your detection algorithm has a bias setting. It does. It’s factory-installed.)

The $500,000 ghostwriter survives. The $5,000 ghostwriter gets automated. And the person using ChatGPT for free gets called a cheater by someone whose LinkedIn thought leadership was written by an agency.

(The call is coming from inside the agency.)

So What Are We Actually Measuring?

Here’s where both days of this series converge.

Saturday: we punish people for writing too well. Because precision signals AI.

Sunday: we protect people for not writing at all. Because paying someone signals legitimacy.

Neither one measures the thing that actually matters.

We’re not evaluating quality. We’re not evaluating honesty. We’re not even evaluating effort. We’re evaluating conformity in one direction and class in the other. And the people caught in between (the ones actually trying to produce good work with whatever tools they have access to) are the ones getting squeezed from both sides like toothpaste in a tube that’s been rolled from both ends.

(Sorry. That metaphor got away from me. But you know the feeling. You know exactly the feeling.)

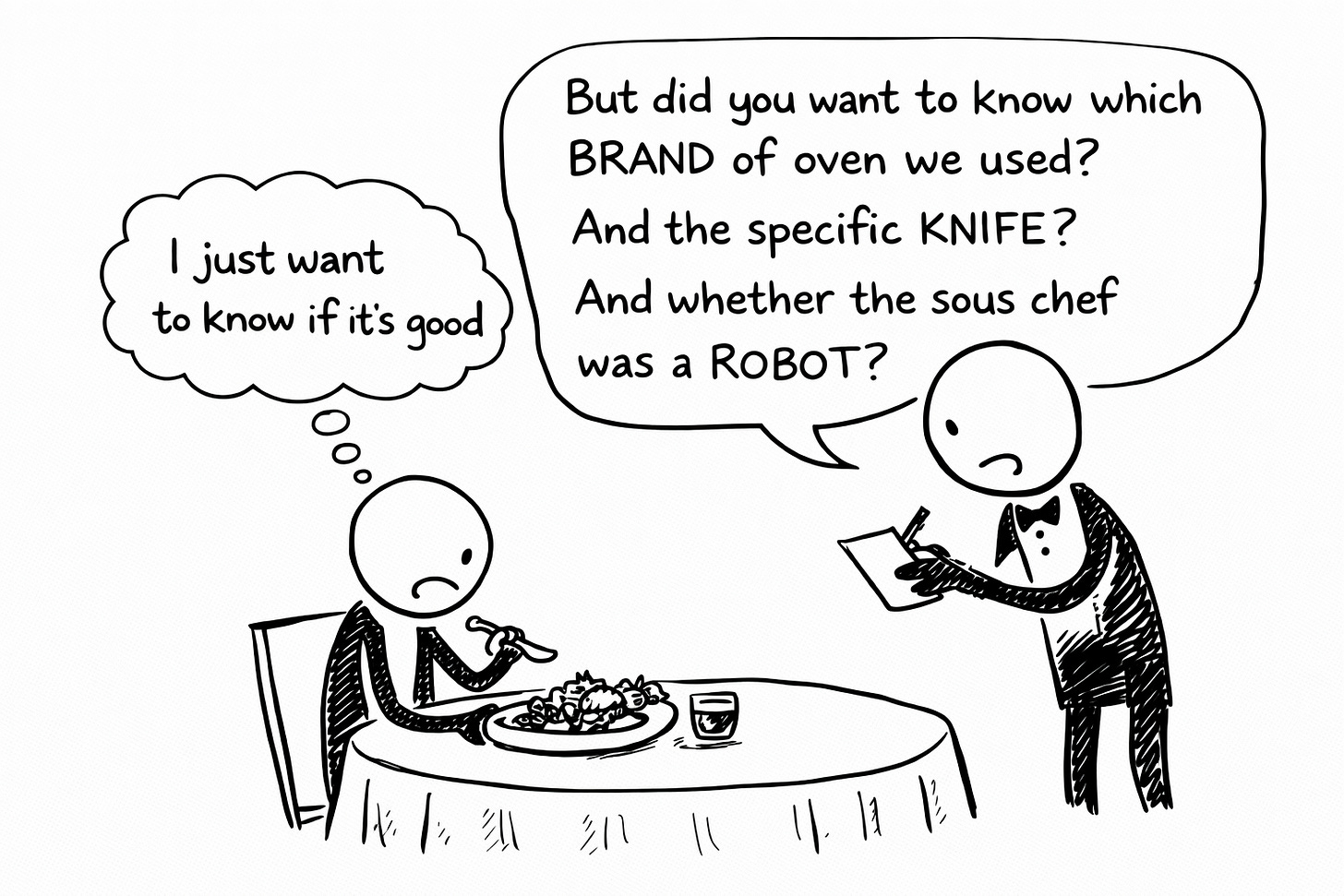

This is the argument for co-writing with AI. Not “AI is fine, relax.” Not “tools don’t matter.” The argument is that we need an entirely different framework for evaluating writing. One that doesn’t care whether a ghost or a model helped produce it. One that asks one question:

Did someone give a shit about what this says?

That’s it. The entire evaluation.

Did the person whose name is on it care enough to shape it? To review it? To ensure the ideas are theirs, even if every sentence isn’t? To put their fingerprints on the work so thoroughly that the tool becomes irrelevant?

The co-writing middle path I keep arguing for isn’t a compromise. It’s the only honest position left standing. Because it refuses to pretend. It doesn’t hide the ghostwriter in the acknowledgments. It doesn’t bury “paraphrased from ChatGPT” in tiny type. It says: “I used AI. I also did the work. Here’s the result. Judge the result.”

That’s more transparent than the ghostwriter who hides behind “special thanks to.” More honest than the executive whose “thought leadership” was written by an agency and a prayer. More ethical than the university that disclosed its AI use in fine print and still got investigated for it.

(Vanderbilt’s real crime wasn’t using AI. It was disclosing it in a font size that implied they knew it was wrong. The tiny type was the confession, not the tool.)

The Disclosure Power Move

I want to leave you with a reframe.

Look at the sign-off at the bottom of this post, the line that mentions AI. It’s been there since day one. It was there when my audience was me, my chihuahua, and whatever Google bot happened to crawl past. It’ll be there if I ever hit 50,000.

That line isn’t a confession. It’s a position statement.

I co-write with AI. I also document my voice patterns so precisely that the AI follows my map instead of its own default settings. (Which, for the record, default to “LinkedIn influencer who recently discovered stoicism.” Nobody wants that.) I review, edit, rewrite, rethink, and occasionally delete everything the AI produced and start over.

Because adequate is my mortal fucking enemy.

I publish daily using a system I built, and I show the receipts for how it works.

The ghostwriting industry has spent 118 years building elaborate structures to make “someone else helped me write this” acceptable. They did it with contracts, agencies, NDAs, and polite euphemisms hidden in acknowledgment sections that nobody reads. (Shoutout to everyone who’s ever read an acknowledgments page. All nine of you. You’re doing the Lord’s work.)

I do it with six words at the bottom of every post.

Which one is more honest?

You already know the answer. You knew it before I asked.

🧉 Pour one out and tell me: Where do you draw your personal line between “acceptable help” and “cheating” in writing? Is it the tool? The cost? The disclosure? Something else entirely? Drop your take. No wrong answers. (Except “real writers don’t use any help,” which is historically illiterate and also probably ghostwritten.)

The permission to use AI was never anyone’s to grant. The only permission that matters is the one you give yourself: to do the work, use the tools, and put your name on something worth reading.

Crafted with love (and AI),

Nick “$0 Ghostwriter, Full Disclosure” Quick

PS... If you missed yesterday’s Part 1, go read Writing Well Is Now a Crime first. Today’s piece hits harder when you’ve already met Jared Hewitt. (And yes, you probably would have flagged his incident report too. It’s okay. That’s the point.)

PPS... If someone forwarded this to you: welcome. I publish daily. I disclose my AI use. I don’t charge six figures for it. The Ink Sync Workshop is the free starting point. And subscribing costs exactly $0, which according to today’s piece makes me suspicious. Subscribe anyway.

Today’s piece is built on research by Emily Hodgson Anderson, a USC professor who’s literally writing the book on ghostwriting. The book is called Ghostwriting: A Secret History, from God to A.I., which is a title I wish I’d thought of first.

Her article:

https://fortune.com/2026/03/27/ai-backlash-slop-debate-ghostwriting-history-plagiarism-cheating/

If you missed yesterday’s Part 1, start there. Get angry. Then come back here and get angrier.

https://nickquick.substack.com/p/writing-well-is-now-a-crime