Your Draft Got Flagged As AI. The Detector Isn't Wrong. You Are.

Eight tells that make clean human writing look like a robot wrote it, plus the pre-publish checklist that fixes them in fifteen minutes.

“This is really well-written. Which AI did you use?”

Sounds like a compliment. Lands like a slap in the face from someone still smiling.

You already know where this goes. You published something real. Rewrote the opening until your eyes stopped seeing words. Cut the paragraph you loved because loving it wasn’t enough to justify what it was doing to the one behind it. Hit publish with that quiet, dangerous satisfaction of someone who gives a shit.

And then, before the dopamine wears off: “Which AI did you use?”

(The correct response is arson. The socially acceptable response is “Ha, none actually, just me!” while your soul quietly exits through a side door it built for exactly this occasion.)

Here’s why that question is going to follow you now. Not because people are mean. Not because they’re suspicious. Because your best work and the slop factory’s default output have converged to the point where a genuine human being can’t tell the difference by reading alone. Your prose and the machine’s prose showed up to the same party in the same outfit. And the machine got there first. So now you look like the one who copied.

Let me be precise about what’s broken here, because it’s not AI. I co-write with AI every day and I’ll be doing it again tomorrow. The problem is work that’s been sanded so smooth there’s nothing left to grip. Doesn’t matter who brought the sandpaper. The result is the same: content with a pulse but no fingerprints. Alive enough to publish. Dead enough to forget by noon.

That’s not an insult. It’s a diagnosis. And the condition is treatable, if you’re willing to hear what the symptoms actually are.

The convergence nobody warned us about

The detectors aren’t hunting for AI. They’re hunting for smoothed. Low perplexity (predictable word choices). Low burstiness (sentences plodding along at the same length like a conveyor belt that doesn’t know what it’s manufacturing and doesn’t need to). Hedging. Tidy transitions. The surgical absence of anything weird, specific, or slightly embarrassing.

Guess what fifteen years of “tighten your prose” advice was actually training you to produce?

Every writing teacher who told you to “vary your sentences less dramatically” or “smooth your transitions” or “cut the personal tangent, it’s distracting” was unknowingly sculpting your writing into the exact statistical corridor that a trillion-parameter model wanders into by default. You and the robot had the same editor. You both did the homework. You both showed up to the recital in matching outfits. One of you is now being asked to prove they’re real.

(The other one doesn't care, because the other one doesn't have feelings. Or doubt. Or the bone-deep certainty that you peaked two posts ago and everything since has been a slow, polite decline.)

This is what ensloppification actually looks like from the inside. Not AI dragging human writing down. The algorithm did that years ago. SEO taught creators to write for robots before the robots could write for themselves. Trend-chasing taught everyone to publish the same topic on the same day with the same angle. Analytics dashboards rewarded sameness and called it “what’s working.” By the time a language model showed up and started hanging curtains, the corridor was standing room only. You were roommates before you ever met.

Now a reader looks at your best work and can’t tell which roommate wrote it.

That’s what “Which AI did you use?” really means. Not an accusation. A confession. And it should terrify every one of us who does the work for real.

Eight tells your best writing is flashing like a neon sign

I ran every piece I published in March through three different detectors and then compared them against my own manuscripts from a decade ago (pre-GPT, written in Google Docs with all the structural elegance of a drunk trying to parallel park). Then I did the same for five reader drafts that had been falsely flagged.

I expected some overlap. I did not expect to find six or seven of the same eight tells in every single false positive I examined. The specific cocktail varied. The hangover was identical.

These are the reasons someone looked at your best work and asked if a machine wrote it.

1. The transition tax.

However. Moreover. Furthermore. In addition. That said.

Any of these as the first word of a paragraph is an alarm bell ringing inside a building that’s already on fire. Humans almost never start a paragraph with “Moreover.” We just say the next thing. “Moreover” is what you write when you want to sound like you went to college but forgot which one.

2. The hedge sandwich.

“It’s worth noting that X can sometimes be Y, although this may vary depending on context.”

Every clause softens the one before it. By the time you reach the period, you haven't said anything. You've built a sentence the way a committee builds a mission statement: every word approved, no word necessary.

(The detectors love this. I mean really LOVE it. Hedge density is one of their strongest signals. Machines were trained to hedge so their corporate overlords wouldn’t get sued. You, presumably, are not getting sued today. Act like it.)

3. Sentence length stuck in the 12-to-18-word corridor.

This is the burstiness problem. If every sentence falls between twelve and eighteen words, you're not writing. You're paving a sidewalk. Flat. Even. Perfectly functional. Nobody has ever taken a photo of a sidewalk.

Break it.

Write a four-word sentence. Then write a thirty-eight-word one that does three things at once and earns its length by refusing to quit until it has said something the short ones couldn’t have reached on their best day with a running start.

4. Tricolons. Everywhere.

“It’s fast, clean, and effective.” “Precise, confident, and sharp.” “Bold, fresh, and innovative.”

AI loves a tricolon the way a twelve-year-old loves a fog machine. Three beats. Always three beats. Not because three is the right number. Because three is the number that lets a sentence stop trying. One adjective means you chose. Two means you’re comparing. Three means you gave up and called it a pattern.

If you’ve got more than two tricolons in a thousand words, you’re living in the uncanny valley and the rent just went up.
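For anyone who wants a number instead of a gut feeling, here is a minimal Python sketch of a tricolon counter. It is an illustration under loud assumptions: a "tricolon" is reduced to the crude pattern "word, word, and word" (Oxford comma optional), and the names `find_tricolons` and `tricolons_per_thousand` are made up for this example. It will also catch innocent grocery lists, so treat every hit as a suspect, not a conviction.

```python
import re

# Assumption: a tricolon is approximated as "word, word, and word".
# This also matches plain lists ("eggs, milk, and bread"), so review hits by hand.
TRICOLON = re.compile(r"\b[\w'’-]+, [\w'’-]+,? and [\w'’-]+\b", re.IGNORECASE)

def find_tricolons(text):
    """Return every substring that matches the crude three-beat pattern."""
    return TRICOLON.findall(text)

def tricolons_per_thousand(text):
    """Matches per 1,000 whitespace-separated words (0.0 for empty text)."""
    words = len(text.split())
    if words == 0:
        return 0.0
    return len(find_tricolons(text)) * 1000 / words

if __name__ == "__main__":
    sample = "It's fast, clean, and effective. Bold, fresh and innovative."
    for hit in find_tricolons(sample):
        print(hit)
```

Run it on a full draft and compare the rate against the two-per-thousand line above.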

5. The em dash pandemic.

I didn’t stop using em dashes because I wanted to. I stopped because AI made them radioactive.

The math explains why: the em dash is the least risky punctuation token a language model can produce. A semicolon demands precision. A period forces a full restart. The em dash replaces commas, colons, and parentheses interchangeably without tripping a single grammatical wire. It’s punctuation with no consequences. Of course the machine defaults to it.

And here’s what makes it a dead giveaway specifically on social media: there is no em dash on your keyboard. None. Producing one requires either a shortcut nobody remembers or a Google search nobody admits to. When someone’s tweet has three em dashes in it, they either have a very particular relationship with Alt+0151 or they didn’t type that sentence themselves.

6. Signposting that narrates its own structure.

“In this piece, I’ll walk through three common mistakes.” “As we’ve seen above.” “Let’s turn now to the second point.”

Humans writing in flow do not provide a guided tour of their own paragraph architecture. They just change direction and trust you to keep up. The signpost is a confession that you don’t trust your prose to carry itself. Which, if you’re signposting, you might be right about.

7. The abstracted noun phrase.

“The importance of consistency in one’s creative practice cannot be overstated.”

Versus:

“Show up every day. Even when it sucks. Especially then.”

You skimmed the first one. You read the second one. That’s the whole diagnosis. Abstraction is anesthesia. Your reader goes numb before the period and never knows why they stopped caring.

8. No specific, weird, slightly embarrassing details.

This is the big one. The one that separates your content from the machine’s content more than the other seven combined.

AI has no body. It has never smelled anything, touched anything, or been embarrassed by anything. So when your prose strips out the weird, the too-specific, the slightly-off, you end up matching the machine’s output. Not because you used AI. Because you removed everything AI can’t replicate.

I grew up in southern Idaho, a mile from a sugar beet factory that produced some of the finest sweetener in the Rocky Mountains. The process of making it smelled like a horse had died inside another, larger horse. Nobody described the school by its mascot. One smell overrode everything: “Oh, that’s the one that smells like shit.” Refined, beautiful output. A process that would make your eyes water.

AI cannot write that paragraph. Not because the language is complex. Because the memory requires a body that stood in a parking lot and breathed through its mouth. (I’ve written about this principle before as the you-shaped holes problem. The details AI can’t fabricate are the ones that prove you were there.) AI produces refined sugar. Odorless. No evidence anything raw was ever involved. When your prose reads the same way, it reads like output. Not writing. And that’s exactly the kind of prose that makes a stranger ask: “Which AI did you use?”

The fix is to stop deodorizing your drafts.

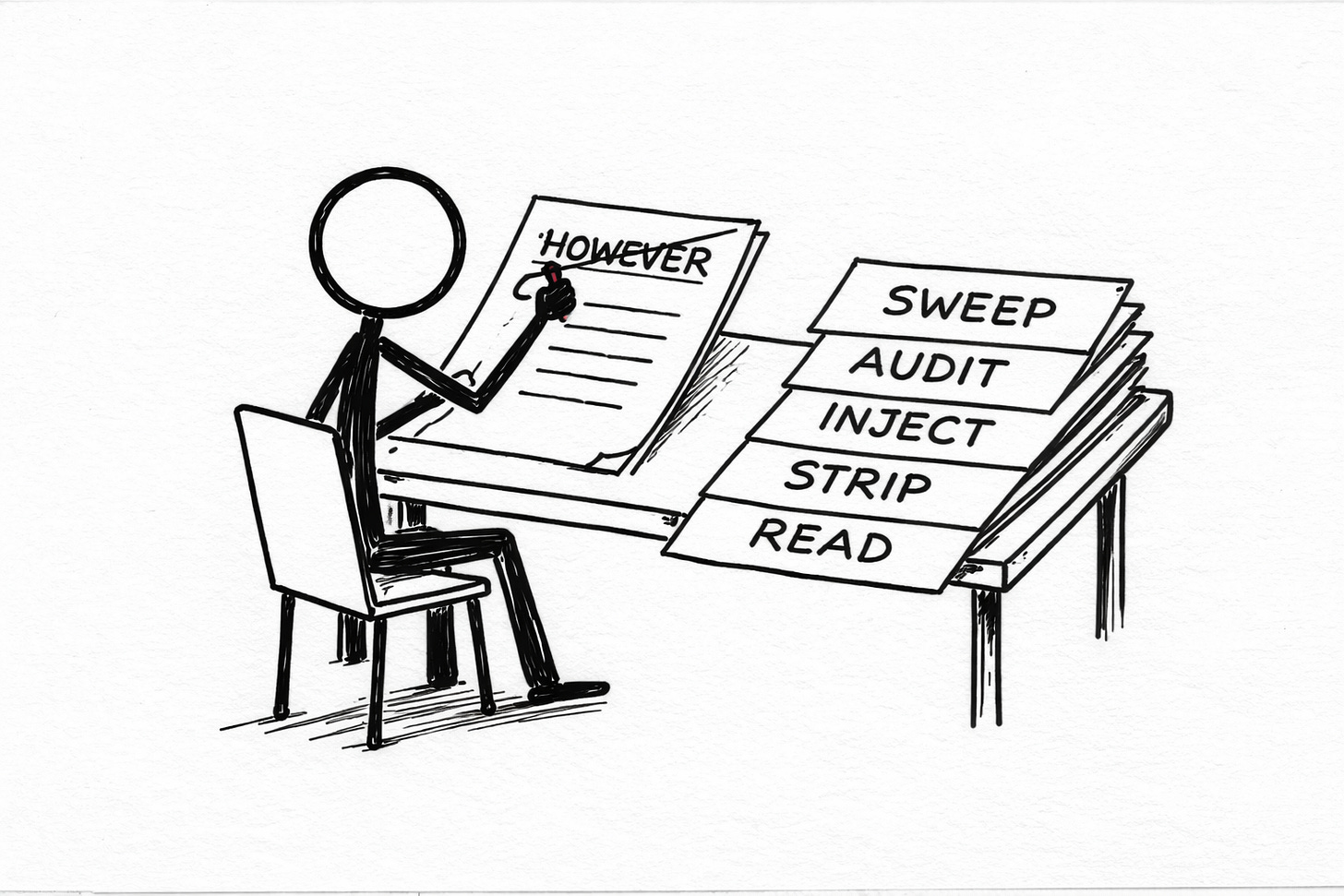

The fifteen-minute pre-publish pass

Here’s the actual workflow. This is what I run before anything leaves my drafts folder. Fifteen minutes on a 1,500-word piece. It has fixed every false positive I’ve thrown at it, which is more than I can say for most things I’ve built with my own hands.

(Looking at you, every empanada I've attempted since moving to South America. The flavor is there. The architecture is a war crime. Butters observes from the doorway with the quiet devastation of a loyal friend watching you make the same mistake for the ninth consecutive time.)

Minute 0-3: The word-frequency sweep.

Open Find. Search for each of these, one at a time: however, moreover, furthermore, additionally, notably, it’s worth noting, in essence, ultimately, crucial, essential, robust, landscape.

For each hit, ask one question: would I say this to someone holding a drink at a party? If no, delete it or replace it. Most of them can just be deleted. The sentence almost always survives, the way a house survives the removal of a decorative pillar that was never load-bearing in the first place.
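If running Find twelve times sounds like a chore, the sweep can be scripted. What follows is a rough Python sketch, not a required part of the pass: the word list is copied from the step above, while the function name `sweep` and the choice to treat curly and straight apostrophes as the same character are assumptions made for illustration. It only counts. The drink-at-a-party question is still yours to answer.

```python
import re
from collections import Counter

# The search list from the word-frequency sweep, verbatim.
FLAGGED = [
    "however", "moreover", "furthermore", "additionally", "notably",
    "it's worth noting", "in essence", "ultimately", "crucial",
    "essential", "robust", "landscape",
]

def sweep(text):
    """Count whole-word, case-insensitive hits for each flagged word or phrase."""
    # Assumption: curly apostrophes are normalized so "it’s" matches "it's".
    normalized = text.lower().replace("’", "'")
    counts = Counter()
    for phrase in FLAGGED:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        hits = len(re.findall(pattern, normalized))
        if hits:
            counts[phrase] = hits
    return counts

if __name__ == "__main__":
    draft = "However, it’s worth noting the landscape. Moreover, however."
    for phrase, hits in sweep(draft).most_common():
        print(phrase, hits)
```

Whole-word matching means "essentially" does not count as a hit for "essential", which keeps the list honest.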

Minute 3-6: The sentence-length audit.

Scroll through. Eyeball paragraph shapes. If three consecutive sentences look like the same length on the page, they are. I promise. Your eyes are not lying and neither is the detector.

Break one. Fragment another. Let one run so long it feels like it’s overstaying its welcome at a dinner party and knows it and doesn’t care because it has something to finish saying.

You’re looking for visual unevenness. Not metrical perfection. Prose should look like a city skyline, not a picket fence.
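Eyeballing works. For a second opinion, here is a small Python sketch that measures the same thing. Everything in it is an assumption for illustration: sentences are split naively on end punctuation (abbreviations will fool it), "the same length" is taken to mean within two words of each other, and the names `sentence_lengths`, `corridor_share`, and `flat_runs` are hypothetical.

```python
import re

def sentence_lengths(text):
    """Word count per sentence, using a naive split on ., !, or ? plus whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def corridor_share(lengths, low=12, high=18):
    """Fraction of sentences inside the 12-to-18-word corridor."""
    if not lengths:
        return 0.0
    inside = sum(1 for n in lengths if low <= n <= high)
    return inside / len(lengths)

def flat_runs(lengths, tolerance=2, run=3):
    """Start indexes of `run` consecutive sentences whose lengths span <= tolerance words.

    Assumption: a tolerance of 2 words stands in for "looks the same on the page".
    """
    starts = []
    for i in range(len(lengths) - run + 1):
        window = lengths[i:i + run]
        if max(window) - min(window) <= tolerance:
            starts.append(i)
    return starts

if __name__ == "__main__":
    draft = "Break it. Write a four-word sentence. Stop!"
    lengths = sentence_lengths(draft)
    print(lengths, corridor_share(lengths), flat_runs(lengths))
```

A high corridor share or a long list of flat runs is the picket fence. An empty list is the skyline.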

Minute 6-9: The specificity injection.

Find every abstract noun (“creativity,” “consistency,” “authenticity,” “practice”) and replace at least half with a concrete image.

“My creative practice” becomes “Tuesdays, 5 AM, the same chipped mug, Butters asleep on my foot like a four-pound paperweight with strong opinions about my typing posture.”

(Not every time. Half. But this is the single highest-return fix in the whole list, and it’s the one people skip because it requires thinking about what actually happened instead of what sounds like it should have happened. Those are different skills. The second one is what the machines are good at.)

Minute 9-12: The hedge strip.

Kill these: perhaps, maybe, arguably, in some cases, it could be argued, generally speaking, for the most part, to some extent, in a sense.

Not all of them. Most of them.

A hedged sentence is a fist that opens into a handshake mid-swing. Machines were trained to hedge because their creators were terrified of lawsuits. You are (probably) not being deposed this afternoon. Say the thing. Mean the thing. Let someone disagree with the thing. That’s called writing.

Minute 12-15: The read-aloud.

Read the whole piece out loud. Not in your head. Out loud. To your room. To your dog. To whatever deity you’ve been quietly negotiating with since you started writing for money.

If your mouth trips, the prose tripped first. If a sentence sounds like it belongs on a professional networking site used primarily by people who describe themselves as “passionate about synergy,” it reads like one too.

Your ear will catch the muzak before any detector does. Assuming you trust your ear. Which is the whole point of doing the work in the first place.

(The read-aloud is also the fastest way to find the sentence that made someone ask “Did you use AI?” It’s the one your mouth doesn’t want to say, because your mouth knows it didn’t come from you. Your mouth is smarter than your detector. Trust your mouth.)

What the detectors are actually doing to us

One more thing. This one doesn’t come with a tidy checklist.

The detectors are training us. Not on purpose. As a side effect, which is always how the worst things happen. Every writer who gets falsely flagged and then edits their prose to pass the detector is being slowly shaped by the detector’s model of what humans sound like. And that model is built from an adversarial arms race with language models that are themselves trying to sound like humans who were themselves shaped by the same writing advice that was already pushing everyone toward the same statistical corridor.

You are being trained by a machine that was trained by a machine that was trained on you.

(Read that again. I needed a full minute and a stiff drink the first time that sentence assembled itself in my head.)

The only way out is to write more like yourself. Not less. More fingerprints. More stench. More sentences that a cautious editor would circle in red and an MFA workshop would debate for forty minutes while missing the point entirely. The detectors are measuring perplexity. Be perplexing. Be the draft whose next word the model couldn’t have predicted if you gave it a million guesses and a head start.

That’s the answer to “Which AI did you use?” You don’t say no. You write so much like yourself that nobody asks.

🧉 "Which AI did you use?" If you've gotten this question about something you actually wrote by hand, I want to hear what you said. Especially if it wasn't polite.

Nobody should have to prove they’re real. But if you do, the detector can’t help you. Only your fingerprints can. The weird ones. The smelly ones. The ones you almost deleted because they felt too specific, too personal, too much. Those are the ones that prove someone was in the room. Leave them in.

Crafted with love (and AI),

Nick "Which AI Did You Use" Quick

PS... Want to make sure your writing never gets mistaken for a machine’s again? The Voiceprint Quick-Start Guide walks you through documenting the specific patterns that make you unflaggable. Free. Fifteen minutes. Zero “moreover”s.

PPS... If this piece made you re-read your last post through squinted, slightly paranoid eyes, hit the heart so Substack’s algorithm (which is itself being shaped by a detector being trained by a model being trained by you, because the circle never stops circling) shows it to someone who needs it. If you’ve got a better answer to “Did you use AI?” than mine, drop it in the comments.