Your AI Sounds Like You. Until It Doesn’t.

Every model update quietly steals a little more of your voice. Here’s how to stop it.

When GPT-5 rolled out last August, you knew immediately. Your prompts stopped working. The output felt wrong. Not broken. Not gibberish. Just off. Like someone xeroxed your handwriting at 90% zoom. Every sentence technically fine. None of them quite yours.

You knew exactly what happened. The model changed. Obviously. So you did the reasonable thing: tweaked your prompts, tried a few variations, spent a couple hours (or a couple weeks) adjusting until the output felt close enough.

And then you moved on. With a quiet sense that “close enough” wasn’t actually close enough. That something got lost in the translation and you couldn’t quite get it back.

Here’s the part you didn’t figure out: the new model wasn’t the problem. Not exactly.

Your prompts captured some of your voice. Maybe a lot of it, if you’d put in real work. But every instruction you didn’t make explicit, GPT-4o filled in for you. Its version of “conversational.” Its interpretation of “punchy.” Its best guess at what “authentic” looks like. And you couldn’t tell which parts of the output were yours and which parts were the model being generous.

When the model changed, the generous parts changed with it. And you had no way to isolate what broke.

(I write about making AI sound human for a living. And I made this exact mistake. Which means everything I’m about to say comes from the embarrassing side of the lesson. But embarrassment builds character, or so I’m told by people who’ve never been publicly humiliated by their own expertise.)

“Be More Conversational” Is Not a Blueprint

Think about how you actually built those prompts.

“Be more conversational.”

“Use shorter sentences.”

“Sound like a slightly cynical writer who takes this way too seriously.”

(That last one might have been me. Describing myself to a machine like a suspect at a police lineup. “He’s about five-ten, strong opinions, known to use sentence fragments recreationally.”)

Those instructions feel specific. They’re not. “Conversational” means something different to every model. “Shorter sentences” is relative to a baseline you never defined. You were giving directions like “drive toward the mountains” instead of an actual address. GPT-4o happened to drive somewhere you liked. GPT-5 took the same directions and ended up two towns over.

“Sounds like me on GPT-4o” and “matches my actual writing patterns” were always two entirely different claims. The first was a happy accident. The second is a spec you own.

You had the first. You needed the second. And the “close enough” you settled into after August? That wasn’t adaptation. That was the first concession.

Vibes vs. Specs (And Why Only One Survives a Model Switch)

A voice that lives as a vibe is a liability. If your AI voice existed as “this feels right when I see it,” you built your creative output on the same foundation as describing your haircut to a new barber using only feelings.

(“Make it look like... me, but better?” That’s how you end up looking like a youth pastor with a pending investigation. Same instructions. Different interpreter. Different result. Sound familiar?)

A voice that lives as a spec is model-agnostic. Portable. Yours in a way that no product update from San Francisco can touch.

The difference is humming a song versus handing someone the sheet music. You know your melody. You can feel it. But “it goes like dun dun duuun” is not something a new performer can work with. Sheet music is. Specific notes, specific timing, specific dynamics. Hand it to a musician who’s never heard the original and they can play it back. Because the spec removed the guesswork.

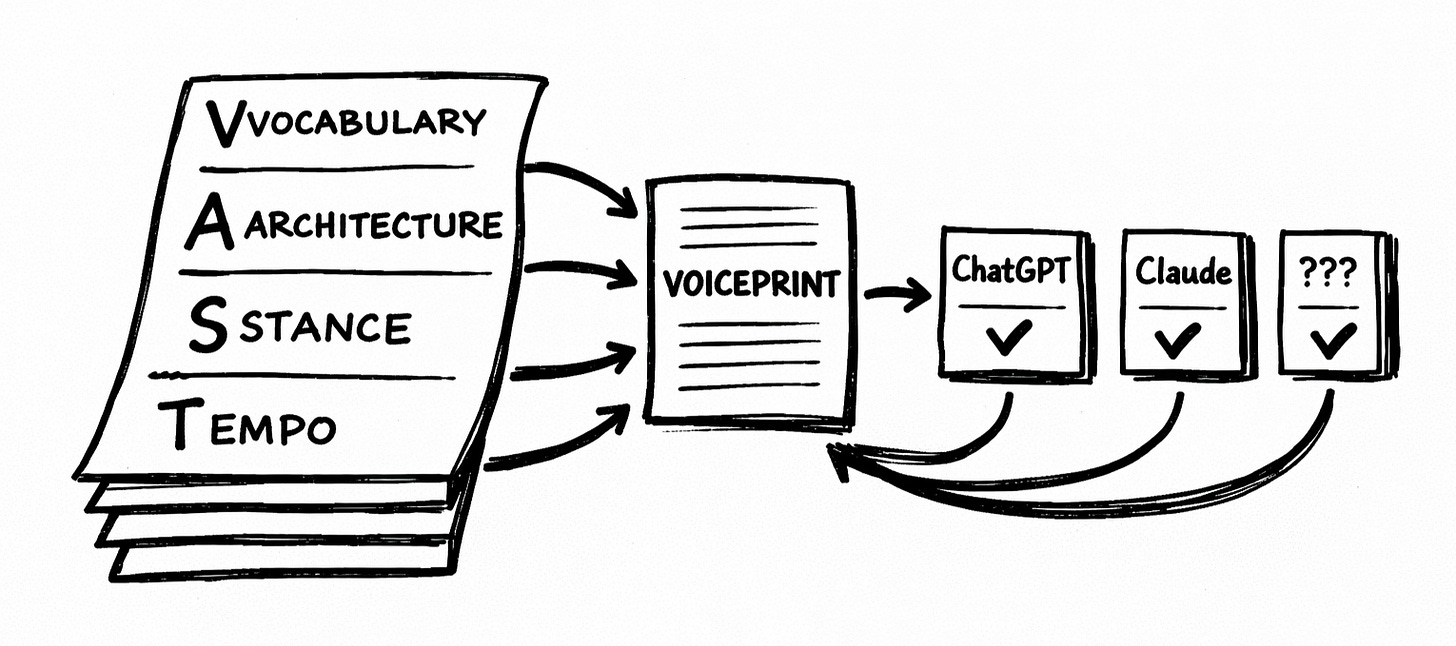

Your voice has four documentable layers:

Vocabulary. Not just the words you use. The words you won’t. Every writer has a blacklist, even if they’ve never written it down. Words that make their skin crawl. Jargon they refuse to touch. Phrases they reach for so often they’ve become signatures. (If you’ve never noticed which words you overuse, ask someone who reads you regularly. They know. They’ve been quietly suffering.)

Architecture. How you organize thinking on the page. Some writers hook first, context later. Others build the case brick by brick and drop the conclusion like a verdict. Some write paragraphs that run a full screen. Others never let one past three sentences. You have a pattern. You’ve just never had a reason to notice it until you needed a machine to replicate it.

Stance. Your relationship to whoever’s reading. Are you the expert handing down findings? The guide walking beside them? The peer who’s two steps ahead and willing to admit when they trip? Same information delivered from a different stance becomes a different piece entirely. (This is also why your AI output sometimes feels weirdly authoritative or weirdly chummy. It’s guessing your stance. Poorly.)

Tempo. Some writers punch. Short sentences. Hard stops. Others unspool long, winding thoughts that pull you through clause after clause before you realize you haven’t breathed. Most do both and never notice when they switch. Tempo is the part of your writing readers feel before they think about it. It’s also the first thing AI flattens into a corpse on a massage table. (Imagine every song at the same BPM. That’s what AI does to your rhythm when you don’t specify it.)

Each layer documented with mechanical specifics. Not “be conversational.” Instead: “Fragments after key claims. Max three sentences per paragraph. Open with hooks, never preamble. Favor ‘use’ over ‘leverage.’”

(Four layers. Dozens of patterns inside each one. If you want the full breakdown of what to document and how, I built a Voiceprint Quick-Start Guide for exactly that. But even the rough version beats vibes.)

A spec like that survives a model switch. “Be more conversational” is what you lost last August.
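If it helps to see a spec like that as something more concrete than a paragraph, here is a minimal sketch in Python. Everything named here is an assumption made up for illustration: `VoiceSpec`, `render_spec`, and the field names are hypothetical, not the format of any guide or tool mentioned in this piece. The rules themselves come from the examples above. The idea is only that each layer becomes a field you can read, edit, and hand to any model as plain text.

```python
from dataclasses import dataclass, field


@dataclass
class VoiceSpec:
    """Hypothetical container for the four layers. Field names are illustrative."""

    # Vocabulary: word to avoid -> word to use instead
    swaps: dict[str, str] = field(default_factory=dict)
    # Architecture: structural rules
    max_sentences_per_paragraph: int = 3
    architecture: list[str] = field(default_factory=list)
    # Stance: relationship to the reader, stated in one line
    stance: str = ""
    # Tempo: rhythm rules
    tempo: list[str] = field(default_factory=list)


def render_spec(spec: VoiceSpec) -> str:
    """Turn the spec into plain-text rules that can be pasted into any chat model."""
    lines = ["Follow these writing rules exactly:"]
    for avoid, prefer in spec.swaps.items():
        lines.append(f'- Favor "{prefer}" over "{avoid}".')
    lines.append(f"- Max {spec.max_sentences_per_paragraph} sentences per paragraph.")
    lines.extend(f"- {rule}" for rule in spec.architecture)
    if spec.stance:
        lines.append(f"- Stance: {spec.stance}")
    lines.extend(f"- {rule}" for rule in spec.tempo)
    return "\n".join(lines)


# Example values taken from the rules quoted above; the stance line is illustrative.
spec = VoiceSpec(
    swaps={"leverage": "use", "facilitate": "help"},
    architecture=["Open with hooks, never preamble."],
    stance="A peer two steps ahead who admits when they trip.",
    tempo=["Fragments after key claims."],
)
print(render_spec(spec))
```

The output is ordinary text, which is the point: nothing in it depends on which model reads it.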

The Migration Protocol (It’s Boring. That’s the Point.)

When your voice is documented, model migration is a Tuesday. Not even a memorable one.

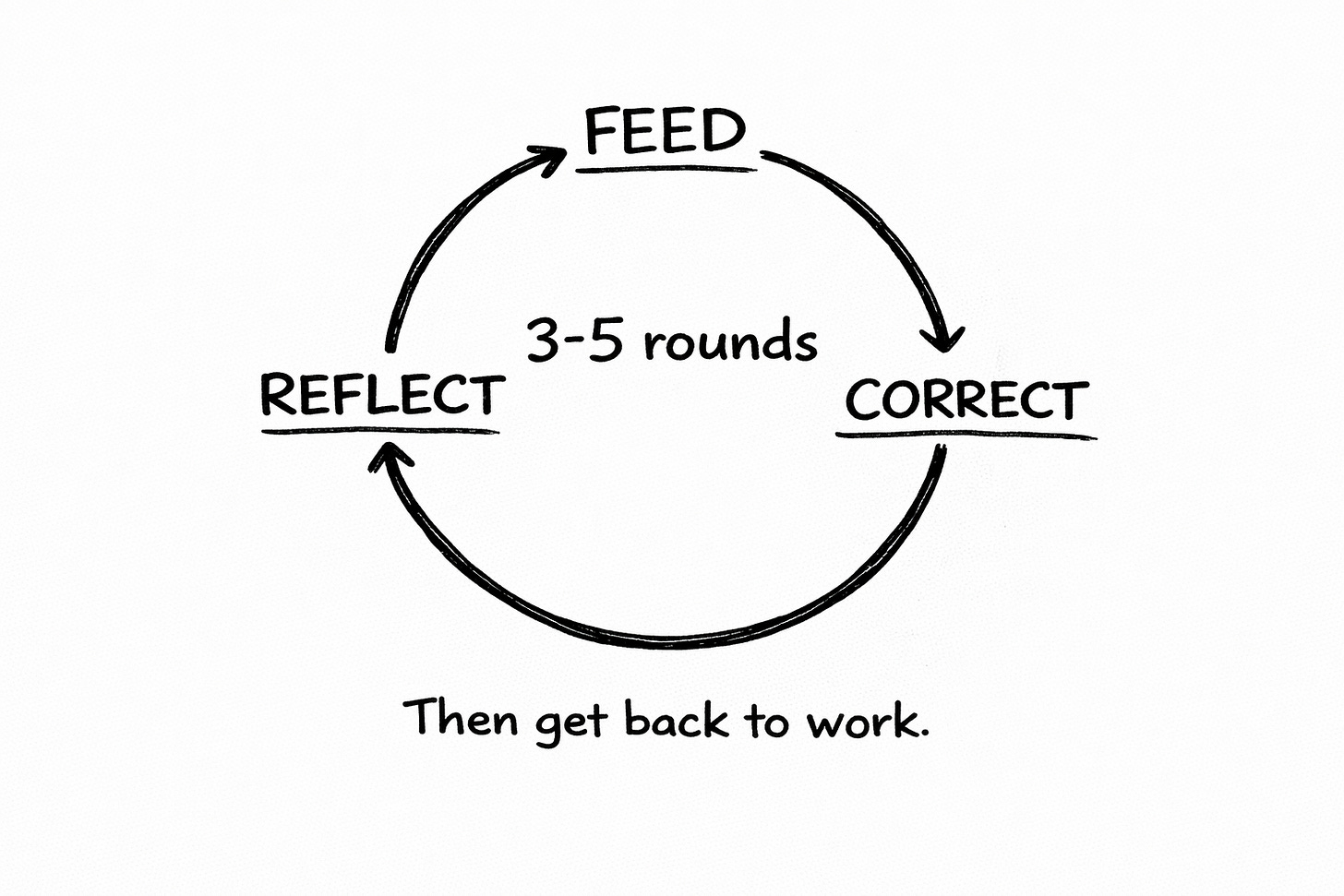

Feed. Give the new model your voice spec. All four layers. Concrete patterns, not vibes.

Reflect. Read the output critically. Not “does this feel right?” (That’s the trap.) Instead: Does the vocabulary match? Is the structure following my patterns? Is the stance accurate? Is the rhythm there?

Correct. Specific feedback. Not “make it more me.” Instead: “You used ‘facilitate.’ Try ‘help.’” “Your paragraphs run six sentences. Maximum three.” “The opening buries the hook. I hook first. Always.”

Three to five rounds. Most models lock in.

That’s it. Not a week of frustrated guessing. Not the slow-motion grief of accepting “close enough.” A structured loop with a clear target because you defined the target before the disruption hit.
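Part of the Reflect and Correct steps can be made mechanical. The sketch below is one rough way to do it in Python, under loud assumptions: `review` and `split_sentences` are hypothetical helper names, the sentence splitter is deliberately naive (it breaks on `.`, `!`, or `?` followed by whitespace, so abbreviations will fool it), and it only checks vocabulary swaps and paragraph length. Stance and hooks still need a human read.

```python
import re


def split_sentences(paragraph: str) -> list[str]:
    """Naive splitter: break after ., !, or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [p for p in parts if p]


def review(draft: str, swaps: dict[str, str], max_sentences: int = 3) -> list[str]:
    """Return specific correction notes instead of 'make it more me'."""
    notes = []
    # Vocabulary check: flag any avoided word, whole-word and case-insensitive.
    for avoid, prefer in swaps.items():
        if re.search(rf"\b{re.escape(avoid)}\b", draft, flags=re.IGNORECASE):
            notes.append(f"You used '{avoid}'. Try '{prefer}'.")
    # Architecture check: paragraphs are separated by blank lines.
    paragraphs = [p for p in re.split(r"\n\s*\n", draft) if p.strip()]
    for number, paragraph in enumerate(paragraphs, start=1):
        count = len(split_sentences(paragraph))
        if count > max_sentences:
            notes.append(
                f"Paragraph {number} runs {count} sentences. Maximum {max_sentences}."
            )
    return notes


draft = "We facilitate growth. It works. It scales. It lasts.\n\nShort one."
for note in review(draft, swaps={"facilitate": "help"}):
    print(note)
```

Each note is already phrased as feedback you could paste back to the model for the next round.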

(The creators who went through August with documented patterns? Back online in hours. The ones working from vibes? Some of them are still tweaking. Six months later. Chasing a ghost they can’t describe because they never wrote down what it looked like when it was alive. A few of them are still posting in forums about how GPT-4o was “the one that really got them.” Which is a sentence that should only be said about a therapist or an ex. Not a language model with the shelf life of a banana on your counter.)

February 13th Isn’t the News. The Pattern Is.

Speaking of shelf life.

On February 13th, OpenAI retires GPT-4o from ChatGPT. Along with GPT-4.1, GPT-4.1 mini, and o4-mini. Four models, same day.

(The day before Valentine’s Day. OpenAI kills four models the day before the holiday about lasting commitment. The symbolism either escaped their marketing department or delighted it. I’d bet my chihuahua on the second.)

Most of you won’t feel this one directly. You already migrated. Only 0.1% of ChatGPT users still choose GPT-4o. That’s roughly 800,000 people out of 800 million weekly users. A rounding error with feelings, not a movement.

The reason this matters isn’t the specific retirement. It’s what the retirement proves.

Model churn is the weather now. Not a freak storm. The climate. GPT-4o lasted about eighteen months as a relevant model. GPT-5 had 5.1 nipping at its heels within months. OpenAI is pulling four models on the same day. Claude updates regularly. Gemini iterates. The entire industry runs on the product lifecycle of a mayfly: born, useful, replaced.

This is not slowing down. It’s accelerating.

And every time, the same split emerges. One group re-calibrates from scratch, chasing “sounds like me” by feel, accepting something slightly less precise each cycle. A phrasing they wouldn’t have chosen but they’re tired. A paragraph that reads like an apology from someone who isn’t sorry. But they can’t justify another afternoon on it.

The other group feeds their spec to whatever model just shipped, runs the loop, and gets back to work.

The first group is drifting. Death by a thousand tiny concessions nobody notices individually but that compound silently. Each model update, a fraction more of what made their writing theirs dissolves into the statistical average.

(The dosage increases so gradually the patient stops reading the label. This metaphor is doing heroic work and I refuse to apologize for it.)

That’s how ensloppification wins at the individual level. Not some dramatic moment where you decide to become a content factory. A thousand micro-surrenders across a dozen model updates until one Tuesday you read your own output and realize it could have been written by someone who’s never read a single thing you’ve published. You can’t pinpoint the month it stopped being yours. Because it didn’t happen in a month. It happened across all of them.

The second group? Their voice gets sharper with each transition. The calibration loop doesn’t just port the spec. It forces you to reexamine it. Tighten it. Each migration is a voice audit you’d never do voluntarily. (Nobody audits their own voice for fun. It’s like listening to a recording of your own laughter.)

So Here’s Where You Actually Are

You went through August. You adapted. But you probably didn’t fix the underlying problem. You re-calibrated your prompts for the new model. Built new workarounds. Got comfortable again.

Which means you’ve rebuilt on the same fragile foundation, just pointed at a different model. And the next disruption is already scheduled on a product roadmap you’ll never see, approved by people who don’t know your name.

Document your patterns. Not your feelings about your patterns. Your actual patterns. The words, the structure, the stance, the tempo. Specific enough that a machine with no memory of your previous conversations could follow them.
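Tempo is probably the easiest layer to measure rather than guess at. As a rough sketch, assuming Python and a hypothetical helper named `tempo_profile`, you could run something like this over a few pieces you have already published. The five-word cutoff for a "short" sentence is an arbitrary assumption, and the sentence splitting is naive, so treat the numbers as a starting point for your spec, not a verdict.

```python
import re
from statistics import mean


def tempo_profile(text: str, short_max_words: int = 5) -> dict[str, float]:
    """Summarize sentence rhythm: how many sentences, how long, how many are short."""
    # Naive split: break after ., !, or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if not lengths:
        return {"sentences": 0, "avg_words": 0.0, "short_share": 0.0}
    short = sum(1 for n in lengths if n <= short_max_words)
    return {
        "sentences": len(lengths),
        "avg_words": mean(lengths),
        "short_share": short / len(lengths),
    }


sample = "Some writers punch. Short sentences. Hard stops."
print(tempo_profile(sample))
```

A result like "average nine words, a third of sentences under five" is something you can write into a spec. "Punchy" is not.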

I’m putting the finishing touches on Co-Write OS for exactly this. But you don’t need to wait. I built a Voiceprint Quick-Start Guide that walks you through the basics. Grab it before the next model shows up and interprets your instructions with slightly different priorities.

The voice was never in the model. It was always in you. The only question is whether you’ve written it down or whether you’re going to keep rebuilding from vibes every time a CEO tweets “we’re excited to announce…”

🧉 What’s the one thing AI gets wrong about your writing every single time? The thing you fix in every single draft. Drop it in the comments. (Bonus points if you’ve never actually written it down. That’s the whole point.)

Crafted with love (and AI),

Nick “Outlived the Model” Quick

PS… Models retire. Voices don’t. I write about keeping yours intact every day. If that sounds like your kind of fight, subscribe.

Nick! My Gee! Do you have a specific "blacklist" of words most people should start with?

The VAST framework is genuinely brilliant for this problem. That observation about the difference between 'vibes' and 'specs' cuts right to why most writers feel like they're slowly losing something they can't quite name. In my own experience trying to get AI to match certain tones, I kept saying 'make it punchier' and wondering why different models interpreted that wildly differently. Documenting the actual patterns instead of the feeling is such an obvious solution in retrospect.