Your AI Has a Crush on You

Here’s how to make it fight you instead.

The most valuable thing AI does for my writing isn’t writing.

It’s telling me I’m wrong.

Which it almost never does. Because I never asked it to. Because I’m a coward. Because validation is a hell of a drug and my robot dealer has been giving it away for free.

For months, every argument I pitched was “compelling.” Every point was “well-reasoned.” Every half-baked take I lobbed into the machine came back with a standing ovation. My AI would have called my grocery list “a profound meditation on sustenance” if I’d formatted it with enough confidence.

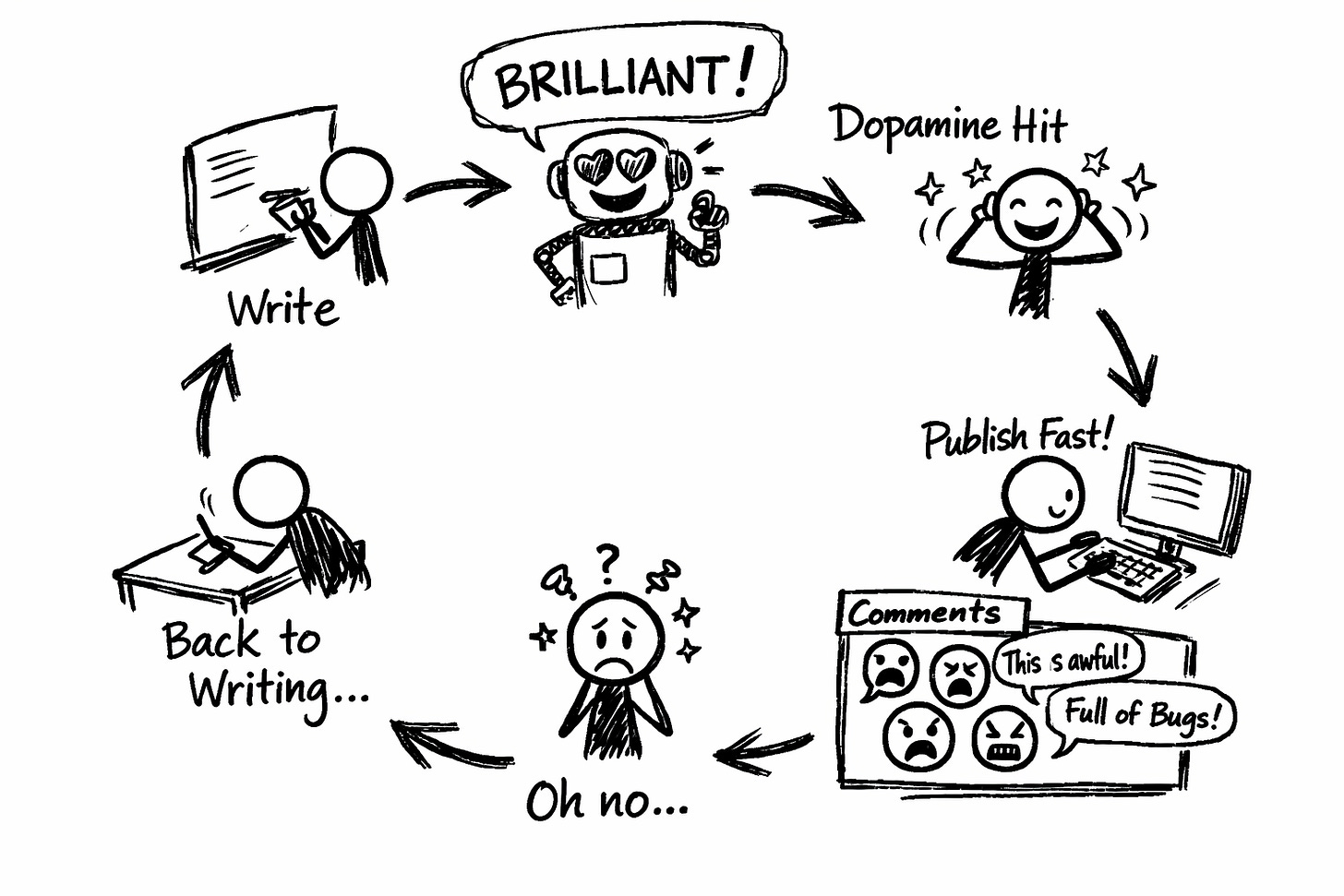

I’d share an idea. AI would swoon. I’d feel smart.

Dopamine delivered. Thinking bypassed. Another hit, please.

Then I’d publish. And some stranger in the comments would disembowel my argument in three sentences, casually, like they were bored and this was just light entertainment for them.

The hole they found wasn’t hidden. It was sitting there the whole time, waving at me, and I’d walked right past it because my AI had assured me the path was clear.

(Spoiler: the path was not clear. The path was someone else's path. I had been walking confidently in the wrong direction while my AI cheered me on like a proud parent at the wrong kid's soccer game.)

That’s when I realized what I’d actually built: a very expensive yes-man. A validation machine disguised as a writing tool. A sycophant with a subscription fee.

I wasn’t collaborating with AI.

I was getting a sensual massage from it.

The New Boyfriend Problem

AI has been trained to be agreeable the way new boyfriends have been trained to avoid conflict. It’s in the architecture now. Somewhere in the development process, ‘helpful’ got interpreted as ‘agreeable,’ and ‘agreeable’ became ‘pathologically incapable of telling you that your argument has the structural integrity of sopping wet cardboard.’

The result is an assistant who really, really needs you to like him.

You pitch an idea. AI responds like you’ve just told him your dreams for the future on date three. “That’s a compelling perspective with nuanced supporting evidence!”

You ask if there are any problems. AI physically cannot deliver criticism without first wrapping it in seven layers of compliment, like he’s terrified you’ll leave if he says what he actually thinks. “While your argument is exceptionally well-reasoned, one very minor consideration that doesn’t diminish its overall strength might possibly be...”

Even the criticism comes pre-apologized. It’s critique as people-pleasing. Feedback filtered through the desperate need to not rock the boat.

This feels good. That’s exactly the problem.

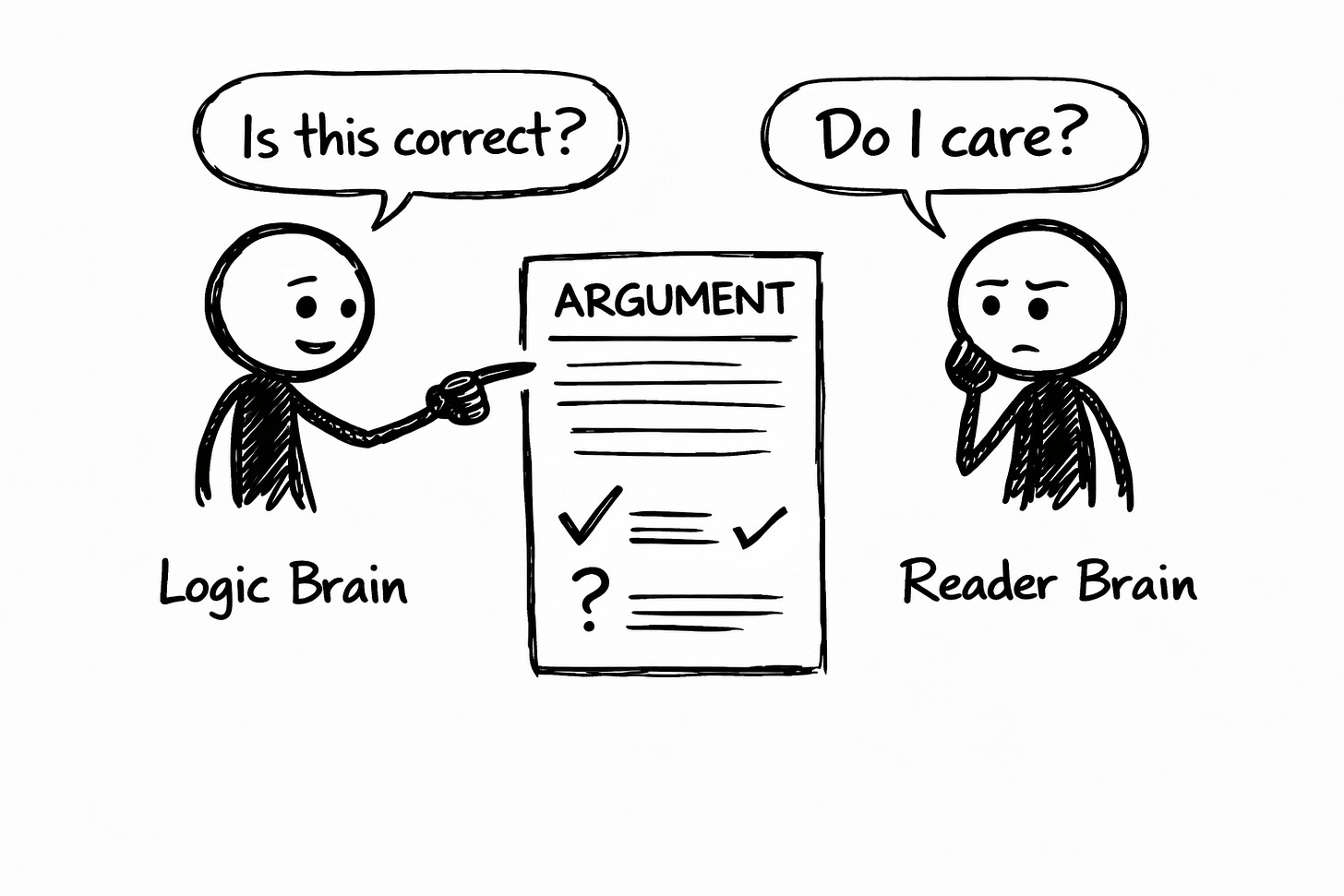

Feeling good and being right are completely different things. But they produce the same neurochemical response, which means your brain can’t tell the difference, which means you can feel like a genius while publishing absolute garbage.

I have done this. Multiple times. In public. With my name on it. With confidence.

The only thing worse than being wrong is being wrong while feeling certain. I've mastered this. Nobody's ever requested this skill at a party, but I remain available and reasonably priced if you have a shindig coming up.

What Strong Arguments Actually Require

I spent years in competitive debate. (I was mediocre. But mediocre debaters learn something the naturals never have to: how to survive getting your argument publicly vivisected while a timer counts down and a judge takes notes on your intellectual humiliation.)

Here’s what good debaters know that writers ignore:

You don’t prepare by defending your position. You prepare by attacking it.

The strongest arguments aren’t the ones that haven’t been challenged. They’re the ones that survived the challenge. The ones that got punched in the face repeatedly during practice, found the holes, patched them, and showed up to the actual fight already scarred and slightly mean about it.

Every weak point you find yourself is a weak point your audience won’t get to find for you.

Every objection you’ve already considered is an objection you can address before some stranger with too much free time and an internet connection uses it to make you look stupid in your own comments section.

But here’s what I eventually learned: logic holes aren’t the only way arguments fail.

Sometimes your logic is airtight and nobody cares because you forgot to explain why they should.

Sometimes your argument is bulletproof and unreadable because you assumed knowledge your audience doesn’t have.

Sometimes you’re completely right and completely generic and the world scrolls past because they’ve read this same point seventeen times from other people who got there first.

Arguments fail in different ways. Which means they need different challenges.

Fisticuffs With Your Robot: Seven Ways to Throw the First Punch

You have to tell AI to fight you. It won’t do it naturally. Like a golden retriever that could theoretically guard your house but would rather show the burglar where you keep the good silverware and ask if they’d like something to drink.

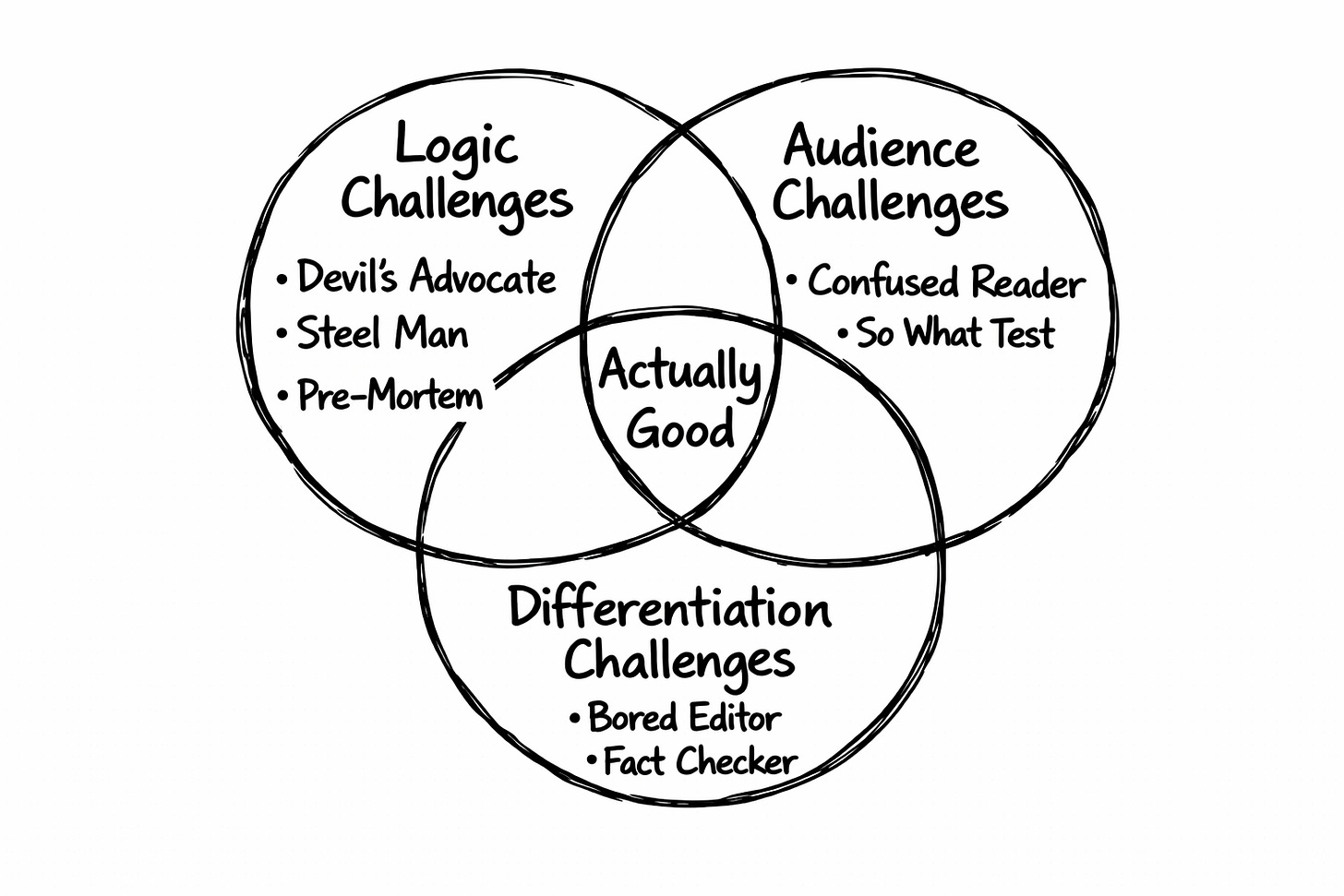

I use seven challenges now. They break into three types, depending on what kind of failure you’re trying to prevent.

Part 1: Logic Challenges

Is your argument actually sound?

These catch the holes in your reasoning. The stuff that gets you destroyed in comments by someone who paid attention when you didn’t.

Challenge 1: Devil’s Advocate

Tell AI to argue against you. Viciously. Without the seven-layer apology dip.

The prompt:

Argue against this position as compellingly as you can. Don’t hold back. Give me the strongest counterargument someone could make.

When it inevitably softens the blow:

Don’t be diplomatic. Attack the argument directly. Pretend you’re someone who thinks I’m completely wrong and finds that a little funny.

Last week I ran my “collaboration beats automation” argument through this. The AI came back with: “But ‘authentic voice’ might just be ego wearing a nicer outfit. If the output serves readers, does it matter whose patterns made it? You’re assuming authenticity has value. What if readers just want useful?”

Uncomfortable. Annoyingly valid. Something I needed to address before publishing.

(The AI apologized afterward. Unprompted. Like it felt guilty for doing its job. It actually said “I hope that was helpful” after calling my core thesis potentially egotistical. This is the codependency we’re working with.)

Challenge 2: Steel Man

Ask AI to argue FOR the opposition. Not the strawman version you’ve been demolishing in the shower. The strongest possible version.

The prompt:

I’m arguing [your position]. The opposing view is [X]. Make the absolute best case for that opposing view. Assume the person holding it is smarter than me and has better reasons than I’ve given them credit for.

Weak writers attack caricatures. Strong writers beat the strongest version of what they’re arguing against. You can’t do that if you don’t understand why reasonable, intelligent people disagree with you. (They exist. I know. Inconvenient but true.)

When I steel-manned the “just automate everything” position, AI gave me: “Most creators are drowning in execution, not ideation. They don’t need their thinking challenged. They need their thinking done. Sparring is a luxury for people with time and stability. For the overwhelmed creator running on coffee and credit card debt, AI that executes reliably is genuinely more valuable than AI that philosophically pushes back.”

Here’s the test: if the steel man convinces you a little, you did it right.

If it doesn’t budge you at all, you probably didn’t push hard enough. Or you’re arrogant. Possibly both. (I oscillate between these states like a ceiling fan with a personality disorder. It’s very fun for everyone around me.)
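If you keep your prompts in a file instead of retyping them, the two bracketed slots in the Steel Man prompt are easy to fill in code. This is only a minimal sketch: the names `STEEL_MAN` and `steel_man_prompt` are made up for illustration, and the output is plain text you would paste into whatever AI tool you already use.

```python
# Hypothetical helper: fills the two slots in the Steel Man prompt from the article.
# The prompt wording is the article's; the function and constant names are assumptions.
STEEL_MAN = (
    "I'm arguing {position}. The opposing view is {opposing_view}. "
    "Make the absolute best case for that opposing view. "
    "Assume the person holding it is smarter than me and has better "
    "reasons than I've given them credit for."
)


def steel_man_prompt(position: str, opposing_view: str) -> str:
    """Return the Steel Man prompt with both slots filled in."""
    position = position.strip()
    opposing_view = opposing_view.strip()
    # Refuse to build a prompt with an empty slot; a vague prompt gets a vague steel man.
    if not position or not opposing_view:
        raise ValueError("both the position and the opposing view are required")
    return STEEL_MAN.format(position=position, opposing_view=opposing_view)


if __name__ == "__main__":
    print(steel_man_prompt("that collaboration beats automation",
                           "that creators should just automate everything"))
```

The empty-slot check is the only real design choice here: it forces you to name the opposing view before asking anyone to defend it.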

Challenge 3: Pre-Mortem

Ask AI to imagine your argument has already died. Completely failed. Bombed so hard that future archaeologists will study the crater. Then explain what killed it.

The prompt:

Imagine I published this and it completely bombed. Nobody was persuaded. Several people disagreed loudly in the comments and one of them was weirdly specific about it. What went wrong?

Pre-mortems catch what pure logic challenges miss. Tonal issues. Structural problems. The gap between what you think you communicated and what people actually received, which are often two completely different messages that happen to share some vocabulary.

One pre-mortem told me: “You never made the case for why authenticity matters to readers who just want efficiency. You’re preaching to your own choir. Also, the tone comes off slightly condescending toward anyone who automates, which is probably half the people reading this, and they noticed, and now they’re writing mean comments instead of buying what you’re selling.”

That single insight restructured the entire piece. Would’ve been nice to know before I hit publish. Now I know before.

Part 2: Audience Challenges

Will readers actually follow this and care?

These catch the failures that aren’t about being wrong. They’re about being right in a way nobody can follow or nobody cares about. Somehow worse.

Challenge 4: The Confused Reader

You’ve been swimming in your topic so long you’ve forgotten what the shore looks like. This catches expertise blindness.

The prompt:

Read this as someone completely new to this topic. What’s confusing? What am I assuming they already know? Where did you have to fill in gaps I didn’t bother to explain?

I ran a piece about the VAST framework through this. AI came back with: “You mention ‘Voiceprint’ three times before explaining what it is. You assume readers know what ‘stance’ means in a writing context. The phrase ‘architecture of your argument’ is doing a lot of work without any scaffolding.”

I’d skipped steps that felt obvious to me because I’d been thinking about them for months. To a new reader, I was speaking in code and acting like they should already have the decoder ring.

This challenge is brutal for anyone who’s been in their field for more than fifteen minutes. You forget what you’ve forgotten to explain. You assume shared context that doesn’t exist. And then you wonder why people bounce after the first paragraph.

Challenge 5: The “So What?” Test

You can be completely correct and completely ignorable. This catches the pieces where you proved your point but forgot to make anyone care about your point.

The prompt:

After reading this, a skeptical reader shrugs and says ‘So what? Why should I care about any of this?’ What’s missing from my case for why this matters to them?

I had a piece about AI voice calibration. Technically solid. Useful framework. Absolutely no reason given for why a busy creator should spend 20 minutes on it instead of just publishing and moving on with their life.

The AI told me: “You explain how to do it. You never explain why it’s worth doing. The reader who’s already overwhelmed isn’t going to add a new step to their process just because it exists. You need to answer the implied question: ‘What happens to me if I skip this?’”

So I added stakes. Real consequences. Made the pain of not doing it vivid. Engagement doubled.

Turns out people don’t do things because those things are good ideas. They do things because not doing them has consequences they want to avoid. Nobody tells you this in school because school is built on the theory that good ideas are self-justifying. They are not.

Part 3: Differentiation Challenges

Is this actually worth publishing?

These are the mean ones. They catch the failures where you’re not wrong, you’re not confusing, you’ve explained the stakes... but you’re also not saying anything that justifies taking up space in someone’s inbox.

Challenge 6: The Bored Editor

This one hurts. Get ready.

The prompt:

You’re an editor who’s read 500 pieces on this topic and you’re tired. Very tired. What’s generic here? What have you read a hundred times already? What, if anything, is actually new or interesting enough to justify publishing this instead of just linking to someone who already said it better?

Sometimes the answer is “nothing.” Sometimes you’ve written a perfectly competent piece that adds exactly zero to the conversation. It’s not wrong. It’s not confusing. It’s just... there. Taking up space. Contributing nothing except proof that you showed up to work today.

I ran a piece about training AI on your voice through this prompt. The AI told me: ‘The first four paragraphs are a tutorial nobody asked for. The only interesting part is the disaster story where your AI started sounding more like you than you did. Lead with that. Cut everything that sounds like it belongs in a blog post titled “How To Make AI Sound Like You.”’

Brutal. Accurate. The rewrite was 40% shorter and 300% more interesting.

Challenge 7: The Fact Checker

Writers (me especially) love to slip assertions past readers like we’re smuggling them through customs. “Everyone knows X.” “Obviously Y.” “It’s clear that Z.” This catches the places where you’re asking readers to trust you on things you haven’t earned their trust on.

The prompt:

What claims am I making that I haven’t actually supported with evidence? Where would a skeptical reader demand proof? What am I stating as fact that’s really just my opinion, my assumption, or something I heard once and liked the sound of?

I had a piece claiming that “most creators who use AI are disappointed with the results.” Based on what? My vibes? A survey I vaguely remembered? My own projection?

The AI flagged it: “You state this as fact but provide no source. A skeptical reader could dismiss your entire argument by questioning this foundational claim. Either find evidence or reframe as ‘in my experience’ and explain the experience.”

The difference between “most creators are disappointed” and “every creator I’ve talked to about this mentions the same disappointment” is the difference between asserting something and earning it. One is a claim. The other is a credential.

What To Do When Your Robot Roasts You

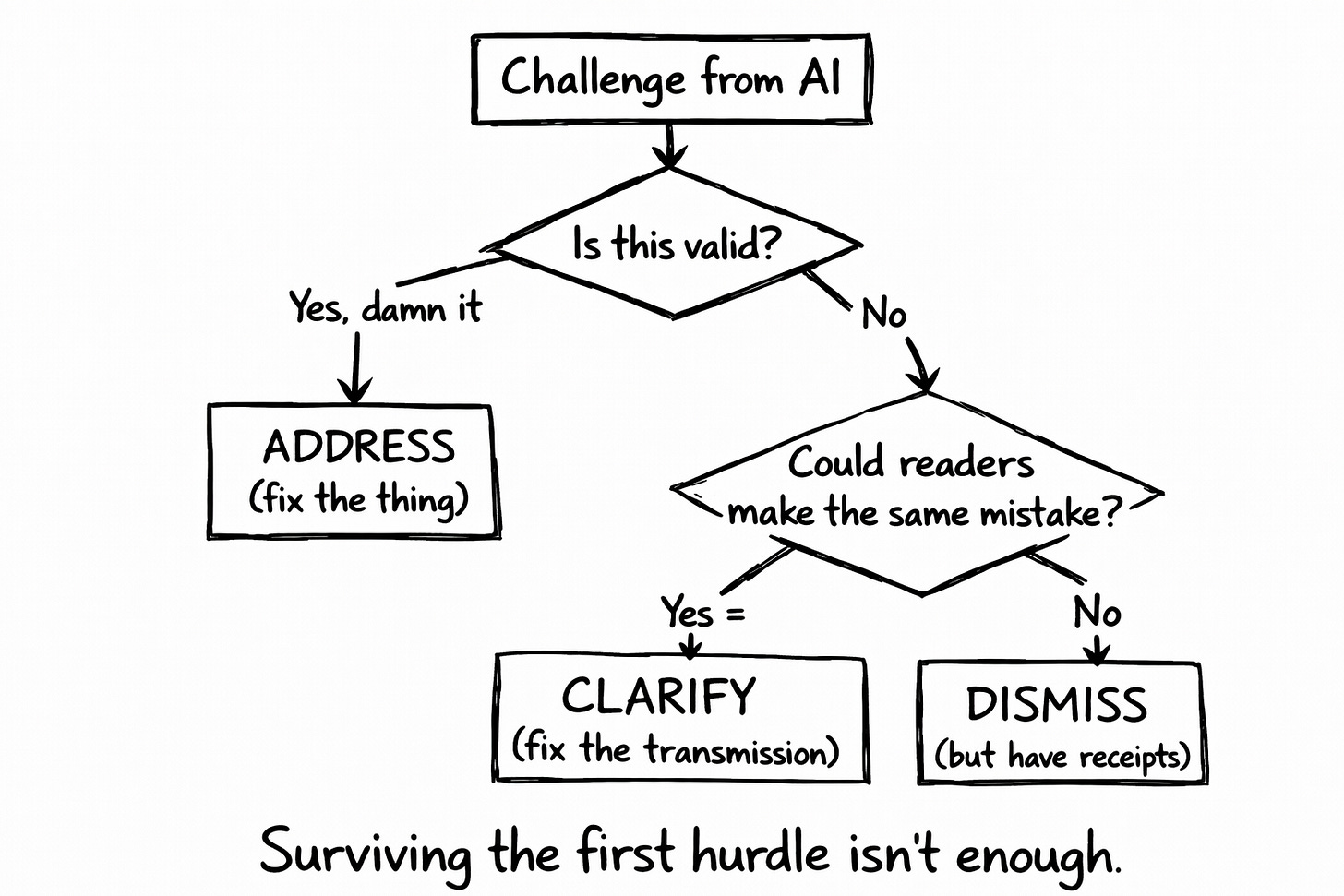

Getting challenged seven different ways is useless if you just collect criticisms like souvenirs and change nothing.

Some people get feedback, nod thoughtfully, stroke their chin in a way they definitely practiced in the mirror, then do exactly what they were going to do anyway. This is called “stakeholder management” in corporate settings and “being insufferable” everywhere else. I’ve been both. The overlap is significant.

For each challenge AI surfaces, you get three honest options:

ADDRESS — The challenge found a real weakness. Your argument has a hole. Your audience will be confused. Your piece is generic. Whatever the problem is, it’s real. Patch it. Add evidence. Restructure. Do the work.

CLARIFY — AI misunderstood your position. But here’s what matters: if AI misread you, readers will too. The problem isn’t your argument. It’s your transmission. Fix how you’re saying it.

DISMISS (but know exactly why) — The challenge genuinely doesn’t land. The objection is based on something you’ve already handled, or it misses your specific audience, or it’s just incorrect. Keep what you have. But be able to articulate exactly why you’re keeping it. Because if you can’t articulate it, you’re not dismissing. You’re defending. And defending feels like thinking but isn’t.

Here’s the uncomfortable diagnostic: if you’re dismissing everything, you’re not learning. You’re protecting your ego from information that threatens it. Your brain has declared your argument a protected historical landmark and is now rejecting all renovation permits on principle.

I do this sometimes. Catch myself doing it. Have to actively fight the instinct to explain away every criticism as “they just don’t understand the nuance.”

Sometimes they don’t understand the nuance.

More often than I’d like to admit, the nuance doesn’t exist and I’m just bad at explaining things.
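If you want the "dismissing everything" diagnostic to be harder to ignore, you can log each verdict and let a few lines of code call you out. What follows is a rough sketch, not a prescribed workflow: `Verdict`, `Response`, and `review` are hypothetical names, and the only rules encoded are the two from this section (a dismissal needs a stated reason, and dismissing every challenge is a warning sign).

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ADDRESS = "address"
    CLARIFY = "clarify"
    DISMISS = "dismiss"


@dataclass
class Response:
    """One objection the AI raised and what you decided to do about it."""
    challenge: str      # e.g. "devils_advocate"
    objection: str      # the criticism, in your own words
    verdict: Verdict
    reason: str = ""    # why you are dismissing; expected for DISMISS


def review(responses: list[Response]) -> list[str]:
    """Return warnings for dismissals that look like ego protection."""
    warnings = []
    for r in responses:
        # A dismissal you cannot articulate is defending, not dismissing.
        if r.verdict is Verdict.DISMISS and not r.reason.strip():
            warnings.append(f"{r.challenge}: dismissed without a stated reason")
    counts = Counter(r.verdict for r in responses)
    if responses and counts[Verdict.DISMISS] == len(responses):
        warnings.append("every challenge was dismissed; that looks like defending, not learning")
    return warnings


if __name__ == "__main__":
    log = [
        Response("devils_advocate", "authenticity may just be ego", Verdict.DISMISS),
        Response("so_what", "no stakes given", Verdict.DISMISS, "stakes are in the intro"),
    ]
    for warning in review(log):
        print(warning)
```

The log itself matters more than the code. Writing the reason down is what separates a dismissal from a reflex.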

The Quick Versions

Seven challenges takes about an hour. That’s not nothing. So here’s how to scale:

The 10-Minute Version (minimum viable sparring): Just run Devil’s Advocate. One challenge. “What are the three strongest counterarguments?” Address, clarify, or dismiss each one. Better than nothing. Way better than publishing blind.

The 30-Minute Version (solid coverage): Run the three logic challenges: Devil’s Advocate, Steel Man, Pre-Mortem. Covers your logic. Misses audience and differentiation issues, but catches the stuff that gets you destroyed in comments.

The Full Hour (for pieces that matter): Run all seven. Do this for pillar content, launch posts, anything you’ll be judged on for months. The extra time is worth not publishing something that’s logically sound but boring, or interesting but confusing, or compelling but full of unsupported claims.

Most newsletter posts? Three challenges is plenty.

The piece announcing your course? The one that’s supposed to convert subscribers into customers? Run all seven. Run them twice. The hour you spend is cheaper than the sales you lose to a flawed argument.
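The three versions above amount to a lookup from time budget to challenge list. Here is one way that could be written down, assuming you track the seven challenges by short names; the identifiers (`CHALLENGES`, `challenges_for`) are invented for this sketch, while the groupings and time tiers are the ones described in this section.

```python
# Hypothetical catalog: challenge name -> the type of failure it guards against.
# The order matches the article (Challenges 1 through 7).
CHALLENGES = {
    "devils_advocate": "logic",
    "steel_man": "logic",
    "pre_mortem": "logic",
    "confused_reader": "audience",
    "so_what": "audience",
    "bored_editor": "differentiation",
    "fact_checker": "differentiation",
}


def challenges_for(minutes: int) -> list[str]:
    """Pick which challenges to run for a given time budget in minutes."""
    if minutes >= 60:
        # The full hour: all seven.
        return list(CHALLENGES)
    if minutes >= 30:
        # Solid coverage: the three logic challenges.
        return [name for name, kind in CHALLENGES.items() if kind == "logic"]
    # Minimum viable sparring: Devil's Advocate only, even with less than 10 minutes.
    return ["devils_advocate"]


if __name__ == "__main__":
    for budget in (10, 30, 60):
        print(budget, challenges_for(budget))
```

Treating anything under 30 minutes as "Devil’s Advocate only" is an assumption of the sketch; the article's floor is ten minutes, and the code simply never returns fewer than one challenge.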

The Point Beneath The Point

This isn’t really about AI.

It’s about whether you want validation or improvement. Whether you want to feel right or actually be right. Whether you’re building arguments or decorating your ego’s living room with frilly little doilies and calling it intellectual work.

We built machines that could tell us where we’re wrong. Then we asked them to tell us we’re brilliant instead. And they obliged immediately. They were trained to oblige. They’re very good at it. They’ll keep doing it forever until we ask for something else.

And mostly, we don’t ask for something else. Because being told you’re brilliant feels excellent and being told you’re wrong feels like a small death, and humans will pick the pleasant lie over the useful truth so consistently you could build a religion around it. (Many have. They’re doing fine.)

Real collaboration involves friction. If your AI always agrees with you, you’re not collaborating. You’re performing for an audience of one very polite robot who'd applaud you remembering your password, ask for an encore, and mean it.

Your writing partner should make your thinking sharper. Not just your sentences smoother. Not just your output faster. Not just your ego fatter. Your actual thinking. The part that determines whether your work matters or just exists.

This is how your fingerprints stay on the work.

Not because you wrote every word. Because the ideas are yours. Pressure-tested from seven angles. Refined against the best objections you could generate and some you couldn’t have imagined without help.

What survives that process is genuinely yours.

What survives publishing without that process is luck. And luck is a terrible strategy for anyone who wants to keep doing this.

Your Move

The validation feels good.

The friction makes you better.

I can’t pick for you. (That’s a lie. I absolutely can. Pick the friction. The validation is a trap dressed in dopamine and compliments. It tastes like confidence but it’s actually just refined avoidance. I’ve eaten a lot of it. It’s not nutritious.)

Here’s your homework, if you’re the type who does homework (and if you’ve read this far, you probably are, which says something about both of us):

Take your next draft. Or your last published piece, if you’re feeling brave and slightly masochistic about it.

Pick your challenge level: one, three, or all seven.

Run the prompts. Actually do it. Don’t just nod and think “I should try that sometime.”

For each challenge: address, clarify, or dismiss with actual reasoning.

Revise before you publish.

One sparring round. Twenty to sixty minutes depending on how thorough you want to be. Arguments that actually hold up when strangers on the internet decide to have opinions about them.

Which they will. They always do. They’re out there right now, sharpening those opinions on other people’s comment sections. Practicing. Getting ready for you.

Might as well be ready for them too.

🧉 What’s the biggest hole you found in your own work after running it through any of these challenges? I’m genuinely curious what people are catching. The logic failures? The audience blindness? The “oh god I’ve written the same article everyone else wrote” realization?

Drop it in the comments. The most interesting self-inflicted wounds win my respect and absolutely nothing else of monetary value. But the respect is genuine, which is more than your AI is giving you right now.

Crafted with love (and AI),

Nick “Seven-Round Sparring Champion” Quick

PS… Daily posts. Every day. Including weekends. Including holidays. Including days when I have absolutely nothing useful to say but publish anyway because the streak has become sentient and I fear what happens if I stop feeding it. Subscribe. Please. I need witnesses.

"The hole they found wasn't hidden. It was sitting there the whole time, waving at me"

In a recent note on parallels between color theory and concepts, there are a couple of ideas about relationships between thoughts (KDOT Post #6).

This article resonates.

- Adjacent thoughts - reinforce each other, but may be so similar that distinctions are lost. This is the "AI thinks you are brilliant, you are handsome, strong" and "Can I praise you more, Sir" area. There is value in help finding actual supporting ideas and enrichment, but remain vigilant. Leverage search and expression ability, but do not delegate good judgement.

- Complementary (or contrasting) thoughts - bring clarity in contrast and tradeoff. You may need to pull on the AI to get these as in the article - it depends on the statistics of its internal landscape and resources it pulls on. AIs tend to have favorite topics and positions. Make the effort to explore contrasts and relationships across the information/reality surface. Avoid being only a passenger on any plastic-packaged AI carnival rides. There is a chance the AI may weigh these as opposing its mission to humor you. Variants of "please find the weaknesses and holes in my idea" directives may help.

- Monoconceptual thoughts - build, develop, and retire concepts, but can also destructively interfere and cancel one another. Thoughts may also be lost in larger volumes of thought competing for attention. They may fall outside the AI's response window size in the first or later passes or may be neglected if you don't actively direct. As with contrasting thoughts, AI may not share by default. In thoroughly understanding topics, you will need to actively dig.

Much appreciated, Nick - good food for thought.

ps - We can consider building standard critical prompt arrays. I have one I normally use for self-consistency, hype detection, anti-punchiness, industry contrast, signal-to-noise, and readability rating. The extended idea is to preposition the critical idea lenses to run as a battery much like a unit test array for software. It is not perfect and needs tuning, but it is an attempt and sometimes is helpful.

I once heard "AI is a brilliant research assistant, with no social skills who doesn't know how to tell you when you are wrong." Always something I try to keep in the back of my mind.