Your AI Doesn’t Need More Context. It Needs Better Context.

The creators getting the best AI output aren’t the ones with the biggest context windows. They’re the ones who know what to leave out.

The current meta for AI collaboration is “throw everything at it.”

Full books. Three-hour video transcripts. That Twitter thread you saved six months ago and never actually read. Seventeen browser tabs’ worth of “research” that’s really just organized procrastination with better personal branding.

The logic makes sense on paper. Context windows are getting massive. Models can process entire codebases now. So obviously the move is to shovel in as much raw material as possible and let the machine sort it out.

(This is also the logic behind swiping right on everyone. Theoretically increases your odds. Practically guarantees you end up on a date with someone who lists “fluent in sarcasm” as a personality trait.)

The entire approach is backwards.

The volume trap

More context isn’t better context. It’s often just more noise for the model to wade through while trying to figure out what you actually want.

When you dump a full book, four podcast transcripts, and your unorganized Notion database into a prompt, you’re not giving AI more to work with. You’re giving it more to get confused by. You’re substituting volume of input for clarity of intent.

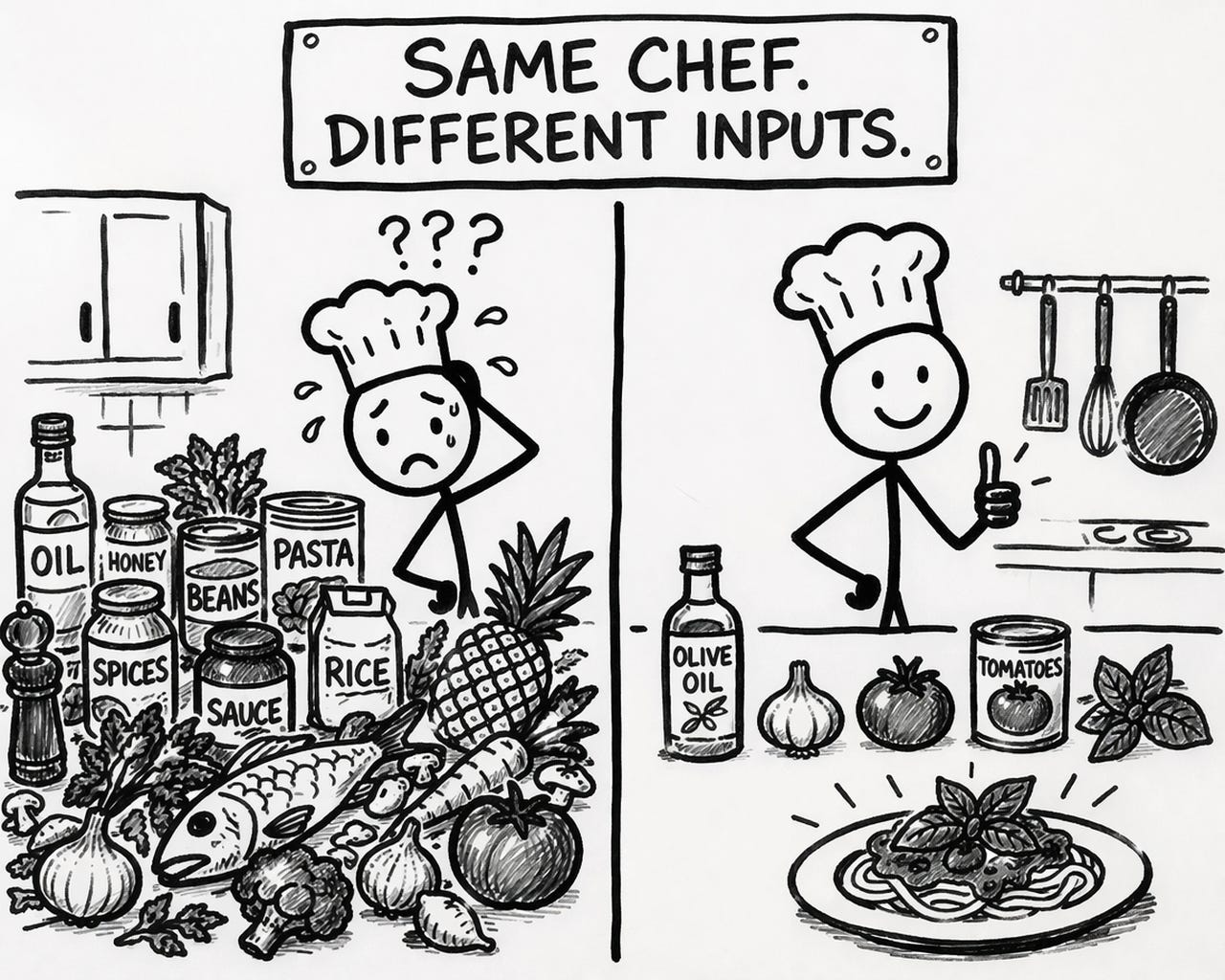

Imagine walking into a restaurant, dumping a grocery bag on the table, and telling the chef “you figure it out.” That’s what most people do with their AI prompts. And then they’re surprised when the output tastes like airline chicken.

The people feeding AI less are getting more

The people getting the best AI output? They feed it less. But what they feed it is precise.

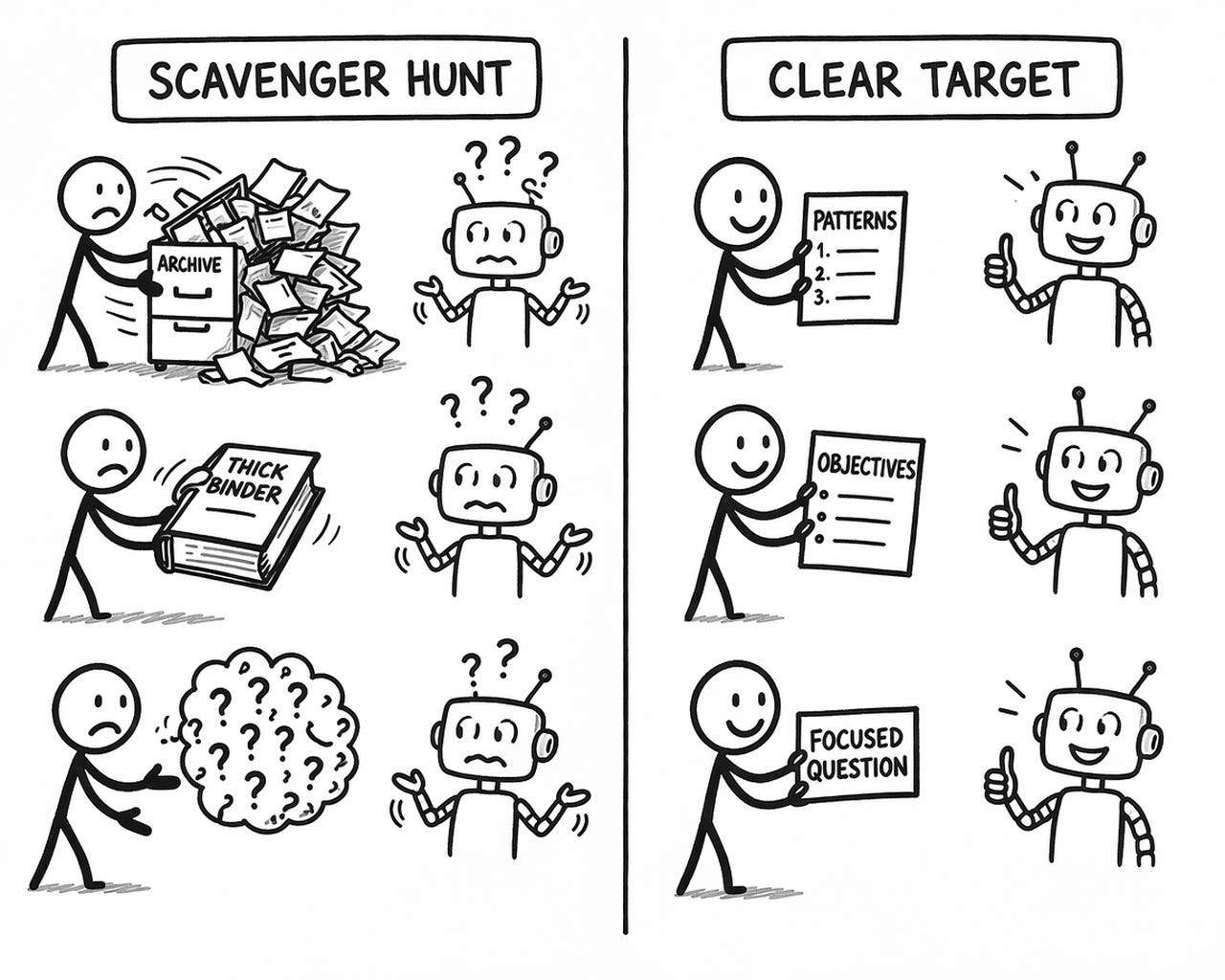

A writer hands over documented patterns instead of their entire blog archive. A strategist provides three specific objectives instead of a 40-page business plan. A researcher gives a focused question with clear parameters instead of “tell me everything about X.”

Same principle across every use case: give AI a clear target, not a scavenger hunt.

Why this matters more as models improve

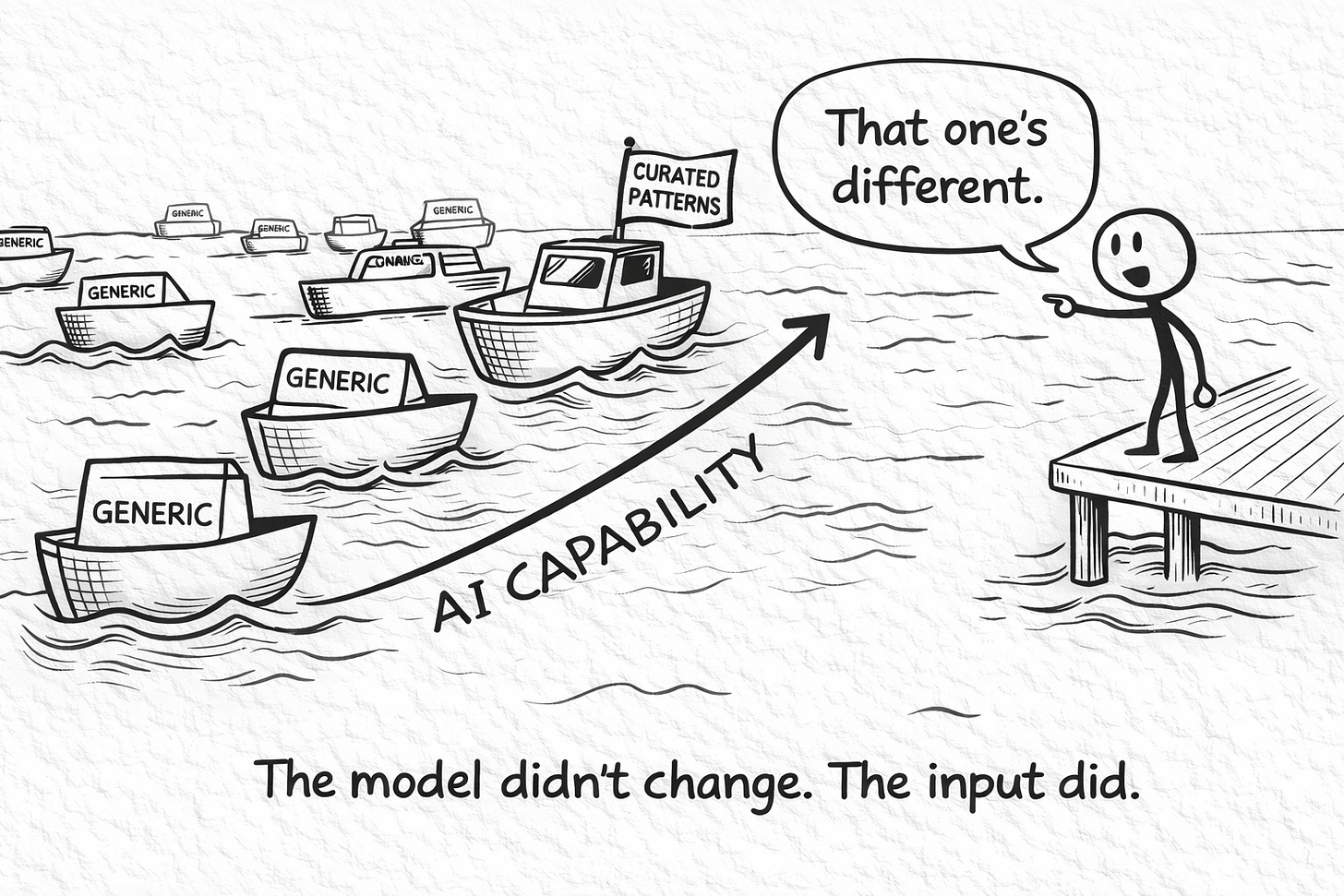

Models are getting better at processing massive inputs. Nobody’s arguing that. They’ll keep getting better. And that’s exactly why this matters more, not less.

Because a more powerful AI with garbage instructions doesn’t produce better output. It produces more confident garbage. Faster garbage. Garbage with better sentence structure and a LinkedIn-ready tone. (Congratulations. Your mediocrity now scales.)

“Here’s everything, figure it out” sounds efficient. It’s actually the sloppiest possible move. You’re asking the model to make every decision you didn’t want to make: what matters, what doesn’t, what tone to use, what to emphasize, what to ignore. And the model will happily make all those decisions for you. Congratulations. You’ve given a machine with no taste full creative control over your work and called it progress.

The result is technically writing the way a stock photo is technically a photograph. Everything’s in frame. Nothing’s real. You can’t prove it’s bad. You just can’t remember it five minutes later.

Here’s what’s actually happening as models improve: the floor is rising. Average output is getting better. More polished. Harder to distinguish from something a human who gives a damn actually produced. Which means the only way to stay above the rising floor is to be more specific about what you want, not less. More curated. More intentional about what goes in and what stays out.

Curation isn’t a nice-to-have anymore. It’s the whole skill. And almost nobody is treating it like one.

Try this before your next prompt

Next time you’re about to dump a massive pile of context into an AI prompt, stop. Three questions. That’s all.

What am I actually trying to get? Be embarrassingly specific. “A blog post” is not specific. “An 800-word breakdown of X for an audience that already understands Y, structured as problem-then-solution, ending with a concrete next step” is specific. If your brief sounds like a wish, you’ll get a wish’s worth of output.

What does “done well” look like here? Describe the finished product like you’re briefing a contractor, not making a wish. Length, depth, audience, purpose. The model will fill every blank you leave. And it fills blanks the way insurance companies write copy: safe, thorough, and completely devoid of a pulse.

What’s off limits? The constraints matter more than the instructions. Telling AI what NOT to do is how you keep your output from drifting toward the same sanitized, hedge-everything, offend-nobody sludge that every other unconstrained prompt produces.

Three questions. If you can answer them, you probably need 500 words of context, not 50,000.
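If you build prompts in code rather than in a chat box, the three questions can be sketched as a tiny brief template. This is one possible shape, not a prescribed method: `Brief`, `build_prompt`, and the `max_context_words` budget are hypothetical names made up for illustration, and the 500-word default is just the rough figure from above, not a measured threshold.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """The three questions, answered before any context gets attached."""
    goal: str        # What am I actually trying to get?
    done_well: str   # What does "done well" look like here?
    off_limits: list[str] = field(default_factory=list)  # What's off limits?


def build_prompt(brief: Brief, context: str, max_context_words: int = 500) -> str:
    """Assemble a prompt and reject context that blows past the word budget.

    The budget is an illustrative assumption: it forces curation before
    the model ever sees the material.
    """
    word_count = len(context.split())
    if word_count > max_context_words:
        raise ValueError(
            f"Context is {word_count} words; curate it down to "
            f"{max_context_words} or fewer."
        )
    constraints = "\n".join(f"- {rule}" for rule in brief.off_limits)
    if not constraints:
        constraints = "- (none given)"
    return (
        f"Goal: {brief.goal}\n\n"
        f"Done well means: {brief.done_well}\n\n"
        f"Off limits:\n{constraints}\n\n"
        f"Context:\n{context}"
    )


# Example usage with placeholder values.
brief = Brief(
    goal="An 800-word breakdown of X for readers who already understand Y",
    done_well="Problem-then-solution structure, ending with a concrete next step",
    off_limits=["No hedging every claim", "No generic intro paragraph"],
)
prompt = build_prompt(brief, context="Three documented patterns from past posts.")
```

The point of the hard failure on oversized context is the same as the point of the three questions: the decision about what matters gets made by you, before the prompt exists, instead of by the model after the fact.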

🧉 Be honest: when you work with AI, are you a kitchen-sinker or a curator? And if you’re a kitchen-sinker, has it actually been working? Drop it in the comments.

Crafted with love (and AI),

Nick “Curator of Curated Curation” Quick

PS... Speaking of feeding AI the right patterns instead of everything: I spoke on the “AI Writing Without the Slop” panel at Cozora’s AI Virtual Summit today alongside Daniel Nest. If you missed it live, the recordings are available with the premium pass. CWAI.to/substacksummit

PPS... If you want to see what “curated context” looks like in practice, the Voiceprint Quick-Start Guide walks you through building your own. It’s free.

Totally hear you. I have done that. I am _doing_ that. I _am_ suffering for it. But, I _think_ the mysterious "vector store" (sounds so cool) is supposed to be the (tech) answer. Relying on "reading the context" or "determinative searches" is not sufficient for the AI to "get the picture". I think. Maybe.

"I think. Maybe." is basically the unofficial motto of the entire RAG ecosystem.

You're not wrong that retrieval is part of the answer. The part most people skip is that garbage in a vector store is still garbage. Just semantically indexed garbage.