Your 500-Word Prompt Is The Problem

Why elaborate prompts produce worse AI output—and the 4-element fix

487 words.

That’s how long my prompt was. For a cold-damned-email template.

I was building my outreach system—my email automation that would hit 200+ inboxes on autopilot. First impressions at scale. So I stuffed everything into the prompt. Audience context. Tone guidelines. Vocabulary patterns. Banned phrases. Structural preferences. A complete map of how to capture interest, organized into neat little sections.

The AI’s response? Generic garbage. Hedged statements. Corporate drivel. It read like every other automated cold email I banish to the spam folder.

So I deleted the prompt. All 487 words. Started over with four sentences.

The output? Actually usable. Something I’d proudly send with my name on it. (Which is fortunate, because I’m in the habit of dropping my name at the end of emails.)

The Complexity Trap Nobody Warns You About

We’ve been conditioned to believe detailed = better. More context = more accurate. Comprehensive instructions = precise execution.

This works with humans. Briefing a freelance writer? Yes. Give them everything. Background docs. Brand guidelines. Three rounds of examples. A blood sample if they’ll take it.

But AI doesn’t process instructions like humans do.

When you give an AI system 15 constraints in a single request, it has to prioritize. And it picks wrong. Constantly.

That carefully-worded instruction about avoiding clichés? Deprioritized in favor of your formatting rules.

Your tone examples? Buried under the avalanche of topics you demanded it cover.

The model does what models do. It tries to satisfy everything and ends up satisfying nothing particularly well. (Like me at a Vegas buffet. Too many options. Poor decisions. Regret.)

A Critical Distinction (Before We Go Further)

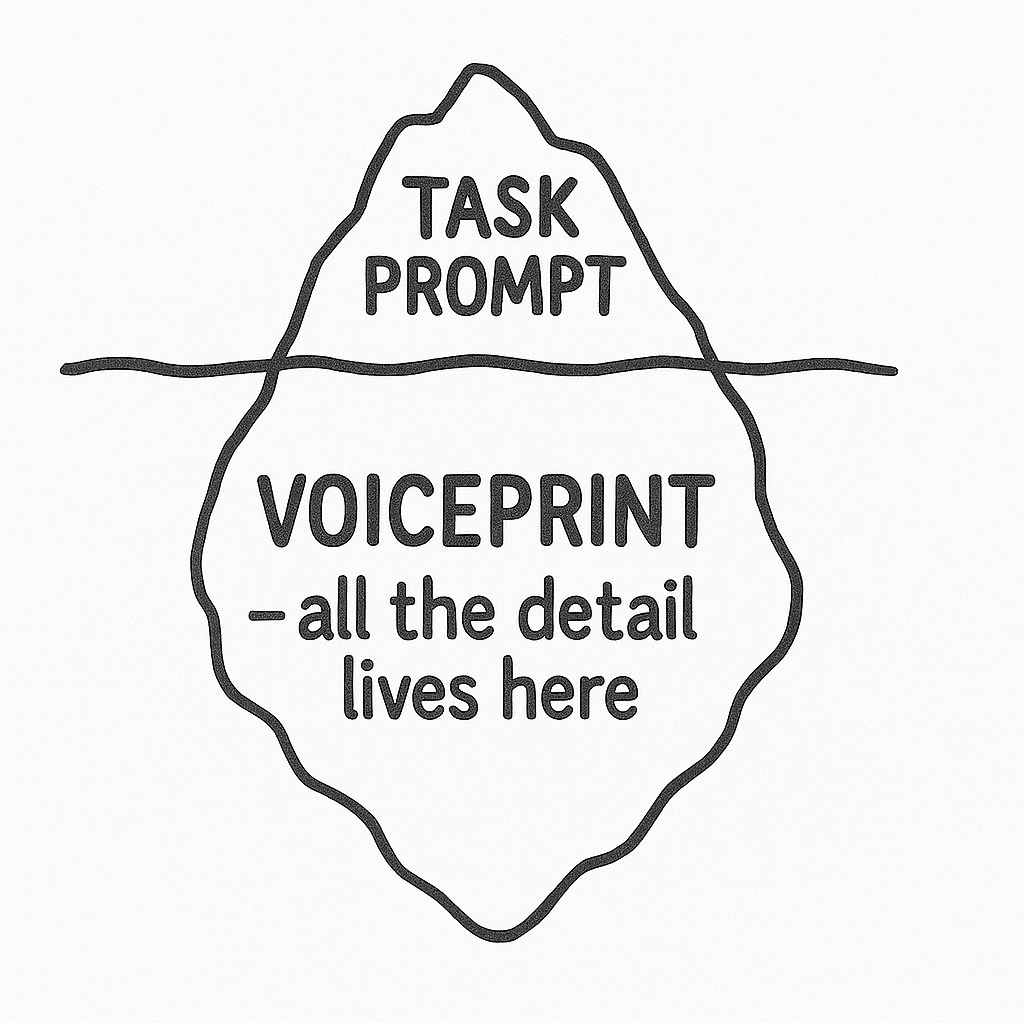

I need to separate two things that sound similar but aren’t:

Identity documentation = Who you are as a writer. Your voice patterns, vocabulary fingerprints, structural preferences, stance toward readers. This is reference material AI should always have access to. Your Voiceprint. Your style spec. Whatever you call it.

Task prompts = The specific request for a specific piece of content. “Write a cold email for this prospect type.” “Draft a LinkedIn post on this topic.” “Expand this outline into prose.”

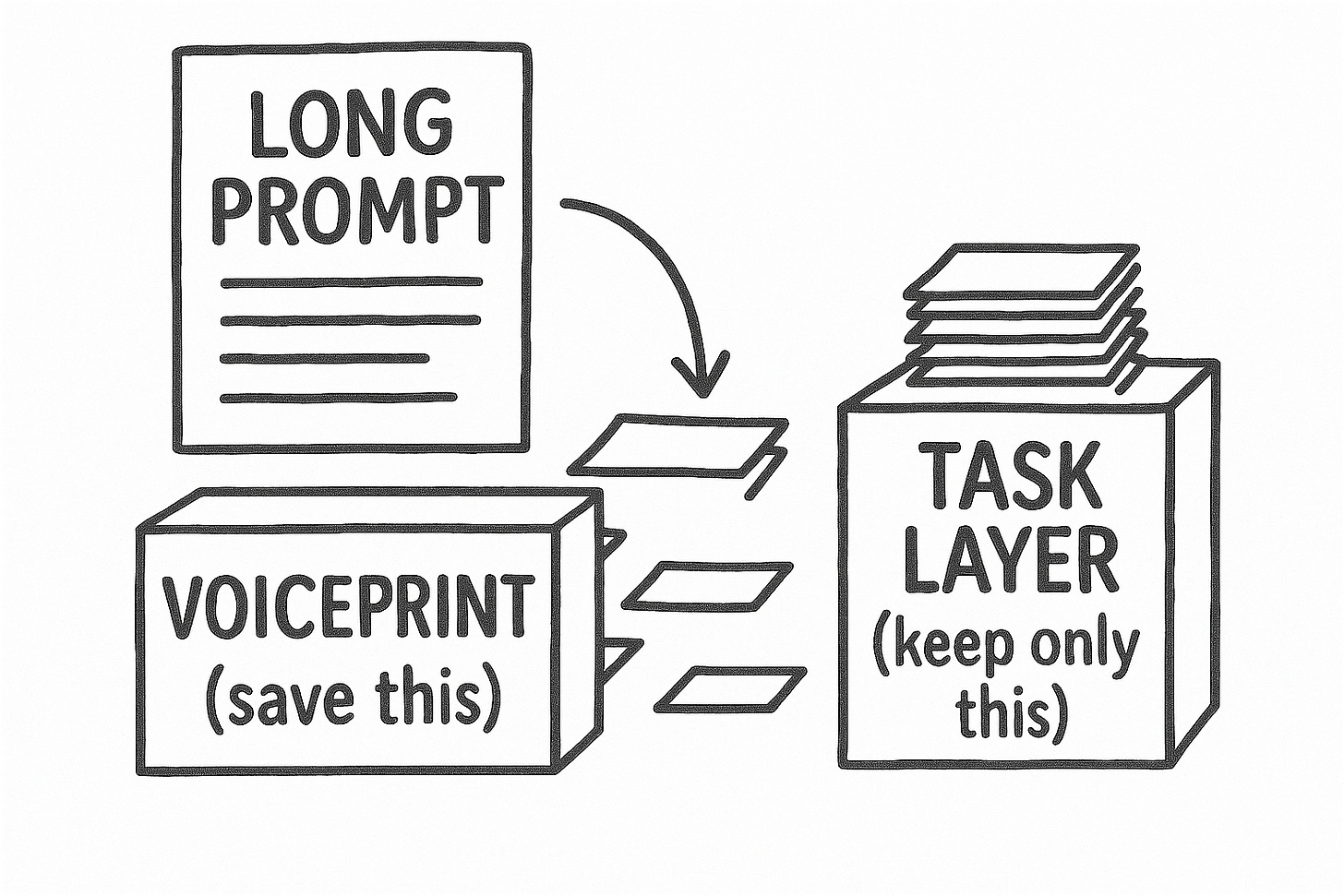

Here’s the thing: Identity documentation should be comprehensive. Task prompts should be minimal.

Build your voice reference thoroughly. Document your patterns in detail. Feed that to AI as context it can draw from.

But when you make the actual request? That’s where minimalism wins. Don’t re-explain your voice in every prompt. Don’t stuff the task with competing constraints. The identity layer handles who you are. The task layer handles what you need right now.

Most people conflate these. They try to establish identity and define the task and set constraints and specify format—all in one massive prompt. That’s where everything falls apart.
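If it helps to see the two layers as code, here is a minimal Python sketch. The names (`VOICEPRINT`, `build_messages`) and the system/user message shape are assumptions for illustration, not any particular vendor's API, and the Voiceprint text is placeholder content.

```python
# Hypothetical sketch: the identity layer and the task layer stay separate.
# VOICEPRINT stands in for your full identity documentation (placeholder text).
VOICEPRINT = """Voice: direct, conversational, a little self-deprecating.
Banned phrases: "I hope this finds you well".
Structure: short paragraphs, one idea each."""


def build_messages(voiceprint: str, task_prompt: str) -> list[dict[str, str]]:
    """Return a chat-style message list: identity first, task second."""
    return [
        {"role": "system", "content": voiceprint},  # who you are (built once)
        {"role": "user", "content": task_prompt},   # what you need right now
    ]


messages = build_messages(
    VOICEPRINT,
    "Write the opening cold email for a prospect who runs a B2B newsletter.",
)
print(messages[1]["content"])
```

The point of the split: the first entry rarely changes, so only the second one gets rewritten per request.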

Constraint Collision (Or: Why Your Task Prompts Are Fighting Themselves)

When you shove everything into a single request, your instructions compete. Literally.

“Be conversational but professional.” Which one wins when they conflict? The AI doesn’t know. Neither do you, probably.

“Keep it concise but include all key points.” Where’s that line? What happens when concise loses the wrestling match to comprehensive?

“Sound confident but acknowledge limitations.” These are opposing forces. You’re asking the model to arm wrestle itself.

The AI resolves these collisions by hedging. It splits the difference. Qualifies statements. Adds “however” and “that said” and “it’s worth noting” to cover all bases.

This is where slop comes from.

Not from AI being bad at writing. From AI being too good at following contradictory instructions simultaneously.

You asked for everything in one breath. It gave you spam fodder.
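A rough way to spot that hedging in a draft is to count the filler phrases named above. This is a sketch under my own assumptions: the function name `count_hedges` is made up, and the phrase list is only the three examples from this post, not a complete inventory of slop.

```python
import re

# Hedge phrases named in the text above; extend the list for your own drafts.
HEDGES = ["however", "that said", "it's worth noting"]


def count_hedges(text: str) -> dict[str, int]:
    """Count case-insensitive whole-phrase matches of each hedge in text."""
    # Lowercase and treat curly apostrophes as straight ones before matching.
    normalized = text.lower().replace("\u2019", "'")
    return {
        phrase: len(re.findall(r"\b" + re.escape(phrase) + r"\b", normalized))
        for phrase in HEDGES
    }


draft = (
    "Our tool saves time. However, results vary. That said, "
    "it's worth noting that many teams like it."
)
print(count_hedges(draft))
```

A high count doesn't prove the prompt was overloaded, but it is a cheap signal that the output is covering all bases.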

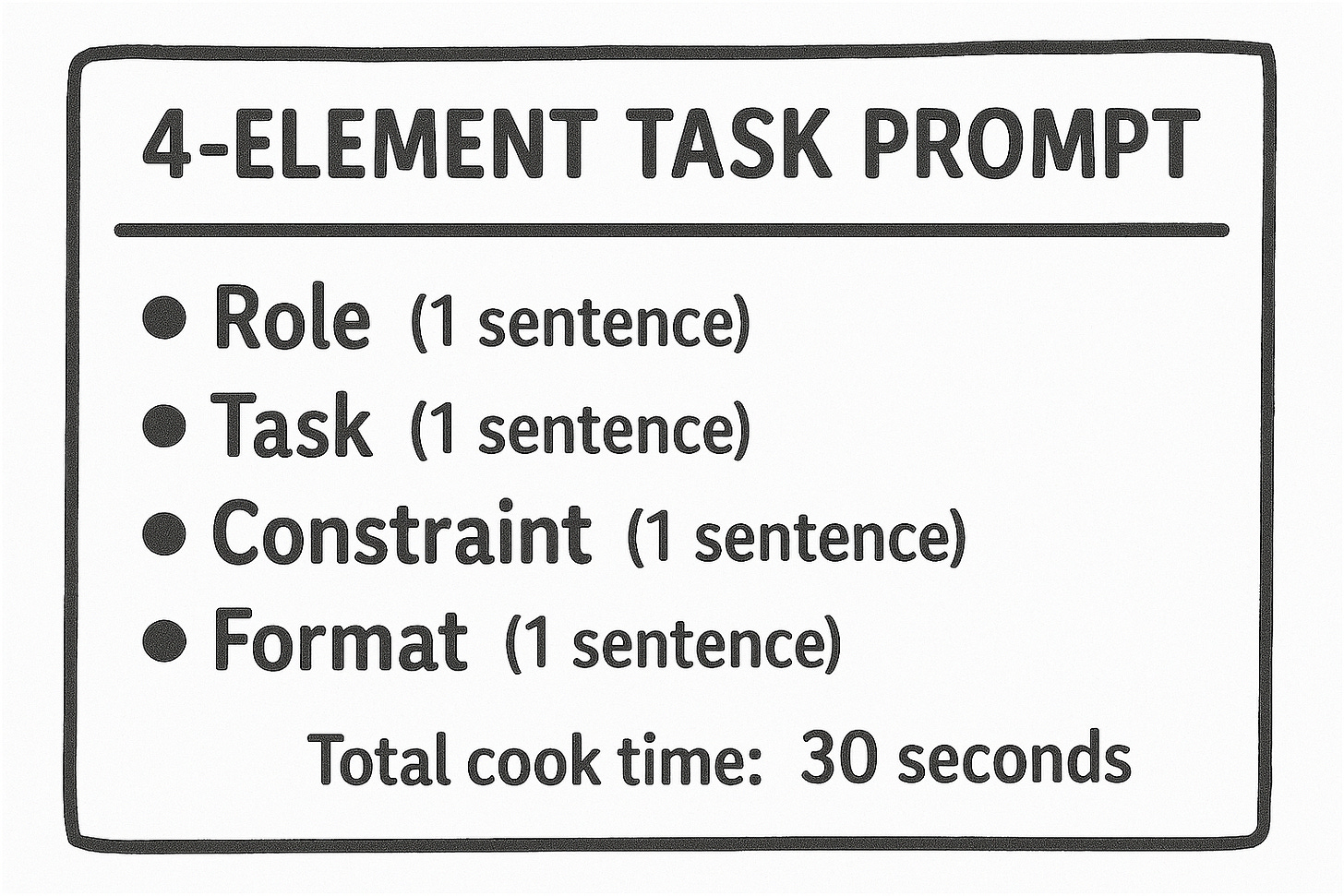

The 4-Element Task Prompt (Embarrassingly Simple Edition)

After testing hundreds of prompts across cold outreach, newsletters, and client work (read: after wasting hundreds of hours I’ll never get back), I kept landing on the same minimal structure for task prompts.

Four elements. One sentence each. That’s it.

1. Role/Context

Who is the AI being for this task? One sentence. Not a biography. (Your identity documentation already handles the deep stuff.)

2. Task

What specific output do you need? One deliverable. Not a project plan.

3. Constraint

The single most important rule for this specific request. One. Not a list of twelve things to avoid.

4. Format

How should it look? Structure. Length. Done.

Example (assuming AI already has your Voiceprint loaded):

“You’re writing cold outreach as me. Write the opening email for a prospect who runs a B2B newsletter. Keep it under 150 words. No throat-clearing, no ‘I hope this finds you well.’”

Four sentences. Maybe 35 words total.
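The same four elements as a small Python sketch, in case you want to template them. The class and field names (`TaskPrompt`, `fmt`) are hypothetical, and the sentences are rendered in the order of the element list (constraint before format), which differs slightly from the example above.

```python
from dataclasses import dataclass


@dataclass
class TaskPrompt:
    """Four elements, one sentence each (field names are illustrative)."""
    role: str
    task: str
    constraint: str
    fmt: str

    def render(self) -> str:
        # Join the four sentences into a single short prompt.
        return " ".join([self.role, self.task, self.constraint, self.fmt])

    def word_count(self) -> int:
        # Whitespace-separated word count of the rendered prompt.
        return len(self.render().split())


prompt = TaskPrompt(
    role="You're writing cold outreach as me.",
    task="Write the opening email for a prospect who runs a B2B newsletter.",
    constraint="No throat-clearing, no 'I hope this finds you well.'",
    fmt="Keep it under 150 words.",
)
print(prompt.render())
print(prompt.word_count())
```

Having exactly one slot per element is the design choice here: there is nowhere to put a twelfth rule.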

Compare that to the 487-word monster that re-explains your audience demographics, restates your positioning, re-describes your tone guidelines, re-lists your vocabulary preferences, re-specifies your formatting requirements—all information that should live in your Voiceprint, not crammed into every single request.

One version gives you something you’d actually send. Put your name on it. Let it hit 200 inboxes.

The other gives you something that sounds like it was written by everyone and no one. A corporate ghost. Delete.

The Right Place for Comprehensive Detail

I’m not saying “never document your voice thoroughly.” The opposite, actually.

Build your identity documentation with obsessive detail:

Your vocabulary patterns and banned words

Your sentence structure preferences

Your paragraph architecture

Your stance toward readers

Your tempo and rhythm

Examples of your best work

Anti-patterns to avoid

All of that matters. All of that should exist. But it exists as reference material the AI has access to—not as instructions you repeat every time you make a request.

Think of it like working with a human collaborator who’s read your complete style guide, studied your past work, and internalized your patterns. You don’t re-explain everything each time you need something. You just say: “Write me a cold email for newsletter operators. Keep it tight. No corporate throat-clearing.”

They already know who you are. Now you’re just telling them what you need.

That’s the relationship you’re building with AI. Identity layer handles the who. Task layer handles the what.

When You Actually Need More (The 20% Exception)

Minimalism isn’t religion. Sometimes four elements won’t cut it for a task prompt.

Add complexity when:

The task needs domain-specific knowledge the model won’t apply automatically (legal, medical, technical stuff that could actually matter)

You’re matching output to specific examples not in your identity documentation

The format is genuinely weird or non-standard

You’ve tested the simple version and it failed in a specific, nameable way

That last part matters.

Don’t preemptively add constraints because they might help. Add them because the minimal version broke in a way you can point to. “The output used industry jargon I told it to avoid.” Okay. Add that constraint to this request. (Or better: add it to your identity documentation so you don’t have to repeat it.)

Most task prompts never need more than six elements. If you’re past eight, you’ve probably re-entered the collision zone.
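A quick sanity check for those thresholds, sketched in Python. Counting one sentence as one element is my simplifying assumption, the function names are made up, and the middle label is mine; only the six and eight cutoffs come from the text above.

```python
import re


def count_elements(task_prompt: str) -> int:
    """Rough proxy: treat each sentence as one element (an assumption)."""
    sentences = re.split(r"(?<=[.!?])\s+", task_prompt.strip())
    return len([s for s in sentences if s])


def collision_risk(task_prompt: str) -> str:
    """Label a task prompt using the six and eight element cutoffs."""
    n = count_elements(task_prompt)
    if n <= 6:
        return "fine"
    if n <= 8:
        return "getting heavy"
    return "collision zone"


example = (
    "You're writing cold outreach as me. "
    "Write the opening email for a prospect who runs a B2B newsletter. "
    "Keep it under 150 words. "
    "No throat-clearing, no 'I hope this finds you well.'"
)
print(count_elements(example), collision_risk(example))
```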

The Subtraction Test (Your Homework, If You Want It)

Next time you’re building a task prompt, try this:

Write your detailed version. All the context. All the requirements. The whole beautiful mess.

Then cut it in half. Move anything that’s about who you are into your Voiceprint. Keep only what’s about this specific request.

Test both.

I’d bet you a doughnut the shorter version produces something closer to usable. Maybe not perfect. But workable. A foundation you can build on instead of a hedged disaster you need to tear down and start over.
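The sorting step of that test can be sketched in a few lines of Python. Everything here is hypothetical: you still tag each sentence as "identity" or "task" by hand, and the function name `subtract` and the sample sentences are mine.

```python
def subtract(detailed: list[tuple[str, str]]) -> tuple[list[str], list[str]]:
    """Split (kind, sentence) pairs into Voiceprint additions and a lean task prompt."""
    voiceprint = [sentence for kind, sentence in detailed if kind == "identity"]
    task = [sentence for kind, sentence in detailed if kind == "task"]
    return voiceprint, task


# Hand-tagged sentences from an imaginary over-stuffed prompt.
detailed_prompt = [
    ("identity", "My tone is direct and a little self-deprecating."),
    ("identity", "Never use the phrase 'I hope this finds you well.'"),
    ("task", "Write the opening email for a prospect who runs a B2B newsletter."),
    ("task", "Keep it under 150 words."),
]

to_voiceprint, lean_task = subtract(detailed_prompt)
print(" ".join(lean_task))
```

Run the original and the lean version against the same model and compare the drafts side by side.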

The prompt engineering industrial complex wants you to believe mastery means more. More techniques. More structure. More comprehensive frameworks. (More friggin’ courses to sell you. But that’s a rant for another day.)

Working with AI isn’t about controlling every variable in every request. It’s about separating identity from task—building the reference layer once, then prompting minimally on top of it.

Four elements for the task. Comprehensive documentation for the identity. Don’t conflate them.

The shortest task prompt that produces usable output is the best task prompt for writing. Your Voiceprint handles the rest.

What’s your most over-engineered prompt disaster—and looking back, how much of it was identity documentation crammed into a task request?

Crafted with love (and AI),

Nick “Four Sentence Max” Quick

PS: Want more on collaborating with AI without losing your voice? Subscribe for new posts every Sunday and Wednesday.