The Humanizer Tool Scam That’s Costing Creators Millions

You're not getting flagged for using AI. You're getting flagged for being boring.

AI detectors don’t detect AI. They detect average.

I have a friend who produces content like a factory produces plastic straws.

Disposable. Efficient. Utterly indistinguishable from every other plastic straw that’s ever existed. Technically functional. Environmentally catastrophic. Killing approximately six hundred turtles per post.

(That statistic is made up. The spiritual damage is not.)

He chases trends the way a golden retriever chases squirrels—no strategy, no direction, just pure dopamine-fueled pursuit of whatever moves. Algorithm shifts? He pivots. New platform feature? He’s there. Viral format of the week? Already filming.

The man is a one-person slop factory. And I say this with genuine affection. (He’d say the same about me, though his criticism would involve the phrase “leaves money on the table” repeated with increasing desperation.)

Last month, he cornered me at a coffee shop—the kind of ambush you can’t escape without being rude—and spent forty-five minutes trying to convince me that I needed to subscribe to a “humanizer” tool.

“Your stuff will never see the light of day,” he said, with the fervor of someone who’s just discovered a new religion. “The algorithms are cracking down. Detection is everywhere. You need this or you’re done.”

He pays $47 a month for software designed to make AI content sound more human.

I’ll just let that sentence sit there for a second. Let it marinate. An artificial intelligence tool. To make artificial intelligence content. Sound. Less. Artificial.

(We are a species that invented the Pet Rock, the Shake Weight, and five-year extended warranties on devices with an eighteen-month lifespan. We will absolutely buy software to un-robot our robot words. The market provides.)

He ran one of my newsletters through his miracle software—without asking, because boundaries are for people who aren’t “trying to help”—and triumphantly showed me the results.

“See? You’d flag at 67% AI-generated. You need this.”

I didn’t have the heart to tell him what that percentage actually meant.

What Detection Actually Detects (Spoiler: Not What You Think)

Here’s the thing my friend doesn’t understand, and neither does the billion-dollar industry that’s taken his credit card hostage:

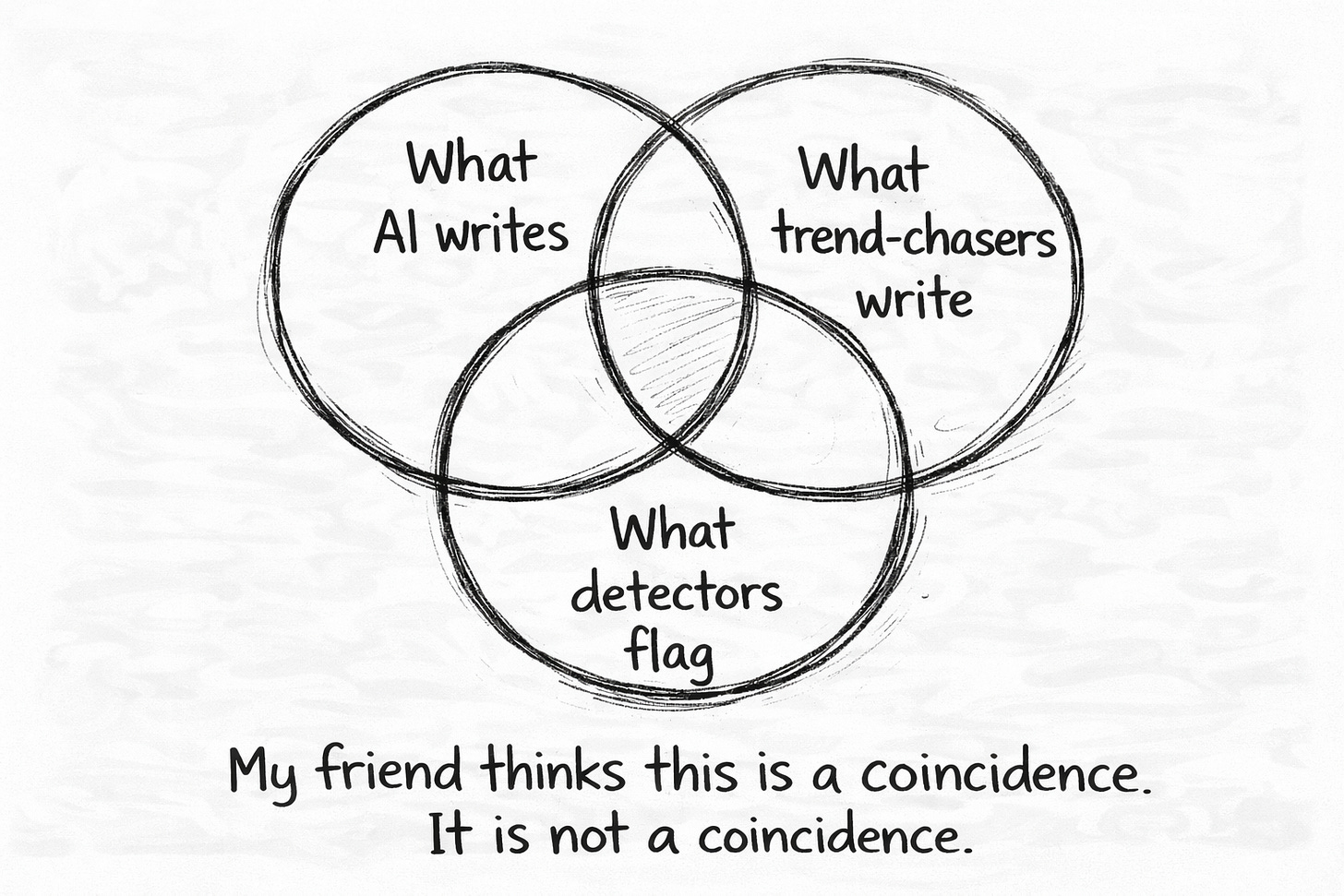

AI detectors don’t detect AI.

They detect average.

That’s it. That’s the whole con. A simple truth dressed up in enough technical language to seem sophisticated, sold to panicked creators who think the robot police are coming for their content.

Detection tools work by measuring how closely your text clusters around the statistical mean. The words most writers use. The structures that appear over and over across millions of documents. The predictable, safe, expected patterns that accumulate like sediment at the bottom of a very boring lake.

Text that hugs that mean? Flagged.

Text that deviates meaningfully? Passes clean.

The detector has no idea who or what wrote the words. It doesn’t care. It’s not some digital bloodhound sniffing for the scent of silicon. It’s a statistical model asking one question: Does this sound like everyone else?

Which means—and I need you to sit with this—you can write convergent garbage entirely by hand, with your own human fingers and your sad human brain, and get flagged as AI-generated.

You can also produce genuinely distinctive work with AI assistance and pass completely clean.
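To make "does this sound like everyone else?" concrete, here is a toy sketch in Python. It is not how any particular detector works. Real tools score text with trained language models. This version assumes a tiny, made-up reference corpus (`REFERENCE`) and a hypothetical helper (`average_log_prob`) that measures how common each word is under a smoothed unigram model. Notice that nothing in the function asks who, or what, wrote the text.

```python
import math
from collections import Counter

# Hypothetical stand-in for "millions of documents". A real detector
# would use a trained language model, not a short word list.
REFERENCE = (
    "the key to growing your audience is showing up consistently "
    "with content that resonates it is not about being everywhere "
    "it is about being intentional with your energy and your content "
    "consistency is the key to growth and content that resonates wins"
).split()


def average_log_prob(text: str, reference: list[str]) -> float:
    """Mean log-probability per word under a unigram model of `reference`,
    with add-one (Laplace) smoothing so unseen words get a small, nonzero
    probability. Closer to zero = the text hugs the common patterns."""
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    counts = Counter(reference)
    total = len(reference)
    vocab_size = len(counts) + 1  # +1 bucket for words never seen
    logs = [math.log((counts[w] + 1) / (total + vocab_size)) for w in words]
    return sum(logs) / len(logs)


generic = "showing up consistently with content that resonates is the key"
weird = "alice with adhd and a deadline falls down research rabbit holes"

# The generic sentence scores as "more average" than the weird one.
print(average_log_prob(generic, REFERENCE) > average_log_prob(weird, REFERENCE))  # True
```

The scorer only sees word frequencies. A hand-written cliché lands near the middle, and a strange sentence lands far from it, regardless of authorship.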

When I later ran some of my own early newsletters through a detector, they flagged at 70%+ AI-generated. Written entirely by hand. By me. A person who now teaches people how not to sound like AI. I wrote them long before AI was unleashed on the public like a plague with a marketing budget, back when ChatGPT was still a twinkle in Sam Altman’s eye and the rest of us were producing slop the old-fashioned way.

(It’s like a personal trainer getting winded on the stairs. Humbling doesn’t begin to cover it.)

I’d optimized myself into the exact center of the bell curve. Years of chasing “best practices,” copying what worked, smoothing every edge until my writing was statistically indistinguishable from the mean. I’d become the average the detection tools would later learn to measure.

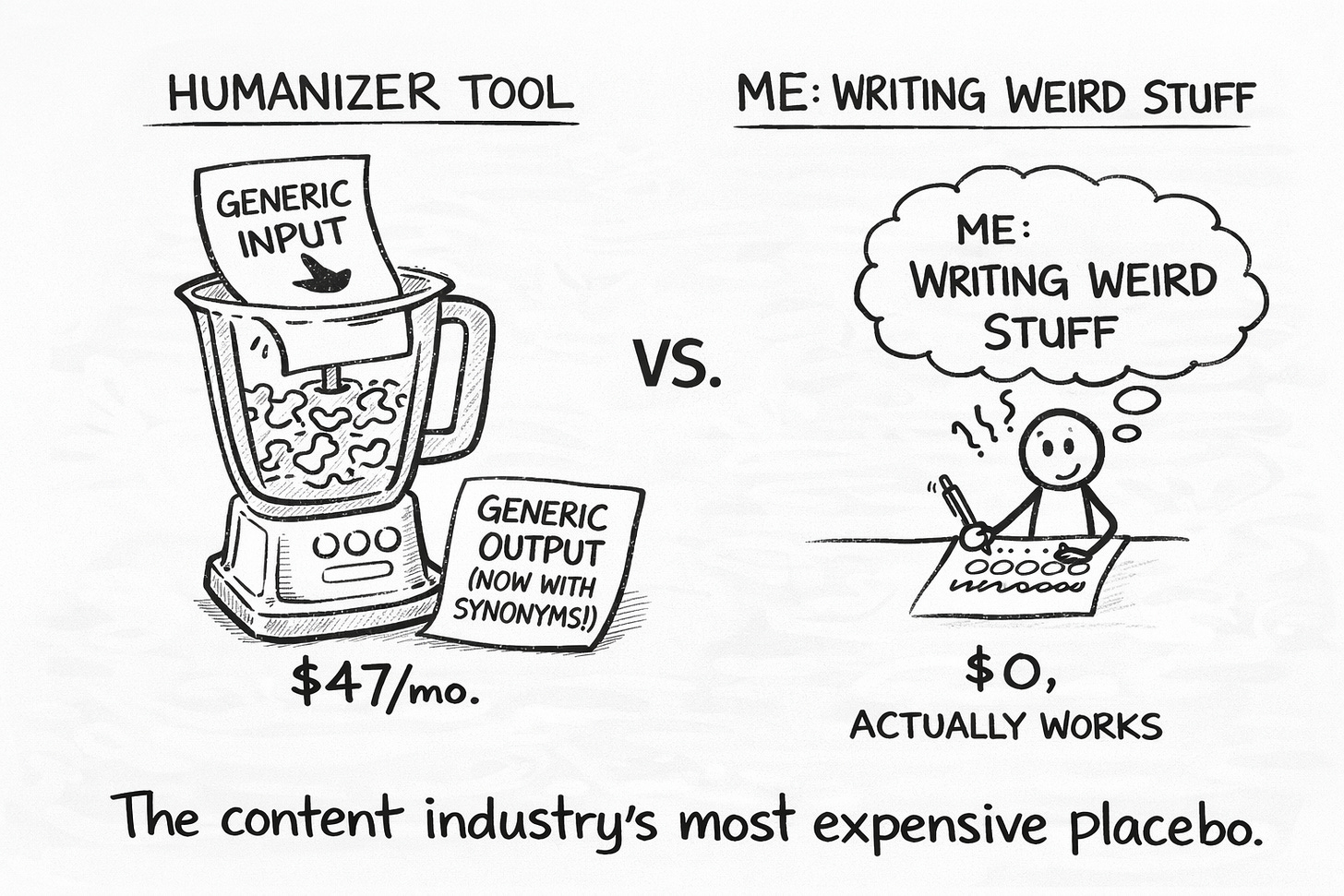

The humanizer software he’s paying for? It takes his already-average content and shuffles it into a different flavor of average. New synonyms, same blandness. Restructured sentences, same gravitational pull toward the middle.

He’s paying $47 a month for a tool that converts generic into different generic—like a money laundering service for boring.

The Beautiful Stupidity of the Humanizer Industry

Let me explain what my friend is actually paying for.

Humanizer tools take your text and:

Swap words for synonyms. Which means they replace one common word with another common word. “Optimize” becomes “enhance.” “Streamline” becomes “improve.” You’re still in the same linguistic neighborhood. You’ve just moved from one beige apartment to a slightly different beige apartment.

Restructure sentences. Into different configurations of the same fundamental blandness. The words are rearranged like furniture. The room is still ugly.

Add “variation” through randomization. Which is noise pretending to be signal. Static pretending to be music. The written equivalent of putting sunglasses on a corpse and calling it Weekend at Bernie’s.

What humanizer tools don’t do is make your writing sound like you. They have no idea who you are. They only know what average looks like—and they’re exceptionally skilled at helping you get there from slightly different directions.

My friend runs everything through his humanizer. It works. His content passes detection. It also passes right through readers without leaving a mark. Undetectable and unforgettable are not the same thing, but he’s nailed one of them.

(He’s also started using phrases like “synergize your content strategy” unironically. The tool is working exactly as designed. Just not in the way he thinks.)

The Uncomfortable Math My Friend Won’t Accept

Let me pull back the curtain on what’s actually happening under the hood.

AI generates text by predicting the most probable next token. “Most probable” means statistically average—the words and patterns that appear most frequently in training data. When an AI writes, it’s essentially asking: What would most people say here?

And then it says that. Over and over. Forever. The mathematical mean made manifest. The platonic ideal of the middle of the road.

Detection tools measure the same thing from the other direction. They ask: Does this text cluster suspiciously close to what most people would say?
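Here is a toy sketch of that "same thing from the other direction" idea, in Python. It assumes a word-level bigram model trained on a made-up snippet; `TRAINING`, `build_bigrams`, `generate_greedy`, and `mean_log_prob` are hypothetical names for illustration. Real models are neural networks over subword tokens, and most sample from the probabilities rather than always taking the top choice, so treat this as a cartoon of the mechanism, not a description of any product.

```python
import math
from collections import Counter, defaultdict

# Tiny hypothetical training text; real models learn from billions of tokens.
TRAINING = (
    "content is king . content is everything . content is king . "
    "consistency is key . consistency is everything . audience is king ."
).split()


def build_bigrams(tokens):
    """Count which token follows which (a toy stand-in for a language model)."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows


def generate_greedy(follows, start, length):
    """Writer's side: always append the single most probable next token."""
    out = [start]
    for _ in range(length):
        options = follows.get(out[-1])
        if not options:
            break
        out.append(options.most_common(1)[0][0])
    return out


def mean_log_prob(follows, tokens):
    """Detector's side: how probable was each step, on average?
    Unseen transitions get an arbitrary floor probability of 1e-3."""
    logs = []
    for prev, nxt in zip(tokens, tokens[1:]):
        counts = follows.get(prev, Counter())
        total = sum(counts.values())
        p = counts[nxt] / total if total and counts[nxt] else 1e-3
        logs.append(math.log(p))
    return sum(logs) / len(logs)


model = build_bigrams(TRAINING)
average = generate_greedy(model, "content", 2)
print(" ".join(average))  # content is king
weird = "content is a feral raccoon".split()
print(mean_log_prob(model, average) > mean_log_prob(model, weird))  # True
```

The generator and the scorer read the same probability table. Text built by always picking the likeliest word is, by construction, the text the scorer finds least surprising.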

Here’s where it gets philosophically uncomfortable:

He’s spent years chasing what works until “what works” is all that’s left. He is the average the detector is measuring.

He uses AI to generate content. Then he uses AI to humanize the AI content. Then he wonders why detection tools (which are also AI) flagged him in the first place. It’s algorithms all the way down, and he’s paying $47 a month to spin in the loop.

(There’s a sci-fi story in here somewhere. Man spends decade becoming indistinguishable from robot. Buys robot tool to seem less robotic. Robot tool succeeds in making him indistinguishable from different robot. Man declares victory. Credits roll. Nobody learns anything.)

When I write something that flags at 67%, it’s not because I’m secretly an AI. It’s because parts of my writing drift toward the mean. The generic transitions I haven’t murdered yet. The safe vocabulary I default to when I’m tired. The places where I’ve smoothed myself out instead of leaning into my specific weird.

The detector isn’t wrong. Those parts are average. That’s useful information.

My friend sees the same feedback and reaches for his credit card.

What The People Who Never Worry About Detection Know

My friend is terrified of AI detection. Checks every piece. Runs everything through multiple tools. Pays for software to “fix” what the other software flagged.

I haven’t thought about detection in months.

Not because I’m arrogant. (Okay, not only because I’m arrogant.) But because the writers who never worry about detection all share one characteristic:

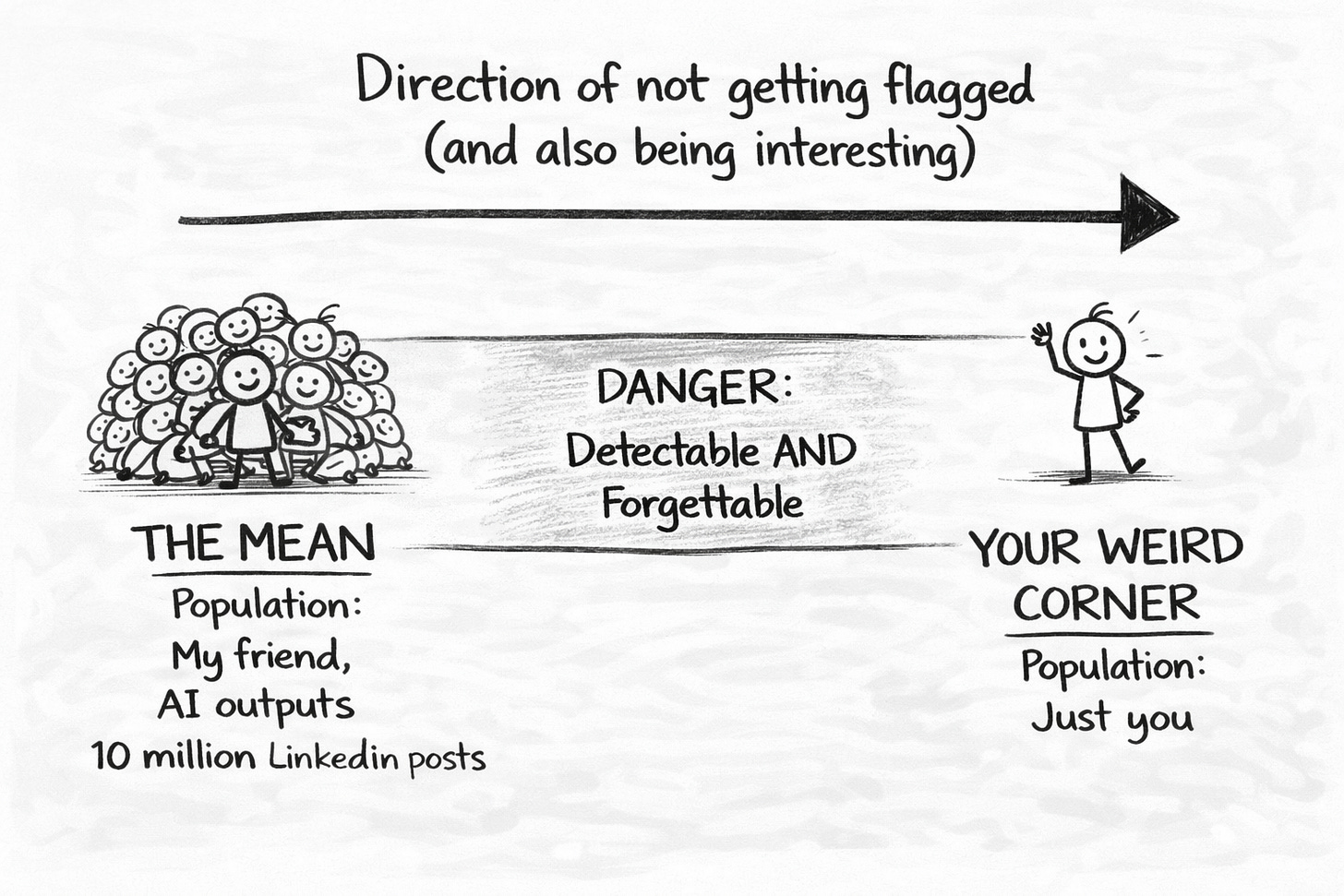

They’re too weird to flag.

Not random-weird. Not chaos-weird. Not “let me use seventeen exclamation points and call it personality” weird.

Distinctively, consistently, recognizably weird in their own specific direction.

This is divergence: writing that deviates from the statistical mean in patterns that are yours. Your vocabulary tics. Your structural obsessions. The rhythm that sounds like you reading aloud to a room that may or may not be listening.

Divergent writing doesn’t beat detection. It makes detection irrelevant.

Because it doesn’t cluster where detectors look. It’s off in its own corner of the statistical landscape, being strange in the exact way only you know how to be strange.

Let me show you the difference:

My friend’s style: “The key to growing your audience is showing up consistently with content that resonates. It’s not about being everywhere—it’s about being intentional with where you spend your energy.”

What divergence sounds like: “AI didn’t change how I write. It changed what I don’t have to write anymore—the research rabbit holes I’d fall down like Alice if Alice had ADHD and a deadline, the outline scaffolding I’d overthink until it collapsed under its own pretension, the first-draft garbage I used to stare at for an hour wondering if this was the moment I finally became a fraud. The actual sentences? Still mine. Still weird. Still full of parentheticals that would make my high school English teacher reach for the bottle of Southern Comfort she kept in her desk drawer.”

One clusters toward the mean so tightly it practically has a gravitational field. The other has friction, texture, specificity.

Guess which one passes detection without trying.

My friend would run both through his humanizer. The tool would “fix” them both. They’d both come out different-but-still-generic.

He’d pay $47 for the privilege.

The Three Questions Worth More Than Any Subscription

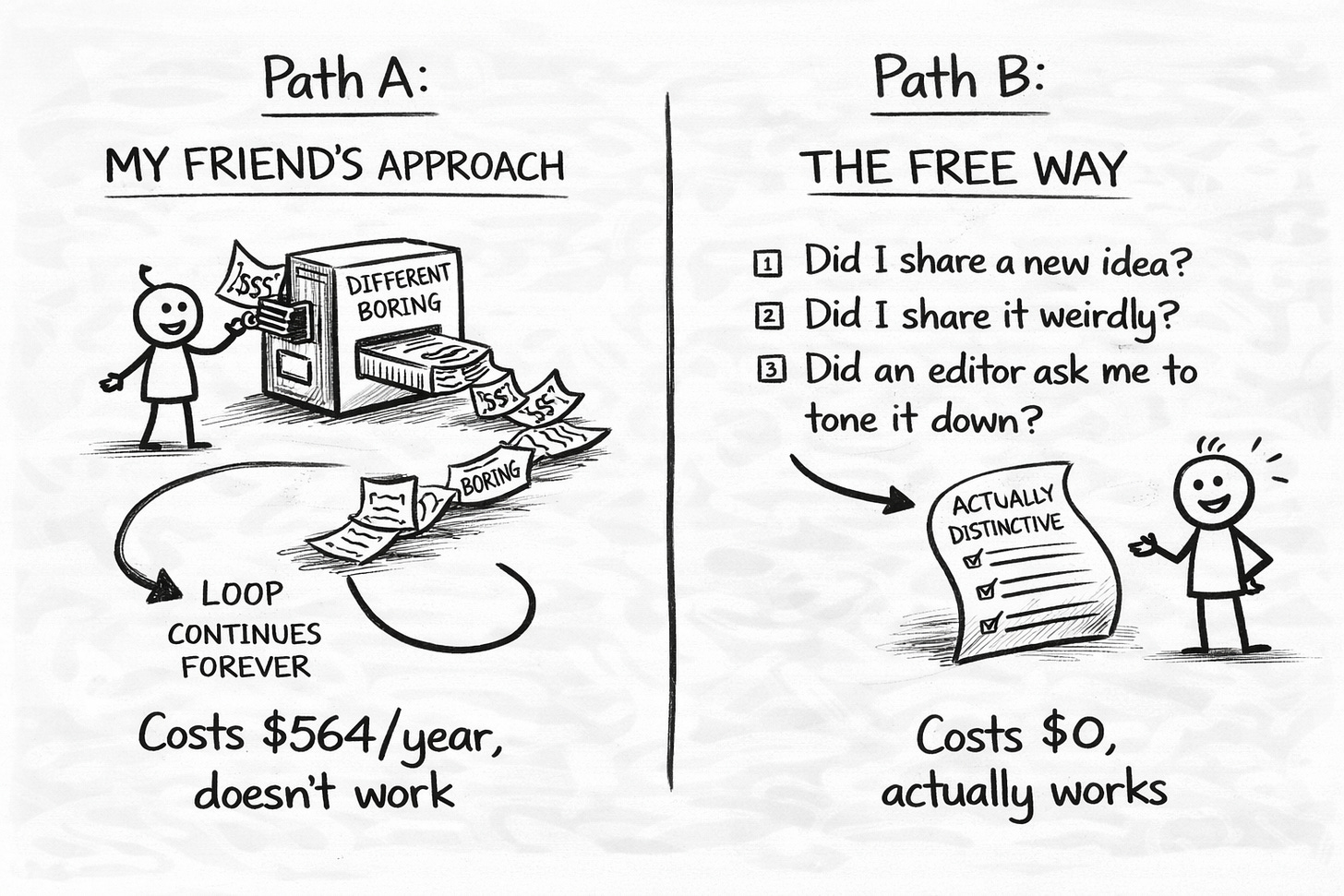

My friend keeps asking if I’ve signed up for humanizer software yet.

Every time I tell him the same thing: I have something better. It’s free. And it actually works.

Before I publish anything, I ask three questions:

Question One: Where does this sound like anyone could have written it?

Find at least two moments. These are the convergence points—the places where I’ve drifted into the gravitational pull of Everyone Else’s Ideas, Expressed In Everyone Else’s Words.

I rewrite them with my patterns. My vocabulary. My rhythm. The way I’d actually explain this if I were three drinks in at a dinner party and someone made the mistake of asking.

(My friend’s answer would be “nowhere.” He’s been swimming in average so long he thinks it’s air.)

Question Two: Where does this sound unmistakably like ME?

Find at least three moments. If I can’t point to three places where my fingerprints are visible—where a reader who knows my work would recognize my voice—I’ve converged too far.

I keep adding divergence until I can say: “That. That’s mine. Nobody else would have said it quite like that.”

(My friend would struggle here too. His fingerprints have been optimized off. Focus-grouped away. A/B tested into oblivion. He writes like a composite sketch of what content is supposed to be.)

Question Three: Would a reader who knows my work recognize this as mine?

Not because of the topic. Not because my name is on it.

Because of how it feels to read. The texture. The way the sentences land. The specific flavor of strange that only I produce.

If the answer is no, I’m not done yet.

This takes five minutes. It costs nothing. It works forever.

And it does something my friend’s $47 monthly subscription never will: it makes me better, not just different-flavored average.

What I Can’t Tell My Friend

Here’s the part I don’t say to him, because he’s not ready to hear it:

The humanizer tool isn’t his problem. The humanizer tool is a symptom.

His problem is that he’s spent years systematically removing everything distinctive about his content in service of What Works. He’s optimized for algorithms that reward sameness. Chased trends that pull everyone toward the same center. Copied formats that were copied from formats that were copied from something that was probably interesting once, before it got photocopied into oblivion.

He’s become a human content average.

And no amount of synonym-shuffling software will fix that.

The detector is telling him the truth: You’ve written a lot of words that add up to a whole lot of nothing.

That’s not a bug to be patched. That’s a feature request his soul has been filing for years.

(God, that’s bleak. But it’s also true. And sometimes truth shows up in 8-inch heels you can’t walk in but already bought.)

My friend will probably keep paying his $47 a month. He’ll keep chasing algorithms. He’ll keep wondering why his “optimized” content feels hollow even when it sometimes performs.

And somewhere, a detection tool will keep flagging him… not because he’s using AI, but because he’s stopped being himself so effectively that the difference no longer matters.

The robots were never coming. The averageness was already there.

Be honest: have you ever paid for a humanizer tool? No judgment. (Okay, a little judgment. But mostly curiosity.)

Crafted with love (and AI),

Nick “Still Not Paying for Humanizer Tools” Quick

PS… This newsletter is free. Humanizer tools are $47/month. One of these actually helps your writing. Sundays and Wednesdays, guaranteed. Daily when I’m feeling unhinged.