The Human-AI-Human Sandwich

You're one missing layer away from AI output that actually sounds like you wrote it.

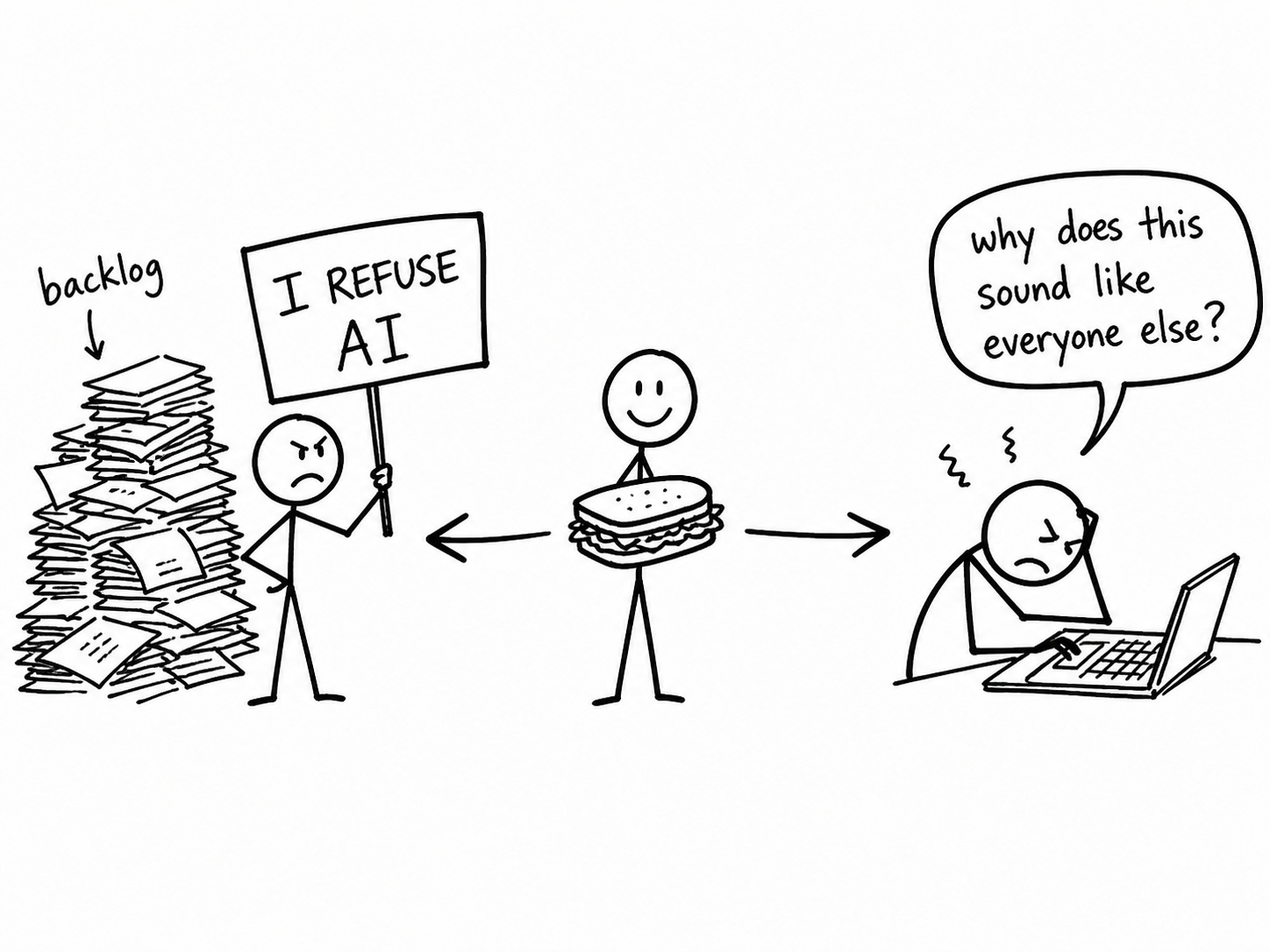

Two types of creators are quietly failing the work they actually came here to do.

The first kind refuses to use AI. A principled stand. Authenticity as armor. The slow, righteous burn of manual production while slop factory operators publish three posts before breakfast and sleep like babies. (I respect this position the same way I respect people who refuse to use microwaves. The conviction is real. The potatoes are cold. But at least their soul is intact, probably.)

The second kind surrendered so completely they’ve developed a kind of Stockholm syndrome about it. Type a prompt. Accept the output. Hit publish. Look confused when it performs like a form letter someone left in a parking lot. They use AI the way a drunk uses a lamppost. For support, not illumination. Then they blame the lamppost.

Creating work you’re proud of, at a pace that doesn’t wreck you, is the actual goal.

Not “using AI better.” Not “optimizing your content workflow.” Not any of the phrases that make this sound like a logistics problem when it’s a creative one.

Both failures share a cause. One missing layer.

The model that actually works is a sandwich. Human on top. AI in the middle. Human on the bottom. There is no accompanying course for $997. No countdown timer. No “founding member” pricing that expires at midnight and somehow resets by morning. No testimonial from someone named Chad who 10x’d his output in 30 days and has chosen not to share any verifiable details about that. Simple truths are profoundly disappointing to people who paid for a course about them. AI is not the writer. And autocomplete left unsupervised produces the kind of sentence that a medieval peasant would correctly identify as a bad omen.

Layer 1: The Original Thought

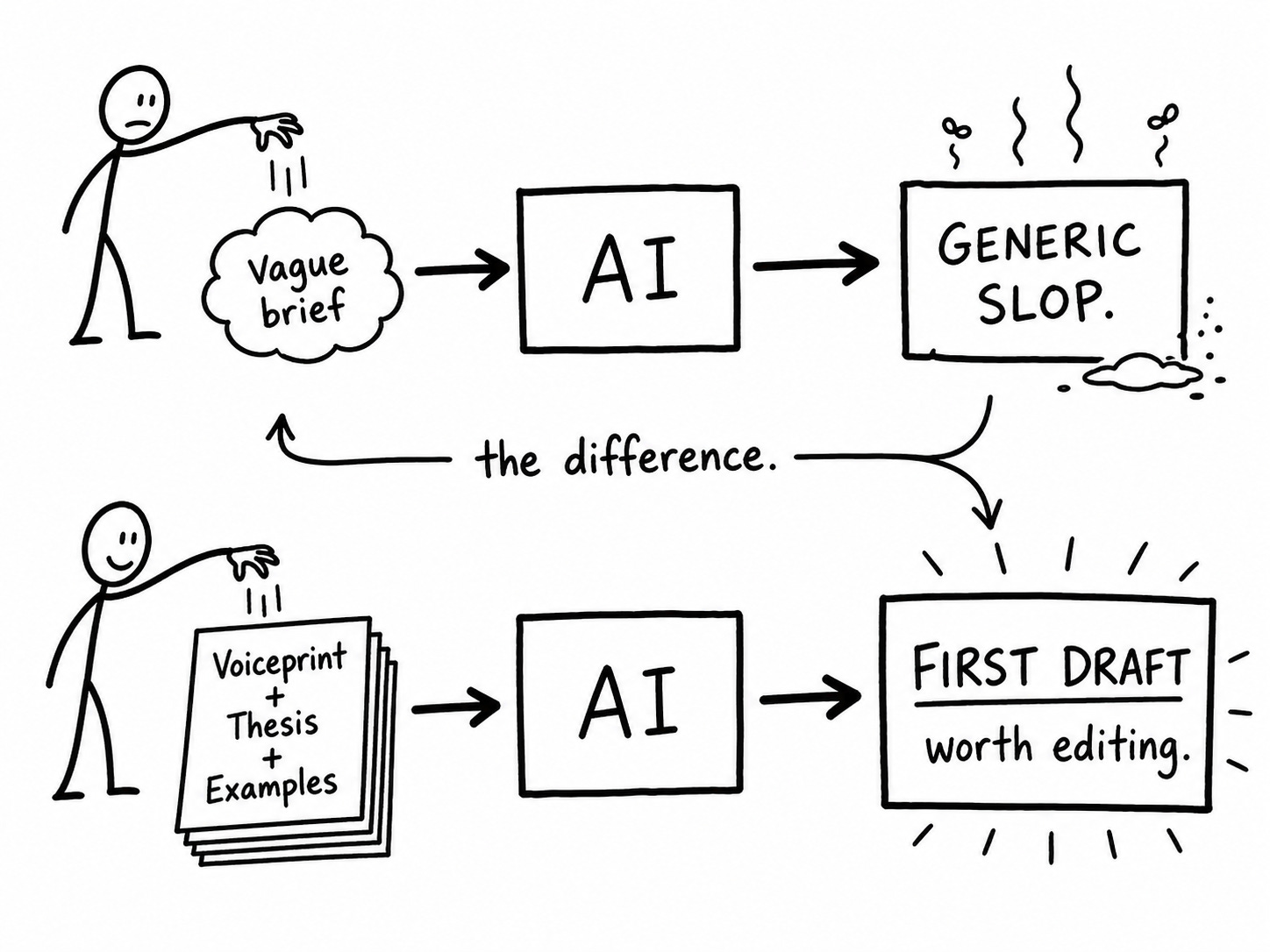

Nobody wants to hear this, so I’ll say it plainly: the generic output is not AI’s fault.

It’s yours.

You typed “write me a post about productivity and AI” and you got back a post about productivity and AI. Competent. Forgettable. Completely interchangeable with the several thousand other posts published on that exact topic this week by people who also typed a vague brief and are also confused why their content performs like the second page of Google search results. (There are approximately 7.5 million articles published every single day across all platforms. “AI and productivity” is not an underserved niche. It is a traffic jam with a content calendar.)

AI does not invent what you do not give it. This seems obvious. It is being treated as a mystery. It pattern-matches against an almost incomprehensible volume of human text and produces the most statistically plausible continuation of whatever you handed it. Hand it vague, you get average. Hand it generic, you get slop. Hand it the documented, mechanical, specific version of how you actually think and write, and you get something worth developing.

This layer has two jobs. Skip either one and the machine runs fine. The output just has no reason to be yours specifically.

Your Voiceprint. The VAST framework is what turns “write like me” from a useless instruction into something AI can actually follow. You’re not saying “be conversational.” You’re documenting the words you never use, the way you open a section, the relationship you have with your reader, the rhythm your sentences run before they release into something complicated. Every writer has these patterns. Most can’t name them. VAST makes you name them, which turns vague intention into actual instructions. (“Be conversational” is what you say when you have instructions to give and have chosen not to give them. AI will fill the gap with its best approximation of human warmth, which reads like someone who studied friendliness from a manual and passed the exam but has never been to a dinner party.)

Your actual thesis. The irreplaceable part. The angle only you bring to this piece, on this day, given what you actually believe. Not a synthesis of existing takes. Not a summary of what everyone else already said, redistributed by you in a slightly different sequence. The thing you’d say if you were explaining this to a smart friend who would push back if you were being lazy. (The friend who agrees with everything you say is charming. They are not improving your thinking. They are improving your mood. There is a difference, and it shows up in your analytics dashboard.)

Here’s what this looks like in practice.

Sloppy brief: Write a post about how creators should use AI for their content.

Sharp brief: I’m writing about the Human-AI-Human Sandwich. The argument is that most creators either refuse AI entirely or surrender to it completely, and both fail the actual creative goal. The angle: AI is the transmission, not the engine. The piece has three layers. What you bring before AI touches it. How you configure the session. What you bring after. Each layer has a specific job. The failure mode for each is that creators skip it.

The output difference is not subtle. (I’ve run this experiment with embarrassing frequency. It’s become, at this point, my personality. My chihuahua has started looking at me differently.)

The generic was already inside the first brief. The second brief leaves no room for it.

Layer 2: The Translation

This is where AI enters.

You’ve done the thinking. The original thought exists. Now you hand it to AI and the rendering begins. What comes back is a translation of your thinking into draft form. The quality of that translation depends entirely on how complete your instructions are before you send anything.

Most people type what they vaguely want and are then surprised to receive exactly what they vaguely deserve.

Complete instructions have three parts.

Your Voiceprint. Load it first, before anything else. AI reads context in sequence, and you want your documented voice to be the first thing it absorbs before it sees what you’re asking it to write. (Without it, AI writes the way someone talks when they're trying very hard not to say anything that could be used against them later.)

Your deliverable spec. This is where most instructions fall apart. A brief tells AI what you want to say. A deliverable spec tells AI what you’re actually building. Three questions, in order.

What’s the situation? Why does this piece exist, what prompted it, and where does it live? A newsletter post landing in someone’s inbox at 7am on a Tuesday has a different job than a pillar post someone finds through search six months from now. AI calibrates everything differently depending on context. Give it the context.

What’s the job? Not “inform readers about X.” The single thing this piece needs to accomplish. What should the reader know, believe, or do differently after reading the last sentence? One answer. If you have two answers, you have two pieces.

What does done look like? Format, length, structure, platform. Conversational prose or subheadings. Eight hundred words or two thousand. A pillar article or a LinkedIn post or a cold email. These are not stylistic preferences. They are structural constraints that determine what AI produces. Leave them out and AI guesses. AI guessing format produces something technically complete that fits nowhere in particular.

Three examples of your best work. Not your most popular pieces. The ones that sound most like you at your sharpest. AI reads examples more faithfully than it reads instructions. Instructions tell it what to do. Examples show it what you mean. (Three is the number. Two is usually enough to find a pattern. Four starts producing diminishing returns and occasionally makes the output too rigid to be useful. Three.)

Load all three parts. Then send the brief.
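If you like seeing the load order written down, here is a minimal sketch in Python. Everything named in it is my own illustration, not a real tool or anyone's API: the `DeliverableSpec` class, the `build_session` function, and the section labels are all hypothetical. It only shows the sequence described above. Voiceprint first, then the three spec questions, then three examples, and the brief last.

```python
from dataclasses import dataclass


@dataclass
class DeliverableSpec:
    """Hypothetical container for the three spec questions."""
    situation: str  # why the piece exists and where it lives
    job: str        # the single thing the piece must accomplish
    done: str       # format, length, structure, platform


def build_session(voiceprint: str, spec: DeliverableSpec,
                  examples: list[str], brief: str) -> str:
    """Assemble the session text: Voiceprint, spec, examples, then brief."""
    if len(examples) != 3:
        # The post recommends exactly three examples; this check is an
        # assumption of the sketch, not a rule any AI tool enforces.
        raise ValueError("expected exactly three examples")

    sections = [
        ("VOICEPRINT", voiceprint),          # loaded first, before anything else
        ("SITUATION", spec.situation),
        ("JOB", spec.job),
        ("DONE LOOKS LIKE", spec.done),
    ]
    sections += [(f"EXAMPLE {i}", text) for i, text in enumerate(examples, 1)]
    sections.append(("BRIEF", brief))        # the brief goes last

    # Section labels are illustrative; use whatever headings you prefer.
    return "\n\n".join(f"## {title}\n{body.strip()}" for title, body in sections)
```

Paste the returned text into whatever AI tool you use. The function does no magic. Its only job is to make it hard to send the brief before the rest is loaded.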

What comes back is not a finished piece. It is a translation. Close in places, missed in others, with something lost that only you will notice. That is not a flaw in the process. It’s exactly why Layer 3 exists.

Layer 3: The Reclamation

Something always gets lost in translation.

Not because AI failed. Because every brief is a compression of a bigger vision, and something always stays behind in the squeeze. The draft is close. Close isn’t done.

That’s what this layer is for. Not review. Not quality control. Not “checking AI’s work” like a tired substitute teacher handing back essays she didn’t read. Reclamation. You’re taking back what got lost and making the piece unmistakably, specifically, undeniably yours.

Three questions. In order. No skipping. No “I’ll do it next time.”

Does this sound like me? Not “is this good.” Good is not the test. AI is very good at “good” now, which is precisely the problem, and worth sitting with for a moment before moving on. Read the draft out loud. (Mandatory. Not advisory. Not “when I feel like it.” If it sounds like someone narrating a training video in your living room, it will sound like someone narrating a training video on every screen it lands on. And your readers will leave. Quietly. Efficiently. The way people leave bad parties. Not with confrontation. Just with a sudden discovery that they have somewhere else to be.) Where does it drift? Where did AI smooth the edges that should stay jagged? That’s where you cut and rewrite in your own hand. That’s where you earn the byline.

Did anything die in the translation? Your brief had a specific angle, a sharp one, because you did the work in Layer 1. Does the output execute that angle, or did AI hedge it into something palatably agreeable to a broad hypothetical audience that does not exist and would not subscribe anyway? A thesis that makes everyone comfortable makes no one think. Find where it softened what you meant to say sharp. Say it sharp. The audience that wanted it soft was never going to be your audience.

What’s the line I’d actually use? AI’s phrasing is a first offer. You’re allowed to counter. Every sentence that doesn’t quite land the way you’d land it is a sentence waiting for your version. Your version is almost always better. Not because AI is bad at this. Because nobody writes like you, except you.

Five minutes. That’s all the reclamation takes. I’ve skipped it. I’ve published without it. Somewhere in my back catalog there’s a small collection of posts I’m technically proud of and privately embarrassed by. They sit there like a relative who showed up to Thanksgiving in a novelty tie reeking of Fireball nips he picked up at a gas station and has been working through since the parking lot. You can’t uninvite them. You can only hope nobody looks too closely.

The honest thing to say at the end of this is that the sandwich requires more effort than either extreme. More setup than “let AI do it.” More structure than “write it yourself and suffer through the timeline.” And most of that effort is front-loaded, which means it feels heavy before it feels useful. Which is why most people skip it, and then wonder why the output sounds like it was written by a consultant who learned about creativity at a two-day seminar at a Ramada hosted by a guy named Brad who said "ideate" without irony and meant it.

What the sandwich gives back is time in the places that actually matter. The thinking phase. The angle phase. The “what am I actually trying to say here and does it matter” phase. That is the phase that cannot be outsourced. That is the phase that makes everything downstream worth reading. The machine runs on what you put in. Both ends.

Your fingerprints don’t end up on the piece by accident. You have to put them there twice, deliberately, on purpose. The clanker cannot want the piece to be good. It can only make it plausible. Wanting it to be good is the most important job in the process and it belongs entirely to you. Always has. The AI just made it easier to forget that for a while.

🧉 Which layer do you keep skipping: the input, the configuration, or the second human pass? Drop it in the comments. I’m asking because I care about where you’re stuck. Also because I have a bet with myself and the comments are how I find out if I won.

Crafted with love (and AI),

Nick “Three Inputs and a Prayer” Quick

PS... The sandwich method works better when it's been calibrated. The Ink Sync Workshop is the calibration. Free. Easy to implement. One session and the clanker stops producing that aggressively reasonable prose that sounds almost like you but isn't.

PPS... The subscribe button cannot want things. It can only wait. It has been waiting. This is its whole existence. Don’t leave it like this.