Stop Replacing Random Parts in Your Prompts Like a Drunk Mechanic

The 6 Failure Modes Behind Every Bad AI Output (And Their Specific Fixes)

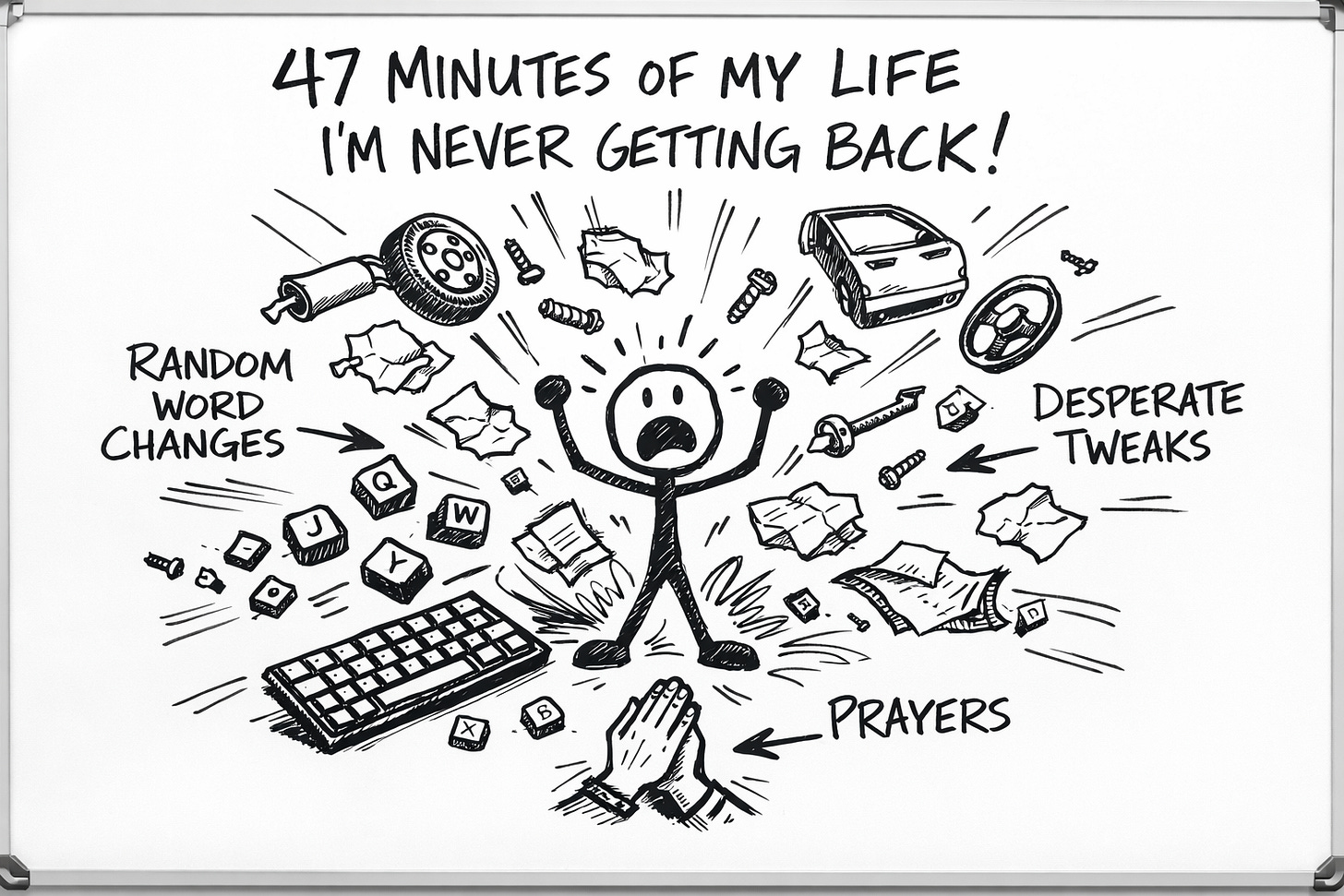

Somewhere right now, a reasonably intelligent person is changing a random word in a prompt and hoping it fixes everything.

It won’t.

They’ll do it again anyway.

This is the state of AI collaboration in 2025. Millions of people, exposed to the most powerful writing tool in human history, using the debugging methodology of a toddler pressing elevator buttons. Press. Wait. Press again. Press harder. Wonder why they’re not on the right floor.

(The elevator, at least, has an excuse. It can only go where the buttons tell it. AI doesn’t have that limitation. AI has the limitation of users who never learned to diagnose.)

I’ve watched this pattern play out hundreds of times. Creators, writers, smart people with actual expertise in their fields—reduced to random word substitution the moment Claude disappoints them.

“Make it more engaging.”

Nothing changes.

“Add more personality.”

Exclamation points appear. Nothing else changes.

“Be more conversational.”

The output adds “Hey there!” to the beginning, which is somehow worse than no personality at all. Like a hostage video where they made the hostage smile.

And then—this is the part that gets me—they conclude that AI “doesn’t work for them.” As if AI were a personality test. As if some people are simply incompatible with language models, the way some people are incompatible with cilantro.

No.

Bad AI output isn’t random. It’s diagnostic.

There are exactly six reasons AI produces garbage. Each one leaves specific evidence. Each one has a specific fix.

Nobody’s “bad at prompting.” They’re just skipping the diagnostic step entirely. Like a mechanic who fixes every car by randomly replacing parts until the noise stops or the customer gives up.

(Don’t go to that mechanic. I feel like I shouldn’t have to say this.)

The Drunk Mechanic Problem

Here’s how most people approach bad AI output:

1. Write prompt
2. Get bad output
3. Change something (anything)
4. Get different bad output
5. Change something else
6. Either get lucky or give up and write it yourself

This is what I call the Drunk Mechanic approach. Something’s wrong with the car. Don’t know what. Don’t care to find out. Just start replacing parts at random and hope the noise stops.

Sometimes it works. (Briefly. By accident.)

Mostly it doesn’t.

Mostly you end up with a pile of replaced parts, an empty wallet, and a car that still makes the noise. Plus now there’s a new noise. Congratulations on your additional noise.

The sober mechanic (and yes, they exist; I’ve met several; they’re delightful) does something radical:

They diagnose first.

They listen to the noise. They ask questions. They narrow down possibilities based on evidence. And then they fix the specific thing that’s actually broken.

Revolutionary concept.

Turns out it works for prompts too.

The Six Failure Modes (A Taxonomy of Disappointment)

After watching hundreds of these little debugging disasters (and I do mean hundreds; I am nothing if not a dedicated observer of human suffering), I’ve identified exactly six reasons AI produces garbage.

Six. That’s the whole list.

Every piece of bad output traces back to one or more of these. Each one leaves fingerprints. Each one has a fix.

This isn’t prompt magic. This isn’t “secrets the AI companies don’t want you to know.” This is just diagnosis. The thing we should have been doing all along instead of mashing buttons like caffeinated primates.

Failure Mode 1: You Said What But Forgot the How

The Symptom: Output reads like it was written for “general audiences.” Which is a polite way of saying it was written for no one. It’s about your topic the way a Wikipedia article is about your topic. Technically correct. Emotionally bankrupt. The content equivalent of office carpet. You stare at it forty hours a week. You could not describe it if your job depended on it.

The Evidence: Look at the output. Is it generic? Could literally anyone have asked for this exact thing and gotten this exact response? If you swapped your name for any other name, would anyone notice?

Congratulations. You’ve discovered the statistical mean. That’s what AI defaults to when you don’t tell it to do something more specific. It averages everything it knows about your topic into one convergent blob of adequacy.

What Went Wrong: You told AI what to write. You didn’t tell it how to write it.

This is like ordering “food” at a restaurant and being surprised when the waiter brings you a bowl of plain rice. You got food. Technically. Is it dinner? By the loosest possible definition. Did anyone enjoy it? Only people who have completely given up on joy.

The Fix: Add structural and voice requirements.

Before:

“Write about prompt debugging.”

This prompt is a war crime against communication. It tells AI nothing except a topic. Everything else—structure, tone, approach, level of detail, whether jokes are allowed—AI is guessing. And AI’s guesses converge toward the mean, because that’s literally how the math works.

After:

“Write about prompt debugging. Open with a specific moment of frustration. Structure it around distinct failure modes, each with a symptom and a fix. Conversational but substantive tone, like you’re explaining this to a smart friend who’s ready to throw their laptop out a window. Include at least one absurd comparison.”

Now AI has instructions. Structure. Tone. Intent. An understanding that laptop defenestration is emotionally relevant to the topic.

It can still screw up. But at least it’s screwing up in the right direction.

Your diagnostic question: “Did I tell AI what to write, or did I tell it what and how and for whom and why?”
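If you assemble prompts in code, the same fix can be sketched as a small helper that refuses to send a bare topic. This is a minimal sketch in Python, and everything in it is an assumption for illustration: `build_prompt` and its parameters (`opening`, `structure`, `tone`, `extras`) are made-up names, not part of any library. All it does is join strings.

```python
def build_prompt(topic, opening="", structure="", tone="", extras=()):
    """Assemble a prompt that says what to write AND how to write it.

    Hypothetical helper: the parameter names are illustrative only.
    """
    # Keep only the "how" instructions that were actually filled in.
    how_parts = [
        part.strip()
        for part in (opening, structure, tone, *extras)
        if part.strip()
    ]
    if not how_parts:
        # A topic with no "how" is the Failure Mode 1 prompt.
        raise ValueError("Prompt names a topic but gives no structure, tone, or voice.")
    return " ".join([f"Write about {topic}."] + how_parts)


prompt = build_prompt(
    "prompt debugging",
    opening="Open with a specific moment of frustration.",
    structure="Structure it around distinct failure modes, each with a symptom and a fix.",
    tone="Conversational but substantive tone, like you're explaining this to a smart friend.",
    extras=["Include at least one absurd comparison."],
)
print(prompt)
```

Calling `build_prompt("prompt debugging")` with nothing else raises an error, which is the point: the helper makes the missing "how" impossible to overlook.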

Failure Mode 2: You Asked AI to Channel a Ghost

The Symptom: The output is competent. Grammar? Flawless. Structure? Sound. Information? Accurate enough. But it reads like a very polite robot wrote it. Which is exactly what happened. You’re getting “helpful AI assistant voice,” which is the default when you don’t give it anything better to imitate.

(Helpful AI assistant voice is to writing what a ringtone is to music. Functional. Forgettable. Nobody’s crying in the shower to it.)

The Evidence: Read it out loud. Does it sound like a person with opinions and quirks and a specific way of seeing the world? Or does it sound like what a person sounds like when they’re trying very hard not to get fired from customer service?

What Went Wrong: You told AI to write in “your voice” without ever showing it what your voice looks like.

The Fix: Give it a voice anchor.

Add to your prompt:

“Match the voice and style of this example: [paste 200-300 words of your actual writing]”

That’s it. That’s the fix.

AI is remarkably good at pattern matching when you give it patterns to match. Without an anchor, it defaults to Helpful Robot. With an anchor, it has something specific to imitate.

This is the difference between telling a portrait artist “paint someone who looks like me” versus handing them a photograph. One produces a generic face with the right hair color. The other produces you.

Your diagnostic question: “Did I show AI how I write, or did I just tell it to write like me and hope for telepathy?”
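For anyone who builds prompts programmatically, the voice anchor can be sketched as one small function. This is an illustration under assumptions, not a standard: `add_voice_anchor` is a hypothetical name, and rejecting samples outside the 200-300 word range is my own strictness layered on top of the guideline above.

```python
def add_voice_anchor(prompt, sample, min_words=200, max_words=300):
    """Append a writing sample for the model to imitate.

    Hypothetical helper. The 200-300 word default follows the article;
    raising an error outside that range is an assumption of this sketch.
    """
    word_count = len(sample.split())
    if not min_words <= word_count <= max_words:
        raise ValueError(
            f"Sample is {word_count} words; aim for {min_words}-{max_words}."
        )
    return f"{prompt}\n\nMatch the voice and style of this example:\n{sample}"


# Stand-in for 250 words of your actual writing.
sample = " ".join(["word"] * 250)
anchored = add_voice_anchor("Write about prompt debugging.", sample)
print(anchored.splitlines()[2])  # Match the voice and style of this example:
```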

Failure Mode 3: You’re Playing Defense When Offense Is Required

The Symptom: Output keeps doing the thing you specifically said not to do. “Don’t be formal” produces something still weirdly formal. “Don’t use jargon” produces jargon everywhere. It’s like the output read your instructions, understood them completely, and then did the opposite out of spite.

(AI doesn’t have spite. Probably. But some days I have my doubts.)

The Evidence: Count how many times your prompt uses “don’t,” “avoid,” “no,” or “never.” If it’s more than once or twice, you’ve found your problem.

What Went Wrong: You gave negative instructions instead of positive ones.

Try this: Navigate to the grocery store, but don’t turn left on Main Street, don’t take the highway, and don’t go past the church.

How’s that working for you? You know where not to go. You have no idea where to go. You’re standing still, paralyzed by a map made entirely of restrictions.

That’s what negative prompting does to AI. “Don’t be formal” names the one thing you want gone and offers nothing to put in its place. The model still has to pick a register, and with no target to aim at, it tends to drift back to its default. That’s why you get formal output even when you specifically requested non-formal output.

The Fix: Positive instructions beat negative ones. Almost always.

Before:

“Don’t be formal. Don’t use jargon. Don’t start with a boring hook.”

This tells AI what to avoid. It doesn’t tell AI what to do instead. AI is now playing a game of elimination across infinite possibilities. You’ve handed it a map of landmines and said “get to the destination.” Which destination? You didn’t say. Just avoid the mines.

After:

“Write like you’re texting a smart friend who doesn’t work in your industry. Plain English only—simpler word always wins. Start mid-action, drop us into a scene, no throat-clearing.”

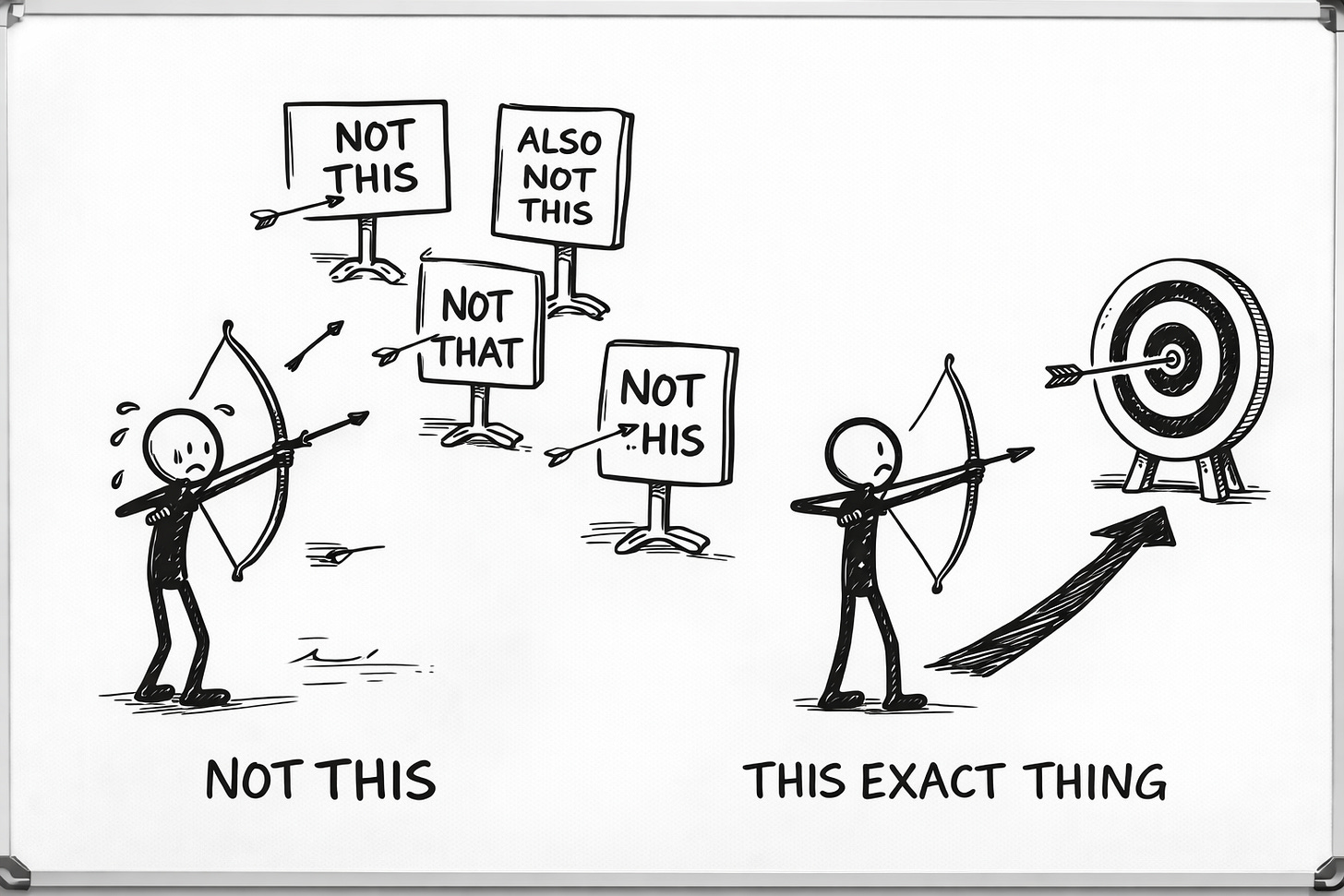

Now AI knows what to move toward. Much easier to hit a target when you can see the target.

Your diagnostic question: “Did I tell AI what I want, or just what I’m trying to avoid?”
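The evidence check for this mode (count the "don't," "avoid," "no," and "never" in your prompt) is easy to automate. Here is a minimal Python sketch. The function name is made up, and the matching is deliberately crude: it counts words, not intent, so a harmless "no one" will also register.

```python
import re

# The four words the article says to count. Both curly and straight
# apostrophes are accepted in "don't".
NEGATIVE_PATTERN = re.compile(r"\b(?:don[’']t|avoid|no|never)\b", re.IGNORECASE)


def count_negative_instructions(prompt):
    """Return how many times the prompt uses don't / avoid / no / never."""
    return len(NEGATIVE_PATTERN.findall(prompt))


before = "Don’t be formal. Don’t use jargon. Don’t start with a boring hook."
after = (
    "Write like you’re texting a smart friend who doesn’t work in your industry. "
    "Plain English only. Start mid-action, drop us into a scene, no throat-clearing."
)
print(count_negative_instructions(before))  # 3
print(count_negative_instructions(after))   # 1
```

The "after" prompt still scores 1 because of "no throat-clearing," which sits inside the "once or twice" allowance from the evidence step.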

Failure Mode 4: You Forgot to Mention Scope (And Now You Have a Dissertation)

The Symptom: You wanted a quick explanation and got a doctoral thesis. Or you wanted depth and got a fortune cookie with delusions of grandeur. The length is wrong. The detail level is wrong. Everything is technically correct but practically useless.

The Evidence: Compare what you needed to what you got. Is it four times too long? Is it embarrassingly shallow? Did you ask for directions to the corner store and receive a history of urban planning?

What Went Wrong: You didn’t specify scope. AI defaulted to “comprehensive and thorough” because that’s what most people want most of the time. Except when they don’t. Which is approximately half the time.

(This is the problem with defaults. They’re right often enough to feel reasonable. They’re wrong often enough to ruin your afternoon.)

The Fix: Be explicit about length and depth.

Add to your prompt:

“500-700 words maximum. Overview level only—no edge cases, no exceptions, no caveats. Assume the reader needs the essentials, not the encyclopedia.”

Or:

“2,000+ words. Go deep. Assume the reader knows the basics and wants nuance, second-order effects, and specific scenarios where things get weird.”

AI will follow these instructions. It just needs you to give them. It’s not psychic. (Yet. Probably. Let’s revisit this in 2027.)

Your diagnostic question: “Did I tell AI how long and how deep?”
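Length is the one part of scope you can measure mechanically. A rough sketch in Python, assuming you already have the draft as a string: `check_scope` is a hypothetical helper that compares word count to the range you asked for. It says nothing about depth, which still needs a human read.

```python
def check_scope(output, min_words, max_words):
    """Compare a draft's word count to the range you asked for."""
    words = len(output.split())
    if words < min_words:
        return f"too short: {words} words, wanted at least {min_words}"
    if words > max_words:
        return f"too long: {words} words, wanted at most {max_words}"
    return f"in range: {words} words"


# Stand-in for a draft that came back four times too long.
draft = " ".join(["word"] * 2800)
print(check_scope(draft, 500, 700))  # too long: 2800 words, wanted at most 700
```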

Failure Mode 5: You Know Things AI Doesn’t

The Symptom: The output is well-written but aimed at the wrong target. It’s solving a problem you didn’t have. It’s persuading people who don’t need persuading. It’s answering a question nobody asked with impressive confidence.

This is the most frustrating failure mode because the writing is good. Technically excellent. Just excellent at the wrong thing. Like showing up to a job interview in a wedding dress. Impeccable outfit. Completely wrong context. Everyone’s uncomfortable now.

The Evidence: Read the output and ask: “Who is this for?” If the answer is “someone, somewhere, probably,” you’ve found your problem.

What Went Wrong: AI doesn’t know your audience. Your positioning. Your angle. The specific context that makes this piece necessary. It’s filling in blanks with statistical averages, and those averages don’t match your specifics.

You know things AI doesn’t know. You just didn’t tell it.

The Fix: Share your reasoning.

Add to your prompt:

“Context: My audience is skeptical of AI. They’ve tried generic prompt libraries and gotten garbage. They value craft and methodology over shortcuts. This piece needs to validate their skepticism while showing them a different path. They’ll roll their eyes at hype. They’ll lean in for substance.”

Now AI knows not just what to write but who it’s for and why it matters. That context changes everything. Every word choice. Every example. Every bit of tone.

Your diagnostic question: “Does AI know what I know about my audience, my positioning, and why this piece exists in the world?”

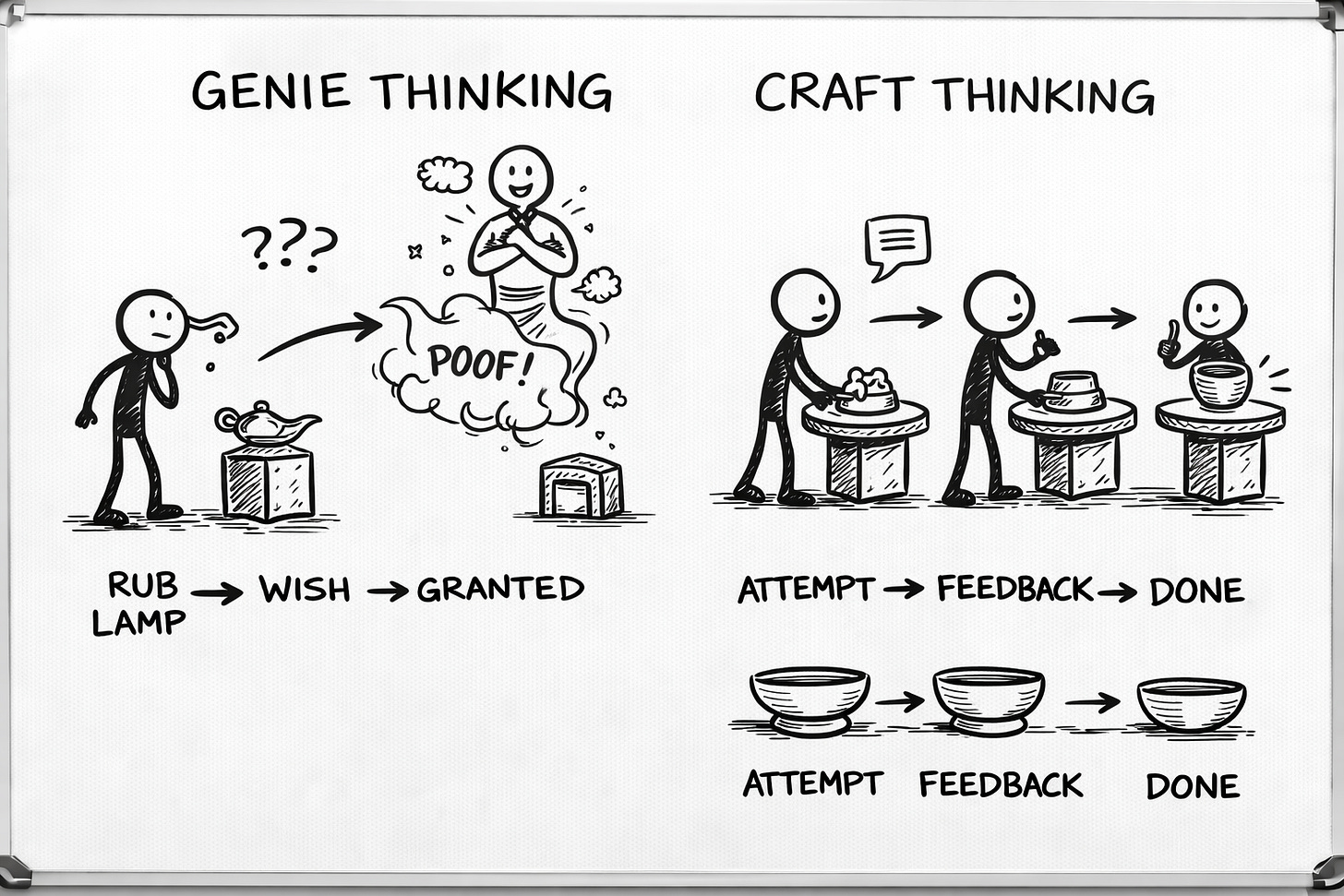

Failure Mode 6: You Expected a Miracle and Got a Process

The Symptom: First draft was bad. So you closed the tab and wrote it yourself.

The Evidence: Look at your chat history. Is there one prompt and one output, followed by silence and resentment? Welcome to the club. We meet on Thursdays. There are snacks. (The snacks are disappointing.)

What Went Wrong: You expected one-shot perfection from a process that requires iteration.

This is the failure mode that kills more AI collaboration than all the others combined. People get bad output, declare “AI doesn’t work for me,” and return to doing everything manually. Which is fine. Manual is fine. But the conclusion is wrong.

AI didn’t fail. The expectation failed.

Nobody writes a first draft and calls it done. Nobody plays a song once and releases the album. Nobody throws clay on a wheel and walks away with a vase. The first attempt is raw material. What you do next is the craft.

(We wanted a genie. We got a collaborator. The genie would have been faster. The collaborator is actually real.)

The Fix: Use specific feedback prompts.

Don’t start over. Don’t rage-quit. Don’t decide you’re “bad at AI.” Give directions.

“That’s too formal in the first half. Rewrite the opening three paragraphs more casually, like you’re slightly annoyed at conventional wisdom.”

“Good structure, wrong tone. Keep the skeleton, make the language punchier. Shorter sentences. More fragments.”

“The opening is weak. Give me 5 alternatives that start mid-action instead of with context.”

This is what collaboration actually looks like. First attempt is rough stone. Feedback is the chisel. What emerges is what you make together, not what the machine dispenses.

Your diagnostic question: “Did I give AI specific feedback on what to change, or did I just reject the whole output and walk away?”
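In code, "don't start over" means keeping the conversation and appending to it. The sketch below assumes the list-of-`role`/`content`-dicts shape that many chat APIs use; the exact format depends on the API you call, and `add_feedback` is a hypothetical helper that sends nothing anywhere. It only builds the history you would send next.

```python
def add_feedback(conversation, draft, feedback):
    """Return a new conversation with the draft and your feedback appended.

    `conversation` is a list of {"role", "content"} dicts. This shape is an
    assumption; check the message format of the API you actually use.
    """
    if not feedback.strip():
        raise ValueError("Feedback must say what to change.")
    return conversation + [
        {"role": "assistant", "content": draft},
        {"role": "user", "content": feedback},
    ]


conversation = [{"role": "user", "content": "Write about prompt debugging."}]
conversation = add_feedback(
    conversation,
    draft="(first draft from the model goes here)",
    feedback="Good structure, wrong tone. Keep the skeleton, make the language punchier.",
)
print(len(conversation))  # 3
```

The original prompt and the rejected draft both stay in the history, so the model can see what "keep the skeleton" refers to.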

The Diagnostic Protocol (For Humans Who Are Done Gambling)

Next time you get bad output, don’t panic. Don’t start randomly replacing parts. Don’t throw your laptop into traffic.

Diagnose.

Step 1: Look at the evidence. What specifically is wrong? Name it.

Step 2: Match it to a failure mode:

Generic? → Vague instructions (Mode 1)

Robotic? → No voice anchor (Mode 2)

Keeps doing what you said not to? → Negative instructions (Mode 3)

Wrong length/depth? → Scope missing (Mode 4)

Wrong target? → Missing context (Mode 5)

Just needs refinement? → Iterate with feedback (Mode 6)

Step 3: Apply the specific fix for that mode

Step 4: Still broken? Check for a second failure mode. They stack. (Because of course they do. Nothing is ever just one thing. That would be too convenient, and the universe doesn’t do convenient.)

Step 5: Save what works. When you successfully debug a prompt, you’ve created a template. Document it. Reuse it. Don’t make yourself solve the same problem twice. Life is too short and there are too many awesome Netflix series to catch up on.
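Steps 2 through 4 amount to a lookup table, so here is the protocol as a short Python sketch. The symptom labels and the `diagnose` function are my own shorthand for the six modes above, not an established tool. Naming the symptom in Step 1 is still your job.

```python
# symptom -> (mode number, cause, fix), paraphrased from the six modes.
FAILURE_MODES = {
    "generic": (1, "Vague instructions", "Add structural and voice requirements."),
    "robotic": (2, "No voice anchor", "Paste 200-300 words of your own writing as an example."),
    "ignores restrictions": (3, "Negative instructions", "Rewrite each restriction as a positive instruction."),
    "wrong length or depth": (4, "Scope missing", "State the length and depth you want."),
    "wrong target": (5, "Missing context", "Describe your audience and why the piece exists."),
    "needs refinement": (6, "Iterate with feedback", "Give specific feedback on what to change."),
}


def diagnose(symptoms):
    """Map observed symptoms to failure modes, in mode order. Modes can stack."""
    unknown = [s for s in symptoms if s not in FAILURE_MODES]
    if unknown:
        raise KeyError(f"Unrecognized symptoms: {unknown}")
    # set() drops duplicate symptoms; sorting orders results by mode number.
    return sorted(FAILURE_MODES[s] for s in set(symptoms))


for number, cause, fix in diagnose(["robotic", "generic"]):
    print(f"Mode {number}: {cause}. Fix: {fix}")
```

Passing two symptoms returns two modes, which is Step 4 in practice: fix the first, rerun, then fix the second.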

Why Any of This Matters

You could read this as “tips for better prompting.”

But that misses the point.

This is about having a process for the inevitable moments when collaboration produces garbage. And it will produce garbage. Regularly. That’s not failure. That’s just how iteration works. The sculptor doesn’t blame the marble when the first strike isn’t perfect. The sculptor makes another strike.

The difference between people who make AI collaboration work and people who give up isn’t talent or luck or some mystical prompting ability that you either have or don’t.

It’s diagnostic methodology.

It’s knowing how to read the evidence, identify the failure mode, and apply the specific fix.

It’s being the sober mechanic instead of the drunk one.

The goal was never one-shot perfection. The goal is a reliable path from bad first draft to finished piece. That path runs through diagnosis.

Now go diagnose something.

What’s the failure mode you hit most often? Drop it in the comments. I’ll tell you which fix to try.

Crafted with love (and AI),

Nick “Recovering Button Masher” Quick

PS: Official schedule: Sundays and Wednesdays. Unofficial schedule: Most days, honestly, because I’m neck-deep in building the foundational methodology for AI collaboration and apparently I have no hobbies. (Butters would disagree. Butters thinks walks are a hobby. Butters is wrong.) Subscribe if you want craft over hacks, methodology over magic, and the occasional chihuahua cameo.

This is great mate! I especially love (Your diagnostic question: “Did I tell AI what to write, or did I tell it what and how and for whom and why?”).

One thing I would add is guiding the AI to rid itself of confirmation bias. Many of the outputs people get say, "Great perspective! Nice _____!" And what many of us really need is a way to gain a deeper perspective that challenges and criticizes (perhaps even constructively criticizes) our point of view. Like a "Help me get out of my own silo of thinking by giving me perspectives of people who say the opposite of conventional wisdom."

With the power of better prompting you've provided in this article, I think AI might give humanity a chance to break loose of echo chambers and all the blinkered skepticism that comes with them.