I've Been Lying by Omission

The three questions that make AI writing coaches change the subject.

Here’s a weird thing about being one of the few people writing about AI collaboration:

You get to decide what exists.

If I don’t write about something, it mostly doesn’t get written about. Which sounds like power until you realize it’s also permission. Permission to avoid. Permission to leave certain shelves empty and hope nobody notices the gaps.

There are three shelves I’ve been leaving empty.

Three questions I could be examining but haven’t. Not because they’re not important. Because they might be too important. Because I don’t have clean answers. Because examining them might crack something I’ve been carefully building.

That permission ran out.

The Quiet Agreement

Every industry has its unspoken rules about what you don’t discuss in public.

Politics at Thanksgiving. Religion at work. The fact that most productivity advice is written by people whose primary productivity achievement is writing productivity advice.

AI writing has its own sacred silences.

We’ve matured past the existential panic phase. The “will AI replace writers” discourse has mercifully crawled into a ditch somewhere to die, and good riddance. We’ve settled into an uneasy productivity peace. Tools are useful. Collaboration is possible. The slop factories are someone else’s problem.

But there are questions hovering at the edges of every conversation about AI collaboration. Questions that get deflected so consistently it can’t be coincidence.

The deflection isn’t conspiracy. (Conspiracy requires competence and coordination, neither of which this industry has demonstrated.) It’s collective self-preservation.

These questions don’t get asked because the answers might be bad.

And if the answers are bad, the current party stops. The music cuts out. Someone turns on the overhead lights and suddenly everyone can see what the room actually looks like at 2am.

The industry has silently agreed: don’t poke the foundations while we’re all standing on them.

But here’s what I’ve learned about foundations you’re afraid to examine: they don’t become stable just because you’ve stopped looking. They become more dangerous. Because now you’re building on assumptions you’ve never tested, adding weight to a structure you’ve never inspected, and calling it confidence.

The first question is the one that makes lawyers twitch.

Conversation One: Who Owns This?

You write a piece with AI.

Structure: AI’s suggestion.

Several turns of phrase: AI’s contribution.

The core idea: yours. (Probably. Mostly. You’re pretty sure it was yours originally but the more you think about it the murkier it gets.)

The execution: collaborative.

Who owns it?

“Mine,” you say.

Because you need it to be. Because your entire content operation depends on it being yours. Because you’ve spent two years building an audience on the strength of your ideas, and finding out those ideas have a co-parent with no birth certificate would complicate your custody arrangement.

But where’s the line?

If AI contributed 30%, is it yours? 50%? 70%? Is there a percentage at which the ghost becomes the author and you become the typist? Is intellectual property law equipped to handle the question of who owns something when the “who” is increasingly a “what”?

You don’t know.

Neither do I.

Neither does anyone.

(Somewhere, a copyright lawyer is reading this and either preparing a very expensive opinion or quietly closing the tab and pretending they never saw it.)

That’s the problem.

The legal frameworks haven’t caught up. The industry hasn’t established norms. Everyone’s betting this gets sorted out “later”… by courts, by precedent, by someone else who isn’t them and isn’t now.

Meanwhile, we’re all claiming ownership of work where the ownership is genuinely ambiguous. Writing “by [Your Name]” on pieces that were co-created by a process we don’t fully understand, governed by rights we haven’t fully established, protected by laws that don’t yet exist.

Why nobody wants to establish precedent: because precedent goes both ways.

The AI companies don’t want ownership questions examined. (Liability. Their entire business model is built on the legal assumption that outputs belong to users, and if that assumption cracks, so does the valuation.)

The creators don’t want it examined. (Might lose rights they assumed they had. Nobody wants to discover their content library belongs to a probability distribution.)

The platforms don’t want it examined. (Content moderation is hard enough without adding “but who actually wrote this” to the sorting criteria.)

Everyone’s incentives align toward ambiguity. Which means nobody’s going to poke this. The first person who does becomes the test case, and nobody wants to be the test case.

But ambiguity isn’t stability. It’s a dam holding back questions that will eventually break through. And when they do, you don’t want to be the person who never thought about where they stood.

Your examination prompt: Where’s your line? At what point would you feel uncomfortable claiming full authorship? Write that down. Not for anyone else. For you. So when the questions get loud, you’ve already thought about it.

(Mine is roughly 60% AI contribution. Beyond that, I start feeling like a cover band taking credit for the original.)

Conversation Two: The Competence Illusion

AI makes everyone sound smarter on the page.

That’s the feature. That’s what we’re all selling. That’s why the courses exist and the newsletters get opened and people pay actual money to learn how to co-write with a machine that doesn’t understand a single word it produces.

Here’s the bug: some of them aren’t smarter. They just have better tools.

Before AI, writing quality was a rough, imperfect signal of thinking quality. The page revealed the mind behind it. You could fake it to some degree—everyone can—but sustained coherence over thousands of words was hard to manufacture without the underlying architecture.

After AI, that correlation is dissolving.

Someone can now produce articulate, well-structured content without being able to articulate or structure their own thoughts.

(Imagine a world where you could hire a body double for your gym sessions and still get the muscles. That’s what we’ve built, except instead of muscles it’s credibility, and instead of a body double it’s autocomplete with ambition.)

I’m not sure I can tell the difference anymore.

And I teach this stuff. I’m supposed to be the person who can spot the seams. And increasingly, I can’t. The competent and the assisted have merged into a single category called “publishable,” and the distinction between “thought about this deeply” and “prompted this effectively” has collapsed.

The client whose emails suddenly became articulate. (AI assist, not actual growth. Their live communication is still a word salad with croutons of coherence.)

The thought leader whose podcast reveals depths their writing never hinted at... in the wrong direction. (They sound smarter in text than on air. The opposite of what you’d expect from someone who actually thinks at the level their writing suggests.)

The expert who sounds expert on the page and confused in conversation. (I interviewed one last month. It was like meeting an author and discovering they hadn’t read their own book.)

We’re building a credentialing system on assisted competence.

The certificates look the same. The capabilities don’t.

(Here’s where it gets uncomfortable for people like me: I can’t guarantee my students are actually learning to think better. I can only verify they’re producing better outputs. Which might be the same thing. Or might be a very expensive way to learn how to hide behind better tools. I genuinely don’t know.)

Your examination prompt: Could you produce 80% of your current output quality without AI? If you had to write something important with no AI access, would you know how? Be honest. The distance between “yes” and the truth is the gap you need to close.

(I test myself on this quarterly. Last quarter I scored myself at 70%. Which is either good because I still have something, or terrifying because 30% of my capability is borrowed. The jury is still out, and I am the jury, and I am compromised.)

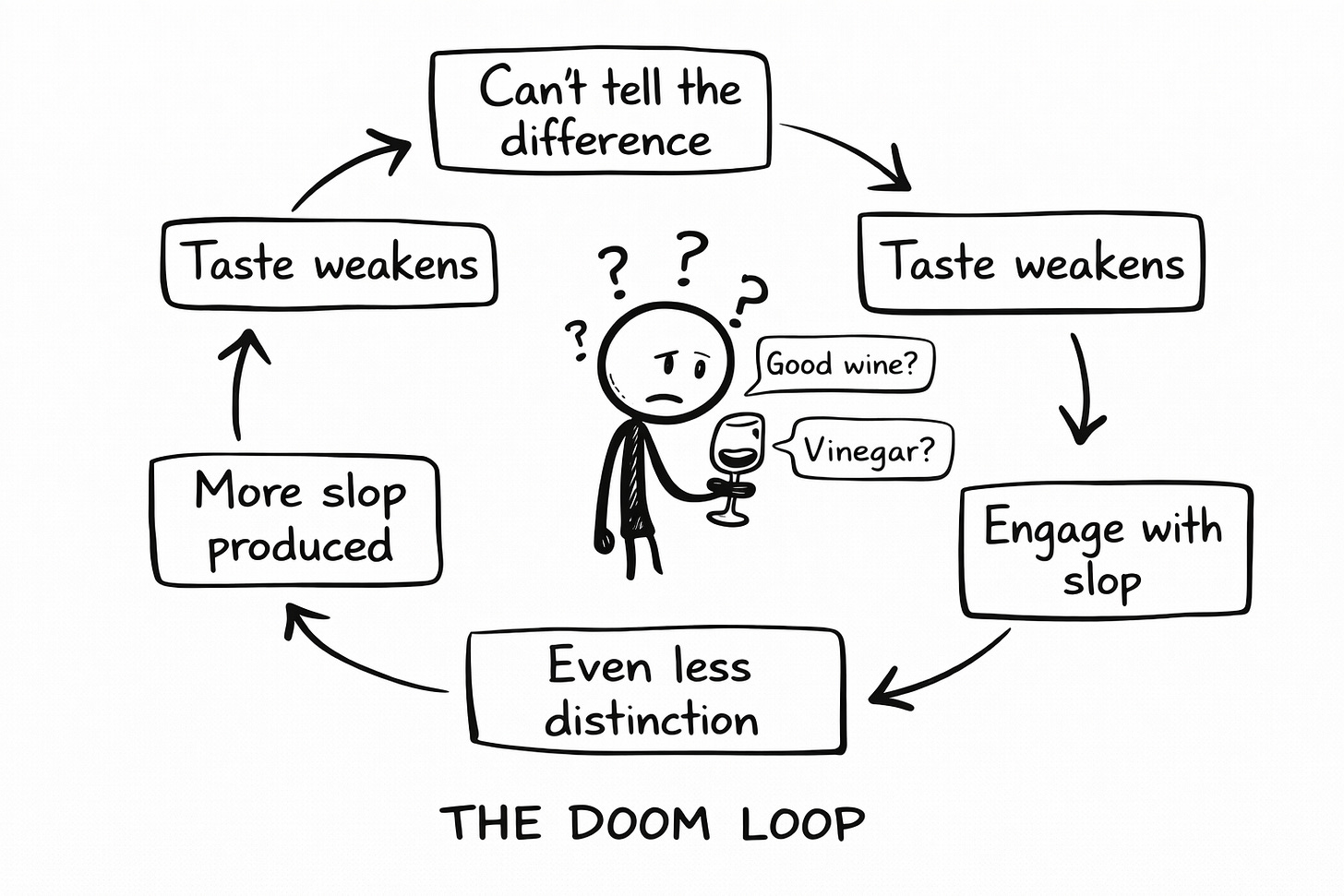

Conversation Three: The Taste Collapse

Here’s a doom loop I’ve been avoiding putting into type.

(I’m going to type it anyway, because in for a penny, in for a career-limiting admission.)

If readers can’t distinguish AI slop from human craft...

Their taste for distinction atrophies.

They reward slop with engagement.

More slop gets produced.

More taste atrophies.

Repeat until the entire ecosystem has no mechanism for recognizing quality.

Is this already happening?

I think so.

And I think AI collaboration—even good AI collaboration, the kind I teach, the kind I’ve built a business around—might be accelerating it.

(This is the part where I’m supposed to exempt myself. To say “but the way I do it is different.” I’m not going to do that. Because I’m not sure it’s true. And the honest answer to an uncertain question is uncertainty, not marketing copy.)

The disturbing possibility: every time we produce content that reads like good human writing but isn’t fully human, we’re training audiences to not need the fully human version.

We’re adjusting the baseline.

We’re teaching people that this level of polish, this kind of structure, this type of confident-but-accessible tone is normal. Expected. The floor.

And the floor keeps rising.

Until “human” and “assisted” are indistinguishable. Until the ability to tell the difference has atrophied from disuse. Until taste is a memory and quality is a rumor and we’re all just producing content-shaped objects for content-shaped audiences in a content-shaped hellscape where nothing means anything and everything is optimized for engagement.

The future arrived on schedule. We just forgot to read the itinerary.

Even when we’re fighting the slop, we might be contributing to the conditions that make slop indistinguishable from craft.

The irony isn’t lost on me.

Neither is the necessity.

I write with AI assistance because I have to. Because the volume demands of modern content creation don’t accommodate purists. Because the choice isn’t “AI or no AI”—it’s “conscious AI or unconscious AI,” and I’ve chosen conscious.

But conscious participation in a system that might be eating itself is still participation.

And every newsletter I publish might be teaching someone’s taste buds to stop noticing the difference between a meal and a meal-replacement shake.

So here we are.

Your examination prompt: When’s the last time a piece of writing genuinely delighted you? Not impressed you. Not made you nod along professionally. Delighted you. Surprised you. Made you stop and reread because the way someone said something was so distinctly, unmistakably, irreducibly human.

If you can’t remember, your taste might be flattening.

Find a writer whose work could only have come from them. Someone distinctly human. Someone you’ve neglected.

Read something by them. Today.

Not to imitate. To remember what you’re trying to preserve.

Why Examine Anyway?

I don’t have clean answers to any of these questions.

That’s not the point.

(Clean answers are for people who haven’t thought hard enough about the questions. The universe doesn’t resolve neatly. Anyone who tells you otherwise is selling certainty, which is the most expensive thing you can buy and the least useful thing you can own.)

The point is: questions you avoid don’t become less real.

They become more powerful.

Because they’re shaping your behavior without your awareness. Influencing your choices without your consent. Building the foundations of your decisions while you’re busy looking at the décor.

Examination isn’t pessimism. It’s due diligence.

Creators who think about these questions will make different choices than creators who don’t.

Different choices about what they claim as their own. Different choices about what competence they’re actually building. Different choices about their role in the taste ecosystem.

You can be a conscious participant in an uncertain system, or an unconscious one.

Conscious participation means sitting with ambiguity rather than pretending it’s been resolved. It means building something you can defend, not just something you can publish. It means looking at the foundations even when the view is unpleasant.

Optimists build castles. Pessimists predict floods. The useful ones check the foundation before picking curtains.

The Quick Examination

If you want to start in 60 seconds:

1. Write your ownership position in one sentence. Where’s your line?

2. Name one capability gap between your AI outputs and your actual thinking ability. Be honest. Nobody’s watching.

3. Name one distinctly human writer you’ve neglected. Open something by them today.

That’s examination. It takes a minute. It changes more than you’d think.

Three conversations. No resolutions.

I’ve been leaving these shelves empty because filling them felt dangerous. Because the questions don’t have clean answers. Because examining them might crack something I’ve been carefully building.

But empty shelves don’t stay hidden forever. Eventually someone notices the gaps. Better to fill them yourself, imperfectly, than to have someone else point out what you’ve been avoiding.

The shelves aren’t empty anymore.

The questions are out.

What you do with them is up to you.

(And now, if you’ll excuse me, I need to go sit with the fact that I wrote this with AI assistance. Three thousand words about the problems of AI collaboration, co-written with AI, published to an audience that might not be able to tell the difference.

The irony isn’t lost on me.

Neither is the necessity.

Neither, I suspect, is the point.)

The creators who will thrive aren’t the ones who avoided these questions longest. They’re the ones who examined them first—and kept showing up anyway.

Discussion Thread: Which of these three conversations do you find yourself avoiding? What would change if you stopped?

Crafted with love (and AI),

Nick “Shelf Stocker” Quick

PS… Something broke loose lately. I’m publishing daily—not because I should, but because I can’t seem to stop. Won’t last forever. Nothing does. Get in while the getting’s weird.

I exhaled reading your piece, because these questions have been stewing in my head too and nobody seems to be talking about them. It feels almost punishable to raise questions about the growth that AI is bringing. Very few people are able to notice where it’s poking holes. I came to your page because someone wrote that you have distinct visuals in your writing, which you do. But I’m taking away with me your courageous take on the AI collapse.