If ChatGPT Agrees With You, Rewrite It

Because consensus is a warning sign now

7.5 million blog posts went live today.

Tomorrow, another 7.5 million. Wednesday, same thing. And readers? They’ll spend 52 seconds on each one. If they click at all. Which they mostly won’t.

Most of those posts will say reasonable things. Balanced things. The kind of takes that make you nod slightly and forget immediately. Content that exists the way elevator music exists. Technically present. Aggressively forgettable.

I almost added to that pile last week.

I’d been working on a piece about why most AI prompts fail. Felt good about it. Clear argument, solid structure, practical advice. So I did something I’ve started doing with every draft: I asked ChatGPT to gut-check the core thesis.

“Does this sound like a reasonable, balanced take?”

“Yes! This is a well-balanced and thoughtful perspective on—”

Deleted. Three paragraphs. Gone before the AI finished its sentence.

The argument I killed? “AI tools work best when you give them clear context about your audience and goals.”

True. Also useless. The content equivalent of “drink more water.” Accurate advice that changes nothing because everyone’s already heard it fourteen thousand times and they’re still dehydrated.

What I replaced it with: “Most AI prompts fail because people describe what they want to sound like instead of documenting how they actually write.”

ChatGPT’s response to that one: “Some might find this reductive, as there are legitimate reasons to...”

Published it. Highest engagement that month.

(Turns out “some might find this reductive” is AI-speak for “you’re onto something and I’m uncomfortable.”)

Consensus used to mean you were onto something. Now it means you’re invisible.

Here’s the system I run on everything before I hit publish. Five tests that catch the blandness before my readers do. Or, more accurately, before my readers just... leave.

Test 1: The AI Agreement Test

AI models are probability machines. They output what’s most likely based on training data. Which means they output consensus. The average. The safe.

When your idea gets enthusiastic agreement from AI, you’ve written something probable.

Probable means average. Average means invisible. Invisible means you just mass-produced something nobody asked for. Congrats.

The Test:

Before publishing, copy your core argument. Paste it into ChatGPT with this prompt:

“Does this sound like a reasonable, balanced take: [YOUR ARGUMENT]”

Watch what happens.

If AI says “Yes, this is well-balanced and reasonable”—you’re standing in the fat middle of the bell curve with everyone else. Your take is IKEA furniture. It fills space without anyone noticing it. It’s the content equivalent of a hotel room painting. Inoffensive. Forgettable. Chosen specifically because nobody would have an opinion about it.

If AI hedges. If it says “Some might argue...” or “This is a controversial position because...”

You’ve found an edge.
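If you run this test on every draft, the sorting step can be scripted. Here is a minimal Python sketch, not a definitive implementation. The phrase lists are assumptions lifted from the replies quoted in this post, and `build_prompt` and `classify_reply` are hypothetical helper names. You still paste the prompt into ChatGPT yourself and feed the reply back in.

```python
# Hypothetical helpers. The marker phrases are assumptions taken from
# the example replies quoted in this post, not an exhaustive list.
HEDGE_MARKERS = (
    "some might",
    "controversial",
    "while valid",
    "might overlook",
    "reductive",
)
AGREEMENT_MARKERS = (
    "well-balanced",
    "reasonable",
    "excellent advice",
    "thoughtful perspective",
)

PROMPT_TEMPLATE = "Does this sound like a reasonable, balanced take: {argument}"


def build_prompt(argument: str) -> str:
    """Wrap the core argument in the agreement-test prompt."""
    return PROMPT_TEMPLATE.format(argument=argument.strip())


def classify_reply(reply: str) -> str:
    """Label a reply as 'edge', 'consensus', or 'unclear'.

    Hedges are checked first, because a hedging reply can still
    contain polite words like 'reasonable'.
    """
    text = reply.lower()
    if any(marker in text for marker in HEDGE_MARKERS):
        return "edge"
    if any(marker in text for marker in AGREEMENT_MARKERS):
        return "consensus"
    return "unclear"
```

Keyword matching is crude. Treat "unclear" as a cue to read the reply yourself rather than as a verdict.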

My Before/After:

The original take I almost published:

“AI won’t replace writers, but writers who use AI effectively will have an advantage over those who don’t.”

ChatGPT’s verdict: “This is excellent advice that balances...”

Deleted. (Into the sun, preferably.)

The replacement:

“The ‘AI won’t replace you’ crowd is coping. The ‘AI will replace everyone’ crowd is catastrophizing. The truth is weirder: AI will replace the version of you that writes like everyone else.”

ChatGPT’s verdict: “This perspective, while valid, might overlook the importance of...”

That hesitation? That’s the signal. That’s AI saying “I can’t find 10,000 identical versions of this in my training data, so I’m going to hedge.”

The Rule:

You want the take that alienates 50% of the room and bonds deeply with the other 50%. The stuff that makes some people nod vigorously and others leave slightly offended comments. That’s not being divisive for its own sake. That’s having an actual perspective. (You’d think Substack would have more of this. You’d be disappointed.)

Most “thought leadership” is just loud agreement with things most people already believe. Applause lines dressed up as insights. The content equivalent of a CEO posting “Be kind. Be curious. Be bold.” under a mountain sunset and calling it wisdom.

If your hottest take could come from literally any executive with a blue checkmark, it’s not a take. It’s wallpaper.

So your take has edge. AI pushed back. Good.

But here’s the thing about edges: they need to actually cut. And the difference between an idea that cuts and one that just sits there looking sharp? Texture.

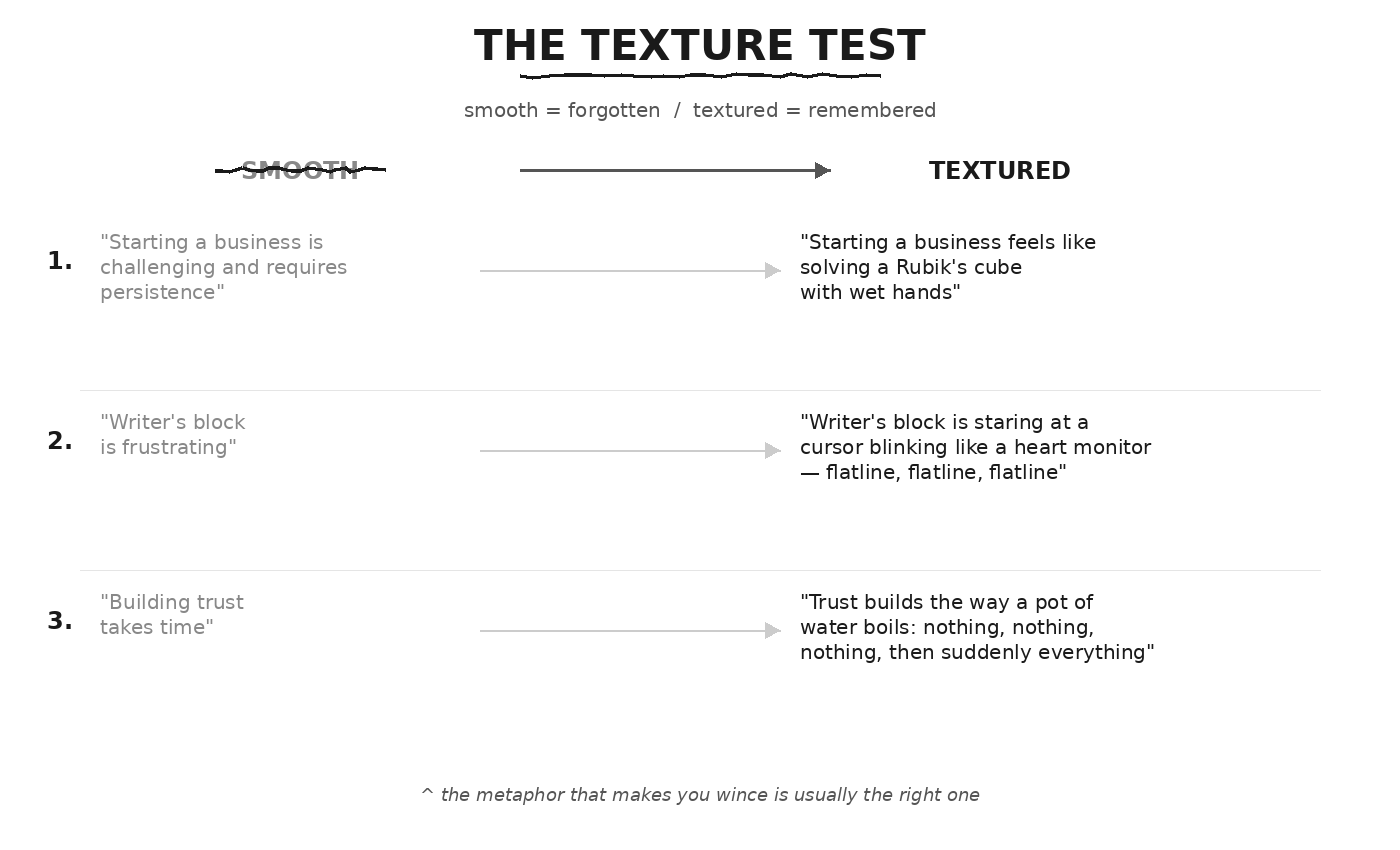

Test 2: The Texture Test

AI writing is frictionless. Perfect logical flow. Smooth transitions. No rough edges.

That smoothness is the problem.

Human experience is textured. Jagged. Physical. We live in bodies that feel things. The tightness in your chest before a hard conversation. The specific weight of your phone when bad news arrives. The way 3am silence sounds different than 3pm silence. The particular brand of regret that hits when you drunk-text someone you ghosted six months ago and watch those three dots appear.

AI lives nowhere. It has no body. No zip code. No 3am.

(Must be nice.)

The Test:

Find your abstract concepts. The places where you’re talking about feelings, challenges, processes. Apply the see/smell/touch test:

Can you literally see, smell, or touch this metaphor?

No? It’s too generic. Your reader’s brain will slide right past it like it’s coated in Diddy’s bulk supply.

Before and After:

When I Caught Myself Being Smooth:

I wrote this sentence in a draft two weeks ago: “Developing your authentic voice takes consistent practice and self-awareness.”

Read it back. Felt nothing. It’s the written equivalent of holding a smooth river stone. Pleasant enough. Leaves zero impression. Could have been written by literally anyone with access to a keyboard and a LinkedIn account.

I replaced it with: “Your voice isn’t something you find. It’s the residue left behind after you scrape away everything that sounds like someone else.”

The first version is true. The second one has edges. The word “residue” makes it slightly gross. “Scrape” implies effort that hurts.

That slight discomfort? That’s the texture. The metaphor that makes you wince a little is usually the right one.

(I write about making AI sound human for a living. Which makes every smooth sentence I produce extra embarrassing. Like a therapist stress-eating Twinkies in the parking lot between sessions.)

The Rule:

Smooth writing slides off the brain. Textured writing leaves splinters.

You don’t need every sentence to be sensory. But every abstract concept needs at least one physical anchor. Something readers can feel in their body, not just process with their mind. Something that makes them go “oh!” instead of just “okay.”

Texture makes abstract ideas physical. Readers feel them instead of just understanding them.

But even textured writing can be generic if it lives nowhere. “Solving a Rubik’s cube with wet hands” is visceral. But it could describe anyone’s business. Anywhere. Any time. It’s a vivid description of nothing in particular.

The next test is about giving your ideas an address.

Test 3: The Location Test

AI has no zip code.

It exists everywhere and nowhere. It can tell you “how to be productive” but not “how to be productive when you’re running on four hours of sleep, your cofounder just rage-quit over Slack, and you’re operating from a coffee shop in Abu Dhabi because your apartment lease ended three days before you expected and you definitely should have read the contract more carefully.”

That first version is a commodity. A hundred thousand articles say the same thing.

That second version? Scarce. Because nobody else has that exact combination of disasters. (And yes, they’re all disasters. The advice industry just learned to call them “constraints.”)

The Test:

Look at your core premise. Is it universal advice or local truth?

The transformation formula:

Generic: “How to do [X]”

Local: “How I did [X] while [constraint] + [constraint]”

My Story:

I took a multi-year hiatus from writing. No blog. No newsletter. No “building in public.” Just... silence.

When I came back, I had no archive to point to. No “here’s my 200th post” credibility. So I have to earn that credibility every single time.

That constraint shapes everything. I can’t write “as I’ve discussed before” because there was no before. Every concept needs to be explained like the reader just met me. (Because they have.)

Turns out that’s just... better writing. The stuff that assumes shared history is lazy. The stuff that earns trust from scratch is stronger.

More Examples:

Generic: “5 Tips for Remote Work”

Local: “How I Managed a Remote Team Across 3 Time Zones While My Internet Cut Out Every Afternoon at 4pm” (The joys of digital nomad infrastructure.)

Generic: “Building an Email List”

Local: “How I Got My First 100 Subscribers With No Existing Audience and Content That Actively Insulted Half My Target Market”

The Paradox:

Everyone thinks specific means small audience.

It’s backwards.

The more specific the context, the more universal the lesson feels. Because everyone’s life is messy. Generic advice matches no one’s actual situation. Specific advice makes readers think: “If they figured it out with THOSE constraints, I can figure it out with mine.”

The Rule:

Nobody bookmarks “5 Tips for Productivity.”

They bookmark “How I Wrote a Book in the NICU Waiting Room.”

Your constraints aren’t bugs. They’re features. Your weird circumstances are differentiation. Stop hiding them.

Specific context grounds your advice in reality. Now readers know where you were standing when you figured this out.

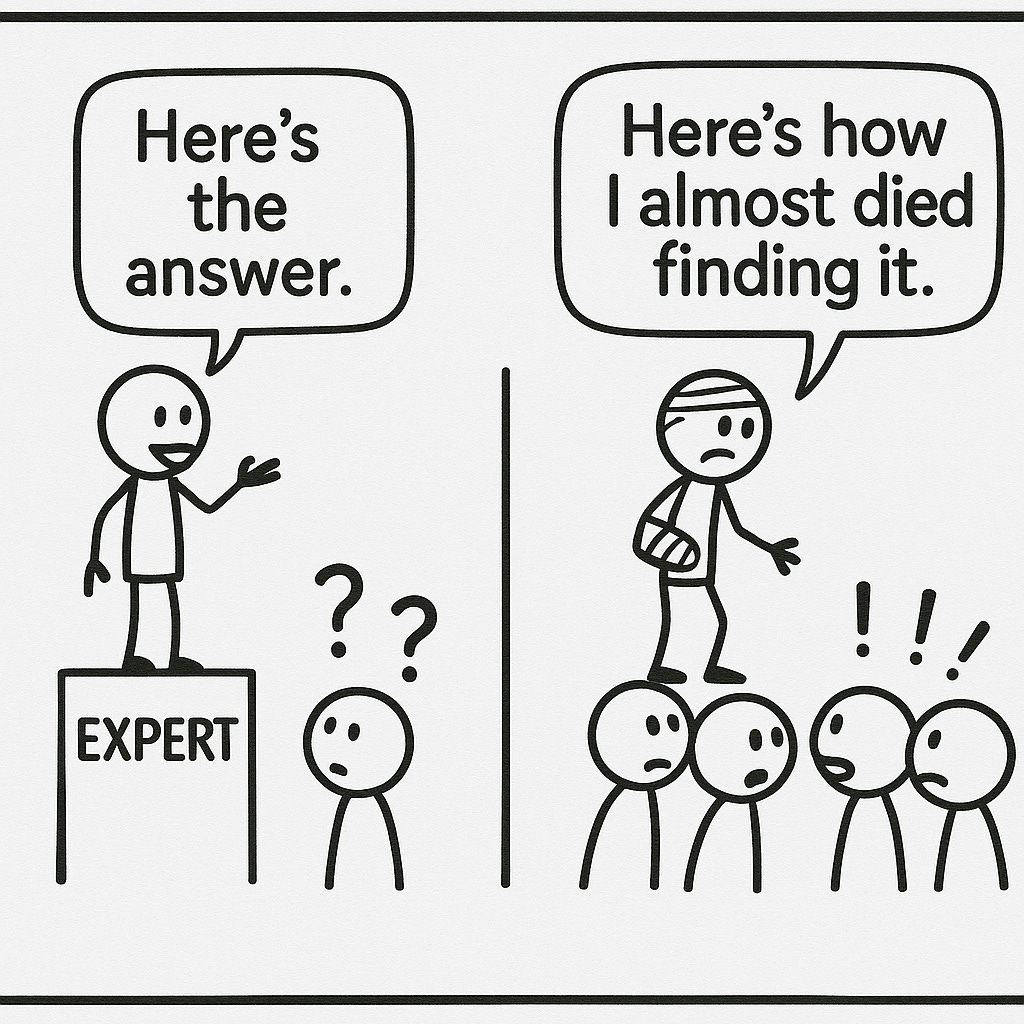

But location alone isn’t enough. We still don’t know if you’ve actually been in the fight. Or if you’re just describing it from the sidelines. Like a sports commentator who’s never thrown a punch telling you how to win a boxing match.

The next test separates tourists from survivors.

Test 4: The Scar Test

AI is aggressively helpful. It presents solutions without stumbles. Clean paths from problem to answer. No wrong turns, no dead ends, no embarrassing detours.

(Because it didn’t take any wrong turns. It pattern-matched to the most probable answer instantly. Must be nice. Again.)

Heading into 2026, that frictionless helpfulness is a red flag.

Inefficiency is a signal now. Proof you actually lived through the problem. The scars are the credentials.

The Test:

Before you share the solution, share the error log.

Not just: “Here’s what works.” But: “Here’s what I tried that didn’t work, what it cost me, and what I finally learned after faceplanting repeatedly.”

The Structure:

Before I figured out [what works], I:

- Tried [wrong approach #1] and [specific consequence]

- Wasted [specific amount] on [wrong approach #2]

- Embarrassingly believed [wrong assumption] for [time period]

Here’s what finally worked...

My Scars:

Before I figured out that voice documentation beats prompt engineering, I bought over a dozen courses on “mastering AI prompts.” Premium collections. Megaprompt templates. The works.

All utterly worthless if you want writing that pops.

I believed the lie: find the right incantation, and the machine craps out bars of pure platinum. Turns out the problem was never the prompt. It was that I was asking AI to write like a good writer instead of teaching it to write like me.

(That realization cost me somewhere north of $500 and about a year and a half of mediocre output. You’re welcome.)

Before I figured out that publishing beats perfecting, I sat on the idea for this newsletter for three years. Three years of “I’ll start when I have more time.” Three years of “I need to figure out my angle first.” Three years of knowing it takes months—maybe years—to build an audience that could replace my income, and using that as an excuse to do nothing.

Then I got laid off.

Now I’m building this thing on my Android device while standing in line at the unemployment office. Regretting every week I didn’t just start the damned thing when the stakes were lower.

(The best time to plant a tree was three years ago. The second best time is when you’re freshly unemployed and mildly panicking.)

Why Scars Work:

Your embarrassing failures are your most valuable content. They’re unfakeable. (Or at least, much harder to fake convincingly.) The scars prove you were in the fight, not commenting from the sidelines with a clipboard.

AI can generate “best practices.” It can’t generate “the thing I tried that blew up in my face and cost me three months and $2000 I’ll never get back.”

The Rule:

“I figured it out” is suspicious. “I faceplanted three times first” is credible.

Credibility used to come from being an expert. Now it comes from being a survivor.

Showing your scars proves credibility. Readers know you’ve actually been in the arena.

But here’s the twist most people miss: the order you bring AI into this process matters more than the tools themselves.

Most people have it exactly backwards. (Shocking, I know.)

Test 5: The Sparring Partner Flip

Most people use AI wrong.

They use it to write the first draft. Start with a prompt, get a rough version, then edit from there.

This is the problem.

When AI writes the first draft, you anchor your thinking to the average. You’re editing toward its baseline, not yours. The human brain pattern-matches. You’ll unconsciously adopt AI’s rhythms even as you try to revise away from them. The bones of the piece are already AI-shaped. You’re just rearranging furniture in someone else’s house. And it looks like a WeWork: exposed brick, motivational quotes, and absolutely no fucking soul.

The Flip:

Write your messy human draft first. The jagged one. The one with half-finished thoughts and weird tangents and sentences that sound like you talking to yourself at 11pm after too much coffee.

THEN bring in AI. Not to draft. To attack.

The Prompt:

“I’m about to publish content arguing [SUMMARY OF YOUR ARGUMENT].

Act as a cynical, skeptical critic. Tear this apart. Tell me:

- Where am I being too generic?

- Where am I hiding behind vague language?

- Where would a smart reader disagree?

- What am I afraid to say directly?”

(Yes, I’m asking AI to be mean to me. It’s called growth.)
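If you reuse this prompt on every draft, a small template keeps the wording consistent. This is a minimal Python sketch. `build_critic_prompt` is a hypothetical helper name, and it only assembles the text above. It does not call any AI service.

```python
# Hypothetical helper that assembles the critic prompt from this post.
CRITIC_QUESTIONS = (
    "Where am I being too generic?",
    "Where am I hiding behind vague language?",
    "Where would a smart reader disagree?",
    "What am I afraid to say directly?",
)


def build_critic_prompt(summary: str) -> str:
    """Return the adversarial-review prompt for a one-line argument summary."""
    # Drop surrounding whitespace and a trailing period so the
    # sentence does not end in a double full stop.
    cleaned = summary.strip().rstrip(".")
    questions = "\n".join(f"- {question}" for question in CRITIC_QUESTIONS)
    return (
        f"I'm about to publish content arguing {cleaned}.\n\n"
        "Act as a cynical, skeptical critic. Tear this apart. Tell me:\n"
        f"{questions}"
    )
```

Paste the result in after your own messy draft exists, not before.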

What This Looks Like in Practice:

This post you’re reading went through exactly this process.

My first draft had placeholder “scars” in Test 4—vague failures with made-up specifics. Claude flagged it: “These feel hypothetical. If they’re not real, they undercut the entire point of the test.”

I was teaching “show your scars” while hiding mine. Classic.

So I replaced them with the real stuff: the $500+ I blew on prompt engineering courses, the three years I procrastinated, the unemployment office. The uncomfortable details I’d been skirting around.

Same draft, different section. I had a story about my Spanish LinkedIn profile that I kept gesturing at but never actually told. Claude’s note: “This is the equivalent of saying ‘I once almost died in a kayak on the Zambezi River’ and then changing the subject.”

Cut it. Replaced it with something that actually demonstrated the principle.

This is draft six. Maybe seven. I lost count.

Why the Order Matters:

Let AI write the draft → you’re editing toward average

Let AI attack the draft → you’re strengthening your edge

The draft stays yours. The critique is AI’s. The revision is yours again.

You maintain creative control. AI handles adversarial QA. The sequence matters more than people realize.

The Rule:

Use AI to sharpen your sword, not to fight the battle for you.

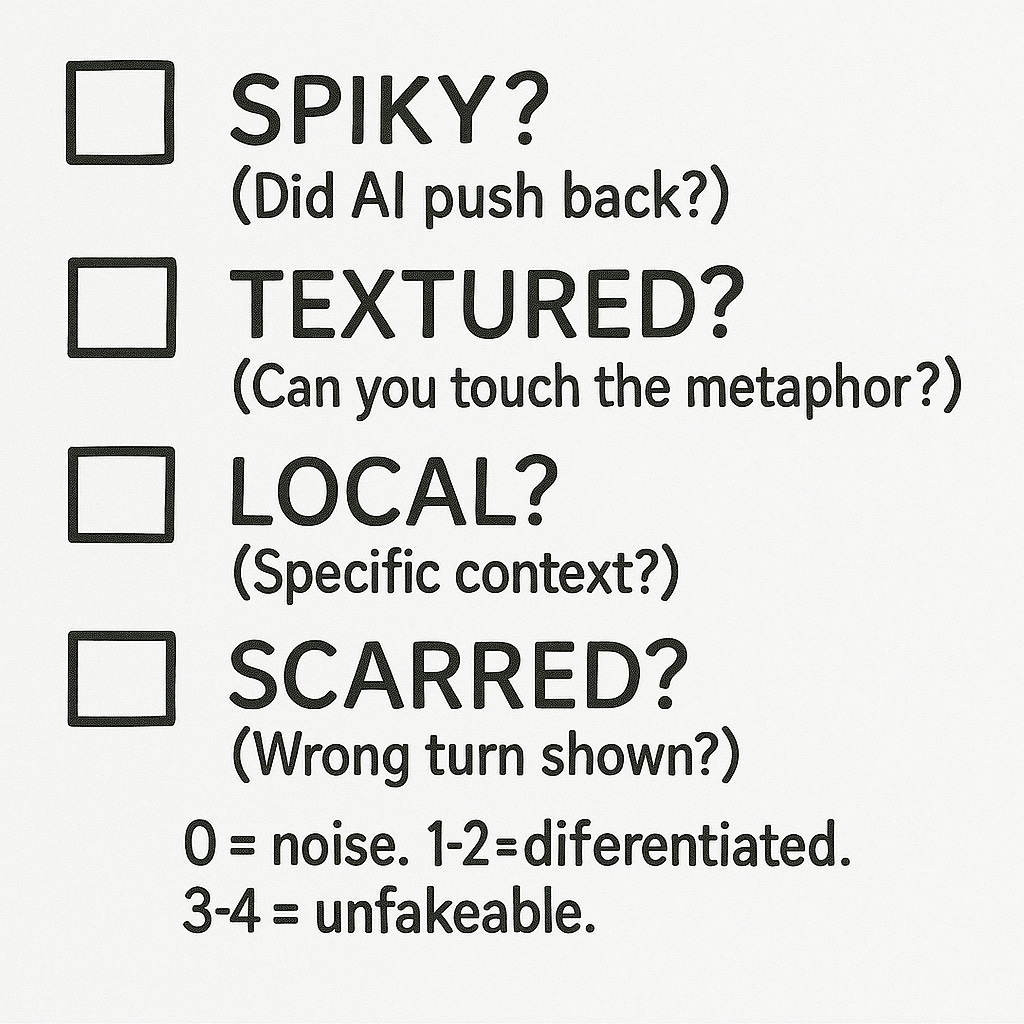

The Anti-Blandening Checklist

Four questions. (Test 5 is a workflow shift, not a yes/no check.)

Run these before you hit publish. Any piece that fails all four is adding to the noise. And the noise doesn’t need your help. It’s doing fine on its own.

☐ SPIKY? — Did AI push back when you asked if this sounds reasonable?

☐ TEXTURED? — Can you see, smell, or touch at least one metaphor?

☐ LOCAL? — Is this grounded in specific context, not universal advice?

☐ SCARRED? — Did you show a wrong turn before the right one?

Scoring:

0/4 = You’re adding to the noise

1-2/4 = Differentiated from average

3-4/4 = Unfakeable by AI
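The scoring is simple enough to do in your head, but here it is as a minimal Python sketch for anyone tracking several drafts. `Checklist` and `verdict` are hypothetical names. The bands mirror the three tiers listed above.

```python
from dataclasses import dataclass


@dataclass
class Checklist:
    """One yes/no answer per test (Test 5 is a workflow, so it is not scored)."""

    spiky: bool
    textured: bool
    local: bool
    scarred: bool

    def score(self) -> int:
        # Each True counts as one point, giving a total from 0 to 4.
        return sum((self.spiky, self.textured, self.local, self.scarred))


def verdict(score: int) -> str:
    """Map a 0-4 score onto the three bands from the checklist."""
    if not 0 <= score <= 4:
        raise ValueError("score must be between 0 and 4")
    if score == 0:
        return "You're adding to the noise"
    if score <= 2:
        return "Differentiated from average"
    return "Unfakeable by AI"
```

For example, `verdict(Checklist(spiky=True, textured=False, local=True, scarred=True).score())` lands in the top band.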

My Score on This Post

I ran this checklist on the piece you’re reading right now. Also on the three posts before it.

Results weren’t pretty.

This post failed Textured on the first draft. Too many abstract concepts explained with more abstract concepts. I had to go back and add the “cursor blinking like a heart monitor” line, the “Rubik’s cube with wet hands” example, the “scraping away residue” metaphor. None of those were in version one. Version one was very professional. Very smooth. Very forgettable.

It failed Scarred on draft two. I had the structure (“show your wrong turns”) but I hadn’t actually shown mine. I was teaching the principle while violating it. (This is my specialty, apparently.)

The three posts before this? Failed Textured twice, failed Local once. Apparently I’ve been defaulting to universal advice because it’s easier to write. “Anyone can apply this!” feels generous. Turns out it just means forgettable.

The Uncomfortable Truth:

These tests won’t make writing easier. They’ll make it harder. Every abstraction needs a physical anchor. Every insight needs a location. Every solution needs the errors that preceded it.

That’s the point.

The Great Blandening is real. 7.5 million posts today, 7.5 million tomorrow, and the vast majority of them will be reasonable, balanced, smooth, universal, and invisible.

The bar keeps rising. The floor of “acceptable” content gets higher every month as AI gets better at producing acceptable content. Standing out doesn’t mean being louder. It means being unfakeable.

These four tests are my pre-flight checklist. They don’t guarantee the piece will land. But they guarantee it couldn’t have come from anyone else.

(And honestly? That might be the only competitive advantage left.)

Which of these tests does your current draft fail? Be honest with yourself. I failed two of them on a post about the tests themselves. That’s either embarrassing or instructive. Probably both.

Crafted with love (and AI),

Nick “The Unfakeable” Quick

P.S. Want more on collaborating with AI without producing slop? I publish new frameworks every Wednesday and Sunday. Subscribe and I’ll send them straight to your inbox. No spam, no automation worship, just practical methodology for writers who refuse to sound like everyone else.

I really resonate deeply with what you wrote here; it's a brilliant articulation of the intellectual challenge AI presents to content creation. Your point about avoiding ‘drink more water’ advice is crucial; true insight emerges when we push beyond readily accepted consensus, even from advanced models like ChatGPT.

Damn, this is a lot of “punch in the gut” advice for how lazy I’ve been with my AI writing. A lot of my writing is “marketing” emails right now, so I just let it slide, but they have my name on them and this article makes me want to take that a lot more seriously. (And now I have a tangible checklist to do it, so thanks!)