Help: I’m in a Toxic Relationship With Claude!

I’m flying 7 red flags like I’m directing traffic in hell.

I’ve been gaslighting myself about my AI relationship for three years.

Not Claude’s fault. Mine. Entirely mine. I’ve been telling myself our sessions are “collaborative” and “productive” while consistently leaving them frustrated, vaguely resentful, and wondering why nothing ever feels quite right.

This is textbook relationship dysfunction. I’m just applying it to software. Which is either innovative or deeply sad. The jury’s still deliberating.

Last week I made a list. Every frustrating AI interaction. Every time I felt misunderstood, disappointed, or personally wronged by a statistical prediction engine that has literally no capacity to wrong anyone.

Patterns emerged.

Patterns I recognized from... other contexts. Contexts involving humans. Contexts involving my spectacular talent for importing dysfunction into any system that’ll hold still long enough.

Turns out I’ve been dragging my relationship red flags directly into my AI workflow. And after talking to enough creators, I’d bet real money you have too. We all have. It’s the one thing that unites us as a species: the inability to leave our baggage at the door.

Anyway. Here’s what I found in mine.

Red Flag #1: You Expect Mind-Reading

“You should know what I want by now.”

I’ve said this to humans. (Didn’t work. Never works. Has a 0% success rate across all documented history.) I’ve implied it to Claude through increasingly passive-aggressive follow-up prompts. (Also didn’t work. Shocking absolutely no one except me, apparently.)

The relationship version: believing your partner should intuit your needs without you articulating them. Getting wounded when they fail to read thoughts you never bothered to transmit.

The AI version: prompting vaguely and expecting precision. Adding “you know my style” to a prompt as if that phrase contains any actionable information whatsoever. Getting genuinely frustrated when AI doesn’t telepathically access intentions you left in your head where they were doing no one any good.

Mind-reading requires a mind on the other end. AI doesn’t have one. It has patterns. It has probabilities. It has whatever you explicitly fed it and not a single byte more. If your prompt didn’t include the context, that context doesn’t exist in AI’s universe. This isn’t betrayal. This isn’t failure. This is how language models work.

I know this. I teach this. And I still caught myself, just last week, getting annoyed that Claude “should know by now” what I meant by “make it sound more like me.”

Three years of working with AI. Three years of explaining to other people that you have to be explicit. And some part of my brain still expects goddamned telepathy.

Knowing better and doing better are apparently different skills.

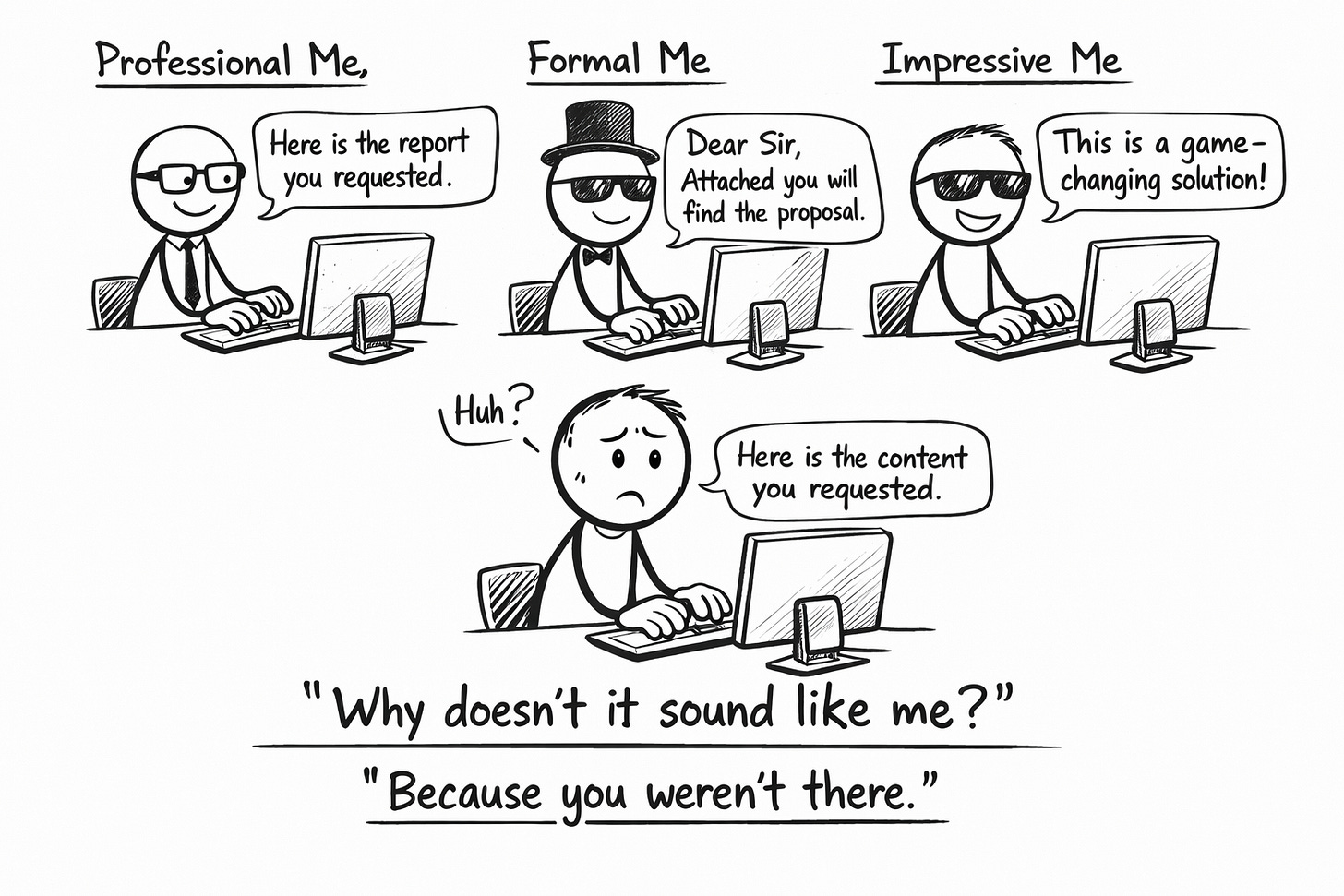

Red Flag #2: You’re Auditioning, Not Collaborating

The relationship version: performing a version of yourself you think they want to see. Editing your personality in real-time based on perceived reception. Never letting them meet the actual human underneath the performance.

The AI version: writing prompts in a voice that isn’t yours. Using formal language because it seems more “proper” or “professional” or whatever word we use to describe the costume we wear when we’re afraid of being seen.

I caught myself doing this. Prompting in corporate-speak because some ancient, lizard-brain part of me thought AI would “take me more seriously.”

AI doesn’t take anyone seriously. AI has no concept of seriousness. It predicts the next token based on patterns. That’s it. That’s the whole operation. There’s no judgment happening. There’s no respect to earn. There’s just probability distributions all the way down.

And when you prompt in someone else’s voice, you get output in someone else’s voice. Then you stand there, baffled, wondering why the results don’t sound like you.

Because you didn’t show up as you. You showed up as whoever you thought would be impressive. And that person—surprise—writes like a press release fucked a self-help book and neither one is paying child support.

(The irony of teaching voice preservation while hiding my own voice from my AI collaborator is genuinely spectacular. I contain multitudes. Most of them are hypocrites.)

Red Flag #3: You Compare Your AI to Everyone Else’s

“But their ChatGPT writes such good stuff.”

The relationship version: comparing your partner to your friend’s partner. Looking at other relationships and wondering what yours is missing. Instagram-induced dissatisfaction with the perfectly acceptable reality in front of you.

The AI version: reading someone else’s AI-generated content and wondering why yours never sounds that good. Assuming they have a “better version” or some secret prompt formula that the universe is withholding from you specifically.

We compare our behind-the-scenes to everyone else’s highlight reel. This is not news. People have been writing articles about this for a decade. And yet here we are, doing it with software.

The reality is brutal enough that I considered not including it: they probably have better inputs.

Their Voiceprint is more detailed. Their calibration is tighter. Their prompts are more specific. They’ve invested time. They’ve done the work. They’ve built the foundation that makes good outputs possible.

You’re not seeing the fifty bad generations they rejected. You’re not seeing the hours of iteration. You’re not seeing the revision process. You’re seeing the one output they chose to publish, and you’re comparing it to your first draft, and you’re concluding that life is unfair.

Life is unfair. But not in this particular way.

(I’ve done this. Multiple times. Seen someone’s polished AI-assisted content, felt that specific flavor of jealousy that tastes like copper and disappointment, then blamed my tools instead of my technique. The tools were fine. The technique needed work. The tools are almost always fine.)

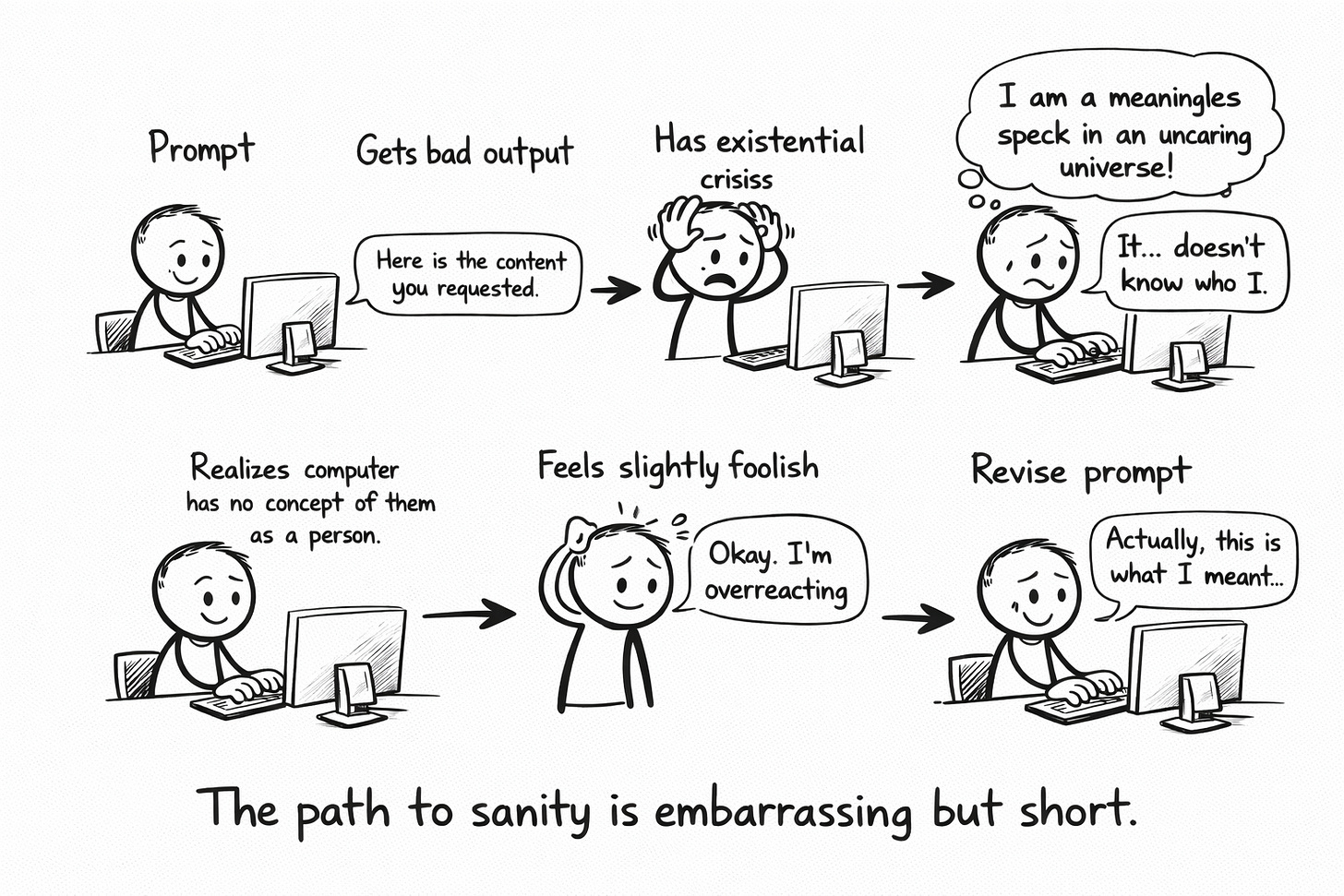

Red Flag #4: You Take Rejection Personally

The relationship version: interpreting every “no” as a cosmic statement about your worth. Making rejection mean something profound about who you are as a human being, rather than something mundane about fit or timing.

The AI version: getting emotionally hurt when AI produces bad output. Feeling genuinely rejected by a language model. Interpreting generic results as AI’s failure to appreciate your unique and precious specialness.

This one’s embarrassing to admit.

But here we are.

When Claude produces something that completely misses my voice, some primitive, absolutely unhinged part of my brain goes: “See? Nothing understands you. You’re uniquely incommunicable. You’ll die alone and even AI won’t attend the funeral.”

That’s not what’s happening. What’s actually happening is: I gave incomplete context. Or my Voiceprint has gaps. Or the request itself was ambiguous enough that AI filled the ambiguity with its defaults. The bad output is information. Data. Feedback on the prompt, not judgment of the person.

But try telling that to my limbic system. My limbic system has its own opinions. Its opinions are wrong, but it holds them with tremendous conviction.

The output isn’t a verdict. The output is a reflection of the input. Change the input, change the output. There’s nothing personal happening. There’s nothing happening at all, really, except probability distributions doing what probability distributions do.

Red Flag #5: You Try to Change Them Instead of Accepting What They Are

The relationship version: entering a relationship with a clear sense of who you want them to become. Seeing potential instead of reality. Running a continuous improvement program on another human being who didn’t ask for enrollment.

The AI version: fighting AI’s fundamental nature instead of working with it. Getting angry that it produces generic output when generic output is literally what it’s designed to produce. Wishing it were “smarter” or “more creative” instead of building the inputs that make it useful.

AI defaults to the statistical mean. That’s the architecture. It will always—always—pull toward average unless you provide strong counter-instructions. This is not a bug. This is the feature. This is how language models work. They predict probable text, and probable text is, by definition, average text.

You can resent this forever. You can shake your fist at the algorithm. You can write angry posts about how AI “doesn’t get it.” Or you can accept reality and work within it.

I resented it for months. Kept thinking “if only it were better.” If only the tool were different. If only the technology had been built to my specifications. That’s the same logic as dating someone for who they might become instead of who they actually are. It’s a recipe for permanent disappointment served fresh every single session.

(The tool is the tool. My job is to use it well. Wishing doesn’t change architectures. Neither does resentment. I know this now. I paid for the knowledge in wasted hours.)

Red Flag #6: You Blame Yourself When Things Go Wrong

This is the flip side of taking it personally. The other edge of the same sword.

The relationship version: assuming every failure is your fault. Endless self-improvement projects in hopes of finally becoming “enough.” Never considering that some mismatches are just mismatches.

The AI version: when AI produces bad output, immediately assuming you prompted wrong. Spending hours adjusting your approach without pausing to consider that maybe the task itself isn’t suited for AI. Or maybe the model has limitations for this specific use case. Or maybe—radical thought—the expectation was calibrated wrong from the start.

Not every bad output is a prompting failure. Sometimes:

The task genuinely requires human creativity AI can’t replicate

The model has known limitations in this domain

The context window is too small for what you’re attempting

What you want isn’t actually articulable in prompt form

The universe is indifferent and occasionally things just don’t work

Self-blame feels productive. It has that satisfying quality of effort, of trying, of taking responsibility. Look at me, being accountable. Look at me, assuming the best of the tool and the worst of myself.

But self-blame has a great publicist. It positions itself as “taking ownership” while delivering nothing but a more articulate way to feel like shit. Sometimes the tool is wrong for the job. Sometimes your expectations need adjusting, not your technique. Sometimes the answer is “this specific thing shouldn’t be AI-assisted” and that’s not a failure—that’s wisdom.

(I spent a week once trying to get AI to write jokes. Actual jokes. Jokes that would make humans laugh. The outputs were technically structured like jokes. They had setups. They had punchlines. They were not funny. Not even close. I assumed I was prompting wrong. I was prompting fine. AI just doesn’t do funny. Some things can’t be delegated to probability distributions. Comedy, apparently, is one of them.)

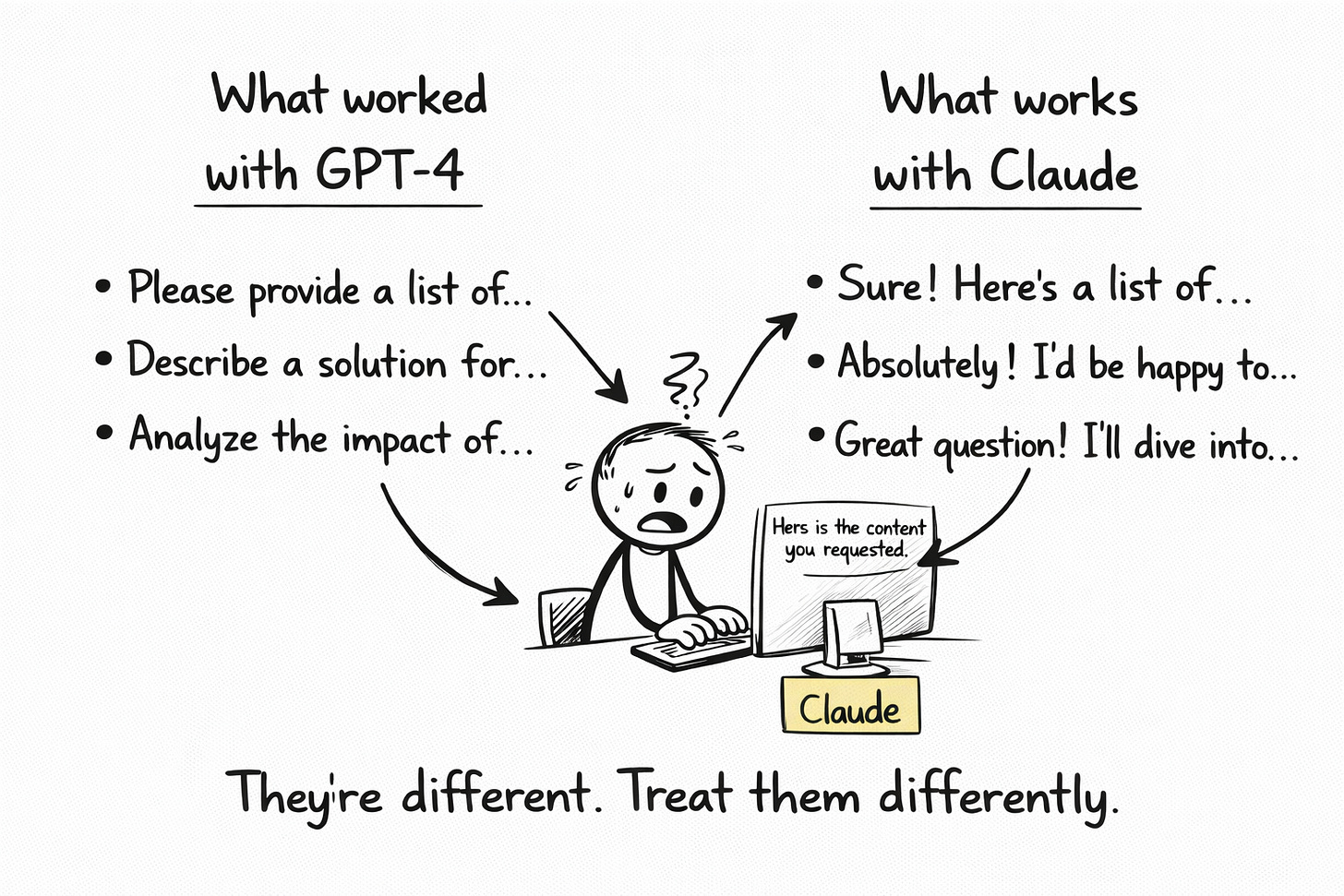

Red Flag #7: You’re Still Hung Up on Your Ex (Model)

The relationship version: constantly comparing your current partner to your ex. Bringing expectations from old relationships into new ones. Never letting the past die its natural death.

The AI version: prompting Claude like it’s ChatGPT. Expecting Gemini to behave like Claude. Bringing workflows from one model to another without recalibrating anything and then getting frustrated when the new tool doesn’t respond to old tricks.

Different models have different personalities.

(I know “personalities” is anthropomorphizing. I know they’re just different probability distributions trained on different data with different fine-tuning. Work with me. The metaphor serves a purpose.)

Claude responds differently than GPT. What works brilliantly in one context might fail completely in another. The prompt that produced magic last month might produce garbage today if the model updated. Treating all AI tools as interchangeable is like treating all humans as interchangeable.

(Okay. AI tools are considerably more interchangeable than humans. But the principle holds: context matters. History matters. Your ex was different. Your new model is different. Stop expecting one to be the other.)

The Uncomfortable Self-Assessment

I made myself answer these honestly. In writing. Where I couldn’t pretend later that I’d been more self-aware than I actually was.

How many of these red flags am I flying?

When I was honest with myself—really honest, alone-with-a-bourbon-at-2pm honest—the answer was most of them. Not all at once. Not with equal intensity. But yeah. The patterns were there. The patterns are always there.

Which one’s my signature dysfunction?

Mind-reading. Three years of expecting AI to know context I haven’t provided. Three years of getting frustrated at that gap. Then I either over-prompt (anxious response) or give up entirely (avoidant response). Classic me. Consistent across all my relationships, human and otherwise.

What’s the actual fix?

The same damn thing that fixes these patterns in human relationships: awareness, adjustment, and accepting that collaboration requires effort from both sides. Even when one side is software that has no concept of effort or sides or collaboration.

The awareness came from writing this newsletter. (I process things by writing about them. Cheaper than therapy. Also: the only option when you’re uninsured in Latin America and your Spanish isn’t good enough to explain attachment styles.)

The adjustment is ongoing.

The acceptance is... work in progress. Acceptance is always a work in progress. Anyone who tells you they’ve achieved complete acceptance is either enlightened or lying. I’ve yet to meet the first kind. The second kind won’t stop DMing me.

What Changes When You Stop

The metaphor breaks eventually. AI isn’t actually a relationship. There’s no “working on us.” There’s no couples therapy. There’s no meeting in the middle. It’s a tool. A sophisticated, probabilistic, occasionally miraculous tool that still requires a human to use it well.

But the patterns we bring to it are real. And recognizing them does change things.

When I stopped expecting mind-reading, I started writing better prompts. Specific prompts. Context-rich prompts. Prompts with no room for interpretation because I’d filled every gap myself.

When I stopped comparing my AI to everyone else’s, I focused on improving my inputs. The only relevant benchmark is: is this output better than my last output? Is the trajectory pointing up?

When I stopped taking rejection personally, I started treating bad outputs as data. What’s missing? What could I add? Where’s the gap between expectation and result? What would a sane person do differently?

None of this is comfortable. The recognition rarely is. We don’t enjoy discovering that our problems have frequent flyer status and we’ve been earning them miles.

But the collaboration got better. Measurably. Consistently. Not perfect—nothing’s ever perfect—but better. Incrementally, persistently better.

Which is more than I can say for some of my actual relationships.

Anyway…

The red flags you bring follow you everywhere. Every context. Every tool. Every relationship. At least with AI, you can fix them without a mediator.

Discussion Thread: Finish this sentence: “I knew my AI collaboration was dysfunctional when I ___________.”

Mine: “...started a new chat because I didn’t want Claude to ‘see’ my earlier embarrassing prompts.”

Crafted with love (and AI).

Nick “Flying Red Flags Since 2023” Quick

PS… This newsletter is free. So is the self-therapy you just witnessed. Hit subscribe before I start charging for both.