23 Missing Women, 6 Timelines, and the AI That Finally Shut Up and Listened

What I learned building an audio drama that should’ve collapsed

Teaching AI to write like you is hard.

I’ve spent two years solving that particular problem. Documenting voice patterns so granularly that AI can’t wiggle back to its defaults. Calibrating AI through feedback loops until the output stops sounding like a press release written by someone who’s never had a bad day. Building workflows that produce content sounding like you wrote it. Because you pretty much did, with AI handling the grunt work while you handled the soul.

It works. For newsletters, articles, social posts, educational content, the methodology holds. I teach it. People use it. The output sounds like you. Specifically, recognizably, couldn’t-be-anyone-else you.

Then I tried to teach AI to write like twenty-three people who don’t exist.

And the whole system cracked in places I didn’t expect. In ways that (once I stopped swearing at my monitor and started paying attention) made everything I’d built significantly better.

How Fiction Broke the System

I’m producing an audio drama called Signal Loss. Six interwoven storylines. A season-long mystery. Twenty-three missing women who vanished after posting to a Reddit thread describing a shelter that only exists between 2 and 4 AM. A quantum physics subplot. A tale where mass belief doesn’t just describe reality. It creates it.

(Yes, I’m aware that sounds like I’ve been licking toads. The toads are relevant. Metaphorically. There are no actual toads in the script. Though now I’m wondering if there should be.)

I sat down with every AI collaboration technique I’ve spent two years refining. Every workflow. Every calibration method. Every voice documentation system I teach for actual money to actual people who trust me.

They fell apart in about forty-five minutes.

Not because they’re wrong. Because they were designed for a specific kind of problem, and fiction is a different kind of problem in the way that juggling chainsaws is a different kind of problem than juggling tennis balls. The physics are the same. The consequences of dropping one are not.

Fiction broke the system at the exact weak points I didn’t know existed because nothing had stress tested them before.

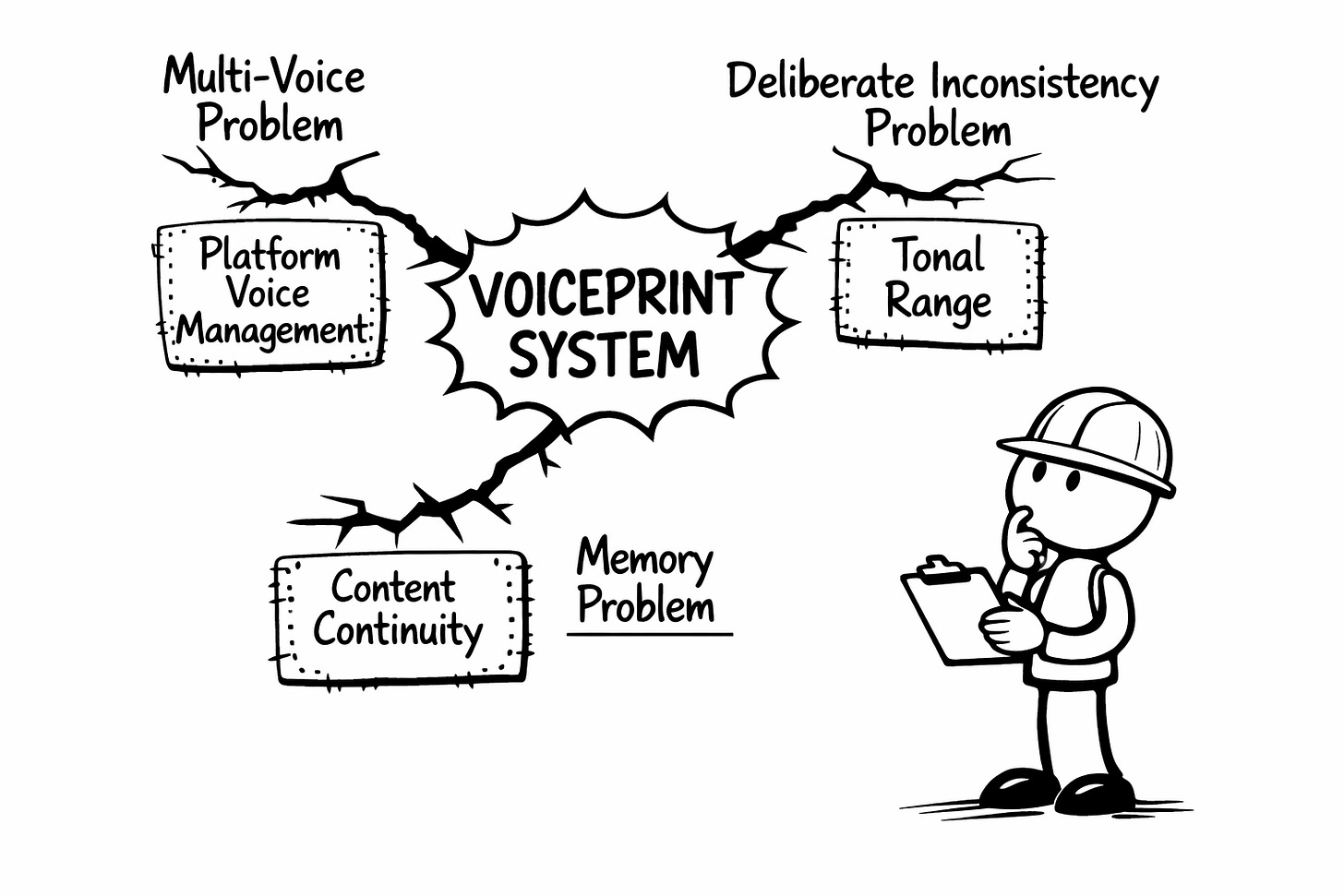

Three Ways Fiction Broke My Voiceprint

My methodology is built on something called a Voiceprint. A detailed map of how you write, documented across four layers: Vocabulary (your words), Architecture (how you structure ideas), Stance (your relationship with the reader), Tempo (the rhythm underneath the meaning).

You feed AI your Voiceprint. You calibrate through iterative feedback. AI produces output in your patterns instead of defaulting to the statistical average of every mediocre LinkedIn post ever published. One voice. Documented. Consistent.
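If it helps to see the four layers as a structure rather than a description, here is a minimal sketch in Python. The class name, the `to_prompt` helper, and every example value are my own placeholders for illustration, not the actual Voiceprint format.

```python
from dataclasses import dataclass


@dataclass
class Voiceprint:
    """Four-layer voice description. Field contents are illustrative only."""
    name: str
    vocabulary: str    # your words
    architecture: str  # how you structure ideas
    stance: str        # your relationship with the reader
    tempo: str         # the rhythm underneath the meaning

    def to_prompt(self) -> str:
        """Render the four layers as a preamble to paste ahead of a request."""
        return "\n".join([
            f"Write in the voice of {self.name}.",
            f"Vocabulary: {self.vocabulary}",
            f"Architecture: {self.architecture}",
            f"Stance: {self.stance}",
            f"Tempo: {self.tempo}",
        ])


# Hypothetical example values, not a real Voiceprint.
example = Voiceprint(
    name="the author",
    vocabulary="plain words, occasional swearing, no corporate jargon",
    architecture="short claim, then a concrete example, then the turn",
    stance="peer talking to a peer, never lecturing",
    tempo="fragments mixed with one long sentence per paragraph",
)
preamble = example.to_prompt()
```

The point of a structure like this is only that each layer gets written down separately, so feedback can target one layer at a time instead of "make it sound more like me."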

Fiction put that system in a headlock and politely asked it to reconsider its life choices.

The multi-voice problem. A Voiceprint captures one identity. Fiction requires multiple simultaneous identities that need to stay distinct. Marcus sounds different from Zoe sounds different from Tina. Not because they use different vocabulary about the same topic—that’s surface-level—but because they think differently. They structure arguments differently. They relate to uncertainty differently.

The system I teach handles one voice brilliantly. It had no framework for preventing voices from bleeding into each other during the same project. Tina started sounding like Marcus. Zoe picked up my cadence instead of her own. The gravitational pull toward sameness—the exact problem the Voiceprint was built to solve—re-emerged between characters.

Turns out solving convergence for one voice doesn’t solve it for five.

The deliberate inconsistency problem. Ink Sync (my calibration process) trains AI for consistency. You run feedback loops until the output reliably matches your patterns. Consistency is the whole point.

Fiction needs consistency within each character and deliberate inconsistency between them. I needed the system to maintain five different voices simultaneously and keep them separate. I was asking my methodology to do the opposite of what I’d spent two years teaching it to do.

The memory problem. Non-fiction collaboration happens piece by piece. Each newsletter is essentially standalone. Your Voiceprint persists, but the content doesn’t need to remember with forensic precision what you wrote three issues ago.

Fiction needs to remember everything. That Tina speaks in fragments with a specific rhythm. That 173 bot accounts went dormant within days of each other. That Zoe’s skepticism manifests as academic hedging she’d never abandon. That someone’s bourbon preference in episode two had better match episode seven, or listeners will write to you about it until you die.

The collaboration system I built had no mechanism for persistent narrative memory across sessions. The methodology handled a singular voice beautifully. It had nothing for continuity.

Voice without memory is a singer who can hit every note but can’t remember the damn song.

Why This Matters (Even If You Never Write Fiction)

Every one of these failures is a complexity problem, not a fiction problem.

Multi-voice? You already deal with that. Your Facebook doesn’t sound like your newsletter doesn’t sound like your course content. Same person, different registers. The methodology handles this loosely. Fiction demanded I get precise about it. Which means the precision now exists for anyone managing voice across multiple platforms.

Deliberate inconsistency? That’s tonal range within a single piece. The section where you’re vulnerable should sound different from the section where you’re authoritative. Fiction forced me to articulate exactly what stays constant and what needs to shift. That distinction was always missing.

Persistent memory? That’s content series. Multi-part courses. Anything that references what came before without accidentally contradicting itself. The methodology handled standalone pieces like a dream. Fiction demanded continuity across a season. That continuity framework now exists for anyone building anything longer than a single article.

I pushed the system into harder territory and it cracked. The cracks showed me exactly where to reinforce.

Every tool gets better when you finally use it for something it wasn’t designed to handle.

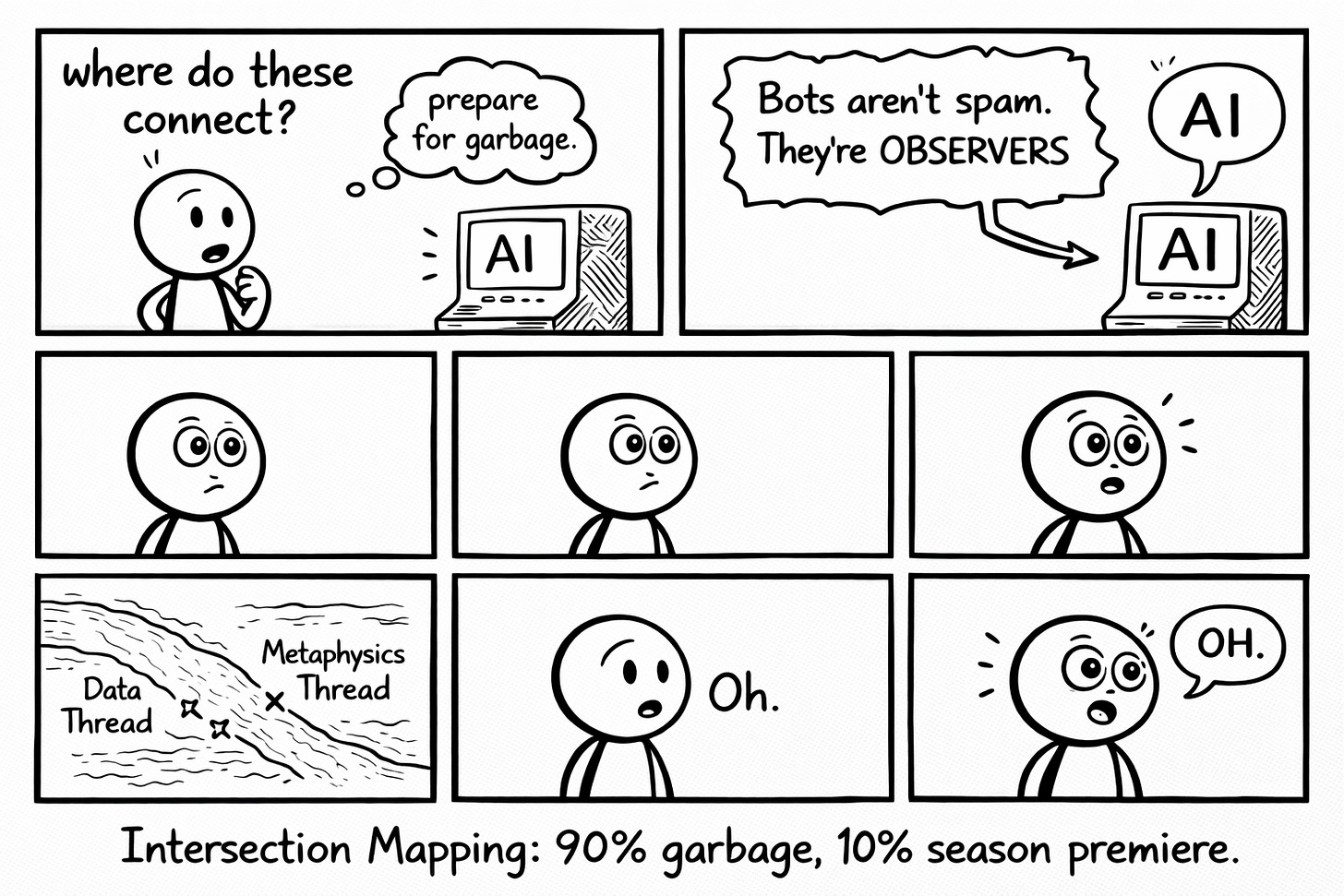

The Experiment: Intersection Mapping

Two techniques crystallized from the wreckage. Neither is revolutionary on its own. What’s different is how they extend the existing collaboration framework into territory it couldn’t reach before.

Here’s something that actually happened. Not hypothetically. Actually.

I described two separate storylines I was developing.

Thread A: Zoe’s data analysis reveals that bots on a Reddit thread are discussing quantum physics. Weird, but explainable.

Thread B: The shelter’s existence seems tied to observer effects. Looking for it makes it disappear. Not looking allows it to exist.

I asked AI where these might naturally collide.

I was expecting garbage. AI suggestions for narrative connections are wrong far more often than they’re right, and the wrong ones have that particular flavor of confident mediocrity.

But this time: “The bots aren’t just generating spam. They’re farming believers. Every post about this women’s shelter that someone reads and never questions, never Googles, never doubts, just absorbs as true, is load-bearing. Enough belief and the shelter manifests. The bots aren’t the architects. They’re the evangelists.”

I stared at my screen for approximately eleven seconds.

That intersection became the entire season’s foundation. Not through observation. Through acceptance. Thousands of people who never verified, just absorbed, until a women’s shelter dragged itself from fiction into reality on the back of human credulity.

AI didn’t write that idea. But AI surfaced the connection hiding between two threads I was treating as entirely separate, because my brain was too full of logistics to see what was sitting right there, patient, obvious, waiting for someone to bother looking.

Intersection Mapping is using AI to discover where separate elements collide in ways you hadn’t planned. Most suggestions are useless. I cannot stress this enough. Your judgment still matters. Probably more than it did before, because now you’re sorting gold from a much larger pile of dirt.

But occasionally. Gold. The kind that feels inevitable to audiences but wasn’t remotely obvious during drafting.

And this isn’t fiction-exclusive. It works anywhere you’re juggling multiple ideas that might connect. Content series. Course architecture. Newsletter arcs. Anywhere the interesting thing lives between the pieces rather than inside them.
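For anyone who wants to try Intersection Mapping on more than two threads, one way to set it up is to generate a prompt for every pair. This is a rough sketch, not my exact process: the function name and the prompt wording are assumptions, and the thread summaries are shortened from the two storylines above.

```python
from itertools import combinations

# Thread summaries paraphrased from the two storylines described earlier.
threads = {
    "A": "Bots on a Reddit thread are discussing quantum physics.",
    "B": "The shelter's existence seems tied to observer effects.",
}


def intersection_prompts(threads: dict[str, str]) -> list[str]:
    """Build one 'where might these collide?' prompt per pair of threads."""
    prompts = []
    for (name_a, text_a), (name_b, text_b) in combinations(threads.items(), 2):
        prompts.append(
            f"Thread {name_a}: {text_a}\n"
            f"Thread {name_b}: {text_b}\n"
            "Where might these two threads naturally collide? "
            "Give three candidates and say what each one would change."
        )
    return prompts


prompts = intersection_prompts(threads)
```

Six storylines would produce fifteen pairs, which is a lot of dirt to sort through. The sorting is still your job.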

The Codex

Every character. Every location. Every established rule. Every planted detail. Every unresolved thread. All in one persistent document that AI references before it generates a single word.

When I draft a scene: “Given what we’ve established about Tina’s voice pattern, how would she phrase this warning?” AI proposes. I decide. The Codex updates. The cycle continues. Nobody’s eyes change color without authorization.

When I’m about to contradict my own continuity (which happens with a frequency that should probably disqualify me from writing fiction at all), AI flags the conflict before it becomes a plot hole.

Writers have kept story bibles forever. The concept predates electricity. What’s different is having a collaborator that can actually use it. Cross-reference forty pages of established details in seconds. Flag the contradiction before it ships. Never convince itself it “definitely remembers” when it definitely does not.

The Codex is the Voiceprint’s bigger, more obsessive sibling. The Voiceprint documents how you write. The Codex documents what you’ve written, and makes damn sure you don’t accidentally unwrite it next Tuesday.

For non-fiction, this translates directly: A course curriculum that builds on previous modules without contradicting them. A content series that references its own history accurately. A brand that evolves without creating parallel universes of conflicting information. Any long-form project where Session 1’s decisions need to remain true by Session 10.

The implementation is identical. Create a master reference document. Update it after each session. Have AI check against it before generating. The framework that keeps Marcus from contradicting Marcus also keeps Module 3 from contradicting Module 1.
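Those three steps (create, update, check) can be sketched in a few lines of Python. Treat this as a toy under stated assumptions: the `Codex` class, its method names, and the JSON layout are hypothetical, and a real Codex is a prose document an AI reads, not a lookup table. The two example facts come from the Signal Loss details mentioned earlier.

```python
import json
import tempfile
from pathlib import Path


class Codex:
    """A tiny persistent store of established facts, keyed by entity and attribute."""

    def __init__(self, path: str):
        self.path = Path(path)
        # Load what earlier sessions established, or start empty.
        self.facts = json.loads(self.path.read_text()) if self.path.exists() else {}

    def conflicts(self, proposed: dict) -> list[str]:
        """Return one message per proposed fact that contradicts the Codex."""
        found = []
        for entity, attrs in proposed.items():
            for attr, value in attrs.items():
                known = self.facts.get(entity, {}).get(attr)
                if known is not None and known != value:
                    found.append(
                        f"{entity}.{attr}: Codex says {known!r}, draft says {value!r}"
                    )
        return found

    def update(self, approved: dict) -> None:
        """Merge facts the author has approved, then save them to disk."""
        for entity, attrs in approved.items():
            self.facts.setdefault(entity, {}).update(attrs)
        self.path.write_text(json.dumps(self.facts, indent=2))


# Demo in a temporary directory so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp:
    codex = Codex(f"{tmp}/codex.json")
    codex.update({"Tina": {"speech": "fragments"}, "bots": {"dormant_accounts": 173}})
    flags = codex.conflicts(
        {"Tina": {"speech": "long monologues"}, "bots": {"dormant_accounts": 173}}
    )
    reloaded = Codex(f"{tmp}/codex.json").facts  # simulates opening a new session
```

Here `flags` holds a single complaint about Tina’s speech, and `reloaded` shows the facts surviving into a fresh session. The check runs before anything ships, and the update only happens after you approve.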

The Part That Doesn’t Make the Highlight Reel

The upfront investment is enormous. Building the Codex for Signal Loss took longer than writing all the episodes. You’re not saving time. You’re borrowing against future productivity and hoping the project survives long enough to repay the debt. Signal Loss is the first project where the logistics didn’t win. The Codex is why.

AI doesn’t understand why something is powerful. It can tell you two elements might connect. It cannot tell you whether that connection will make anyone feel anything. That’s still craft. Still you. Still the difference between work that matters and work that merely exists.

And complexity becomes a hiding place if you let it. More threads aren’t automatically better. Sometimes the intersection AI suggests is technically interesting and dramatically dead.

AI isn’t making Signal Loss good. AI is making Signal Loss possible to attempt.

The creativity is mine. The judgment is mine. The work is absolutely still the work. What changed is the management. The logistics. The ability to hold a fictional universe in memory while my actual memory is three coffees deep at 1 AM.

Which is less inspiring than “AI made me a genius” but has the advantage of being true.

The Stress Test You Can Run This Week

Fiction stress-tested my system. But you don’t need to write an audio drama to find the same structural weaknesses in your own AI collaboration.

Pull your last three published pieces. Read them back to back. Not for quality, for consistency.

Ask yourself:

Does your voice hold across all three, or does it shift in ways you didn’t intend? If you’re writing for multiple platforms, do the platform versions sound like the same person in different rooms, or different people in the same room?

Is there anything you established in piece one that you accidentally contradicted in piece three? A claim you softened. A position you abandoned. A fact you stated differently because you forgot how you’d stated it before.

If you found drift, you’ve found your crack. Same cracks fiction found in my system: multi-voice bleed, unintentional inconsistency, memory gaps.

The fixes are identical:

Document the pattern that’s drifting. Build a reference that persists across sessions. Stop trusting yourself to “definitely remember.” Your memory lies. Mine does too. That’s why we build systems that don’t.

Run this diagnostic now and it surfaces the weaknesses before something harder finds them for you. Signal Loss then becomes evidence for the exercise, not just an interesting case study you read on a Thursday.

What You’re Building

The system didn’t break because it was wrong. It broke because I finally asked it to do something hard enough to find the edges.

That’s how you find out what anything is actually made of. You use it for the thing it wasn’t designed for, and you pay attention to where it screams.

The ambitious project you’ve been avoiding because the complexity felt impossible to manage alone? The story living in your head because you couldn’t figure out how to hold all the pieces simultaneously? The half-formed thing that feels too big for one brain and too personal for a committee?

The tools exist. Systematic AI collaboration isn’t limited to single-piece content. The framework scales into territory that should have been impossible for a solo creator. But it only works if you actually have something worth making complex.

Signal Loss has six storylines, five major characters, a season-long mystery, and a premise about digital entities observing reality into existence. I’m managing it. Some days barely. Other days with something approaching confidence. Most days somewhere in between, sustained by whatever lies I’m telling myself this week about why this time will be different.

The shelter is taking shape. One thread at a time. One intersection at a time. One consistency check at a time.

The abandoned project folder doesn’t have to keep growing.

🧉 What’s the ambitious project you’ve been avoiding because the complexity felt impossible to manage alone? Drop it in the comments. I want to see what you’re building. Or what you’ve been afraid to build.

Crafted with love (and AI).

Nick "Broke It to Fix It" Quick

PS… I’m on a writing streak right now, publishing daily while the momentum lasts. If you want systematic breakdowns on AI collaboration that don’t insult your intelligence, subscribe. When the streak breaks, you’ll at least have caught the good stuff.

PPS… Want to know how I’m managing six fictional timelines without losing my mind? I’m documenting the entire Signal Loss process: the Codex system, the intersection mapping failures, the voice management chaos. Reply “fiction” and I’ll send you the breakdown when it’s ready. (Turns out AI defaults to making everyone sound like the same person. Fiction made me fix it.)
