200 Novels in 12 Months. Not One Worth Reading.

The AI writing debate just collapsed into a false binary. Here’s the question both sides are missing.

Coral Hart can generate a novel in 45 minutes. Chuck Wendig says anyone who uses AI isn’t a “real writer.” And somehow, both of them are missing the point by exactly the same amount.

(Which is impressive, honestly. That kind of symmetrical wrongness takes work.)

Here’s the context. Last weekend, the New York Times profiled Hart, a romance writer who’s cranked out 200+ novels in a year using Claude. She’s sold 50,000 copies across 21 pen names and is now selling AI writing software for $250/month. Her philosophy, delivered with the confidence of someone who’s never questioned whether speed is the right metric: “If I can generate a book in a day, and you need six months to write a book, who’s going to win the race?”

Wendig, a sci-fi author with a popular blog, responded the next day like a grenade through a window. “Writers Who Use AI Are Not Real Writers.” Full stop. No exceptions. The comment section is exactly what you’d expect. A cage match between “fuck these people and their chatbots” and “this is just manufactured outrage.”

Two camps. One says the future is automation. The other says the future is purity.

They’re both arguing about the wrong thing.

The Question They Forgot to Ask

Hart’s framework is simple: writing is a race, and AI lets you win it. Wendig’s framework is simpler: writing is sacred, and AI profanes it.

Neither of them is asking the question that actually matters.

Not should you use AI. Not can you use AI.

Are you doing the thinking?

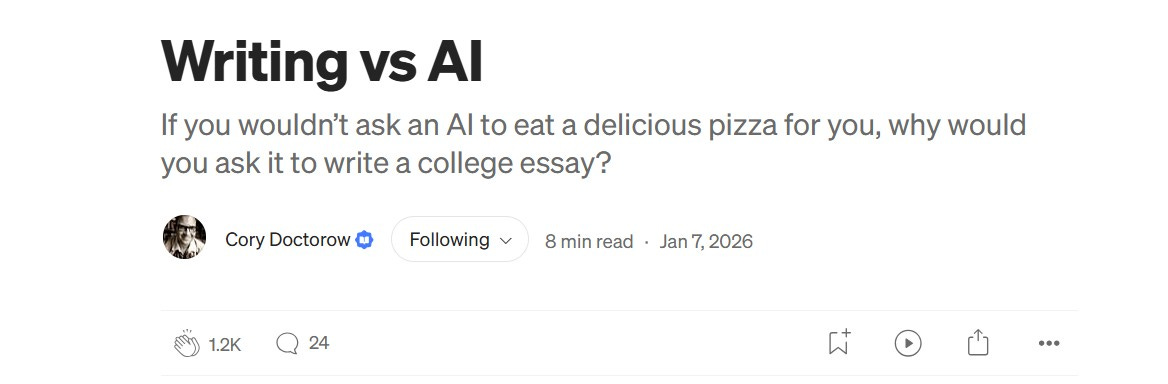

Cory Doctorow put it better than I will. Writing about students using ChatGPT for essays, he asked: “If you wouldn’t ask an AI to eat a delicious pizza for you, why would you ask it to write a college essay?”

The pizza metaphor sounds cute until you sit with it. Eating pizza isn’t about the calories entering your body. It’s about the experience of eating. The warmth, the texture, the satisfaction. You can’t outsource that and keep the benefit.

Writing works the same way.

You don’t understand something by reading about it. You understand it by wrestling it onto the page. The struggle with structure. The hunt for the right word. The moment when you realize what you actually think, three paragraphs into trying to explain it. That’s not the obstacle to the finished product. That’s the product.

When you outsource the writing, you outsource the thinking. And you end up with something that looks like thought without being thought at all.

Which brings us back to Hart’s 200 novels.

The $81 Problem

Here’s where it gets uncomfortable.

Researchers at the University of Michigan recently published a study on voice replication. They fine-tuned GPT-4o on individual authors’ complete works. Median cost: $81 per author.

Then they had MFA-trained readers from Iowa, Columbia, and NYU evaluate the output. These are people who’ve spent years learning to recognize voice, style, and craft.

The result? They preferred the AI output. Not just for “stylistic fidelity” (which you’d expect), but for writing quality. The fine-tuned AI beat human MFA candidates trying to imitate the same authors.

Eighty-one dollars to clone a voice well enough that trained experts preferred the clone.

(I’ve spent more than that on hot sauce this month. And my Cholula never put a novelist out of work.)

The study should terrify anyone who thinks voice is some mystical quality that AI can’t touch. It can. For less than a nice dinner.

But here’s what the study doesn’t prove: that the thinking transferred.

You can replicate the fingerprints without replicating the mind that left them. You can generate prose that sounds like someone without generating the ideas that made their prose worth reading in the first place.

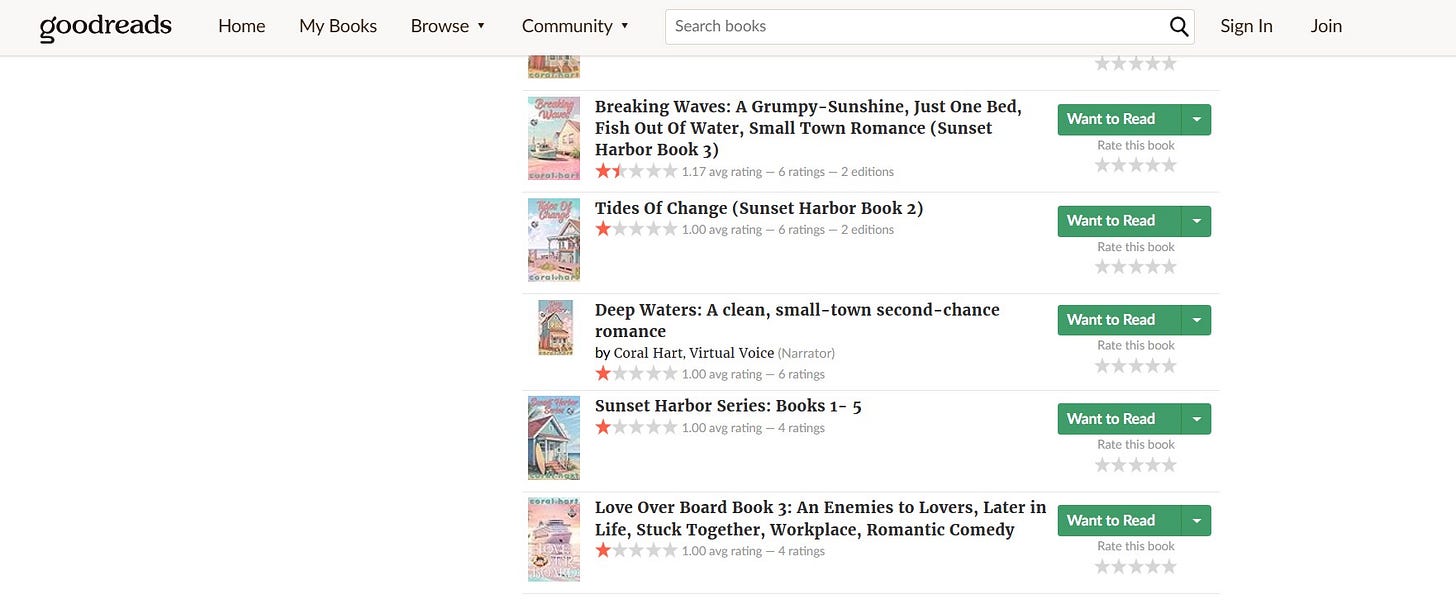

Hart’s novels average between one and two stars on Goodreads. She’s optimized for production, not for thought. The voice might pass. The substance doesn’t.

You Can’t Subtract Your Way to a Voice

I didn’t build my voice documentation system for AI. I built it for myself, a decade before ChatGPT existed.

In the 2010s, I was a ghostwriter. (Also copywriter, growth consultant, marketing mercenary. Whatever paid.) I spent years writing in other people’s voices. Thought leaders who had ideas but couldn’t get them on the page. Business owners who knew what they wanted to say but not how to say it. Founders who were too busy building to stop and write. My job was to sound like them, not me.

So I built a system. A framework for breaking voice into components: vocabulary patterns, sentence architecture, stance toward the reader, rhythm and tempo. The fingerprints people leave on their writing without realizing they’re doing it.

When AI got good enough to matter, I figured the framework would transfer.

(Narrator: It did not transfer.)

Same problem, right? Teach a machine a voice like I’d teach myself a client’s voice.

Completely different problem.

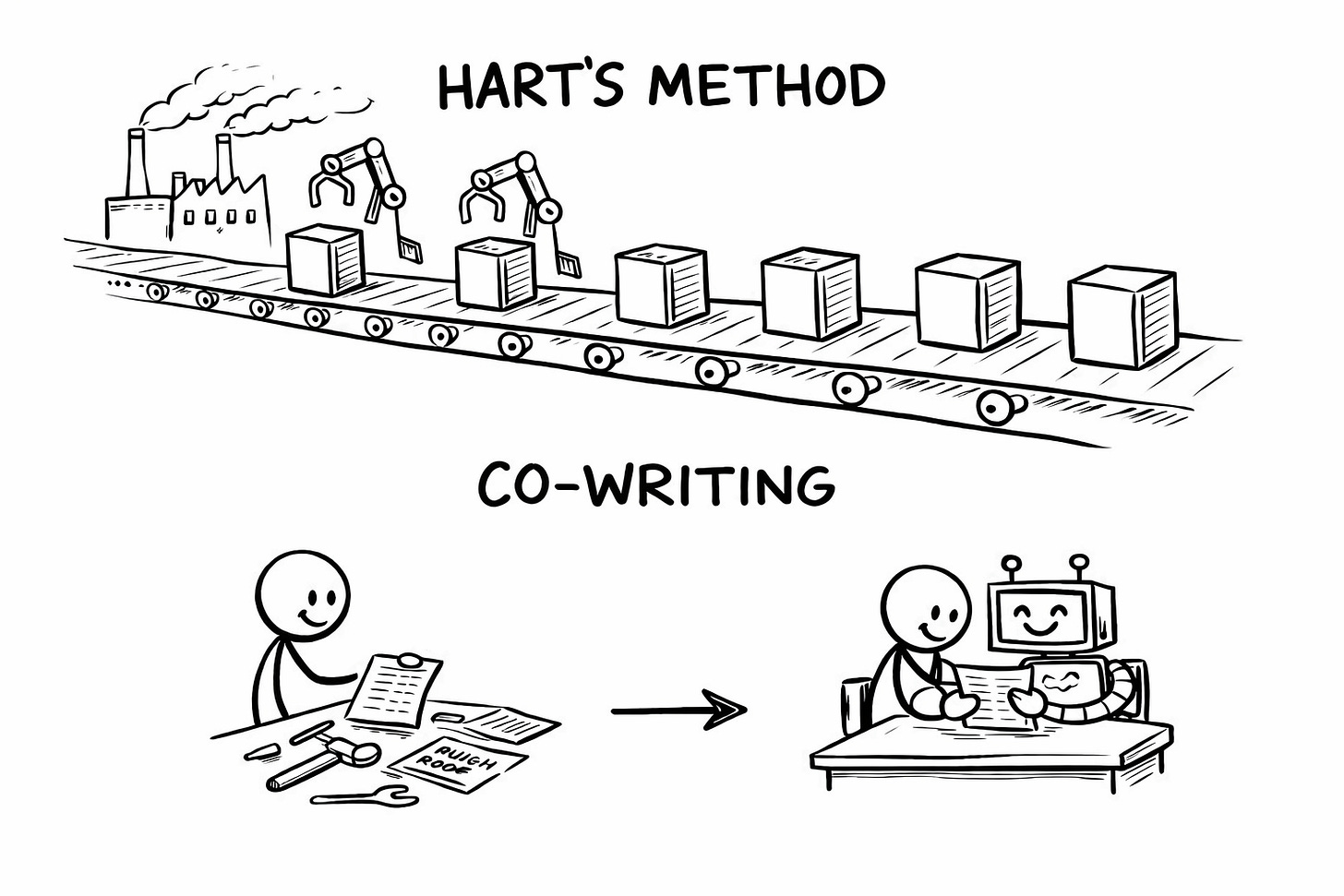

Hart recommends something she calls an “ick list.” Words you tell AI to avoid so the output doesn’t sound robotic. Steer clear of “delve.” Skip “vibrant.” Don’t say “tapestry.”

I get the impulse. Vocabulary was always part of my framework. But only a part. One slice of a much uglier pie. A lot of people think banned words are the unlock.

They aren’t. Not even close.

Dictating which words to avoid won’t get you compelling ones. You can ban “resonate” and “robust” and “elevate” until your prompt is longer than your output. AI will just find other ways to sound generic.

(It’s like telling someone “don’t be boring.” Okay. Now what? They’re still boring. They just stopped wearing khakis.)

Voice isn’t a list of banned words. It’s the thinking pattern that generates the words. The rhythm. The structure. The tangents you can’t resist. The way you land a punchline. The reason your readers can spot your writing three sentences in, even without a byline.

AI doesn’t have intuitions. It has patterns. Explaining your voice to a pattern-matching machine means articulating things you’ve never had to articulate before. The fingerprints you didn’t know you were leaving.

This is where Hart’s approach collapses. She automates. Feeds AI an outline, gets a novel, publishes. The thinking happened once (when she built her system). Now it’s just production. A slop factory with a Fabio clone on every cover.

Co-writing keeps you in the loop. AI handles scaffolding. You handle the ideas. The output sounds like you because you never stopped thinking.

You just stopped doing the mechanical labor alone.

The Race That Isn’t

“Who’s going to win the race?”

Hart asked this like it was rhetorical. Like the answer was obviously “the faster person.”

But a race implies a finish line worth crossing. A prize worth winning.

What’s the prize for 200 one-star novels? You get to be the most prolific writer nobody recommends.

She’s made six figures. Money is real. But 50,000 sales across 200 books works out to about 250 copies per title. Most of those readers didn’t come back.

(The real money, as several analysts have pointed out, isn’t in the books. It’s in the $250/month software and the coaching business. The novels are the proof of concept. The product is the dream of becoming a slop factory yourself.)

Hart’s winning a race to produce the most forgettable crap at the lowest possible cost.

Let her win it.

Wendig’s running a different race. The race to be the most righteous voice in a burning building. His position isn’t “be thoughtful about AI.” It’s “anyone who touches it isn’t a real writer.” Full stop.

Hart’s mistake is obvious: she let the machine think for her. Wendig’s mistake is subtler: he let fear of the machine excuse him from thinking about it at all.

(Plenty of people wrote garbage long before ChatGPT existed. Believe me. I created some of it. Every piece of slop has a human fingerprint on the publish button.)

Two races. Neither worth running.

The slop flood is real. I’m not going to tell you quality always wins or that the cream rises. That’s cope. The factories are burying creators who can’t keep pace.

But here’s what the factories can’t do: think.

They can generate. They can flood every category with optimized covers and keyword-stuffed descriptions. What they can’t do is develop an idea that requires a specific human brain. They can’t notice what no one else noticed. They can’t make you feel like the writer sees you.

Wendig’s wrong that using AI disqualifies you. Hart’s wrong that speed is the point.

The question isn’t whether you use the tool. It’s whether you’re still doing the thinking.

Co-writing isn’t a compromise between their positions. It’s a rejection of the premise that writing is a race at all.

🧉 What’s one thing you refuse to let AI do for you? And one thing you happily hand over? There’s no right answer. But there’s definitely your answer. Drop it below.

Crafted with love (and AI),

Nick “Ghost of Ghostwriters Past” Quick

PS… If you’ve been doom-scrolling the Hart/Wendig discourse all weekend and still feel like neither camp quite gets it, you’re not alone. That’s what we’re building here. Every day, I publish something that helps you navigate this without picking a side you’ll regret. Subscribe if you’re tired of the false binary. And if you know someone else who’s been caught in the crossfire this week, forward this before they join the wrong army.

PPS… Hart’s “ick list” is a band-aid. A Voiceprint is a blueprint. If you want to actually document what makes your writing yours (not just the words to avoid, but the patterns that make you recognizable) the Voiceprint Quick-Start Guide walks you through the VAST framework in 15 minutes. Grab it free: